I spent the better part of 2022 working with a healthcare consortium that wanted to run machine learning models across patient data from six different hospital systems. The catch: none of them could legally share raw patient data with each other, and nobody trusted a single cloud provider to see it all in plaintext. We needed a way to process sensitive data without anyone, not even the cloud provider, being able to read it while it was being computed on. That project was my deep introduction to confidential computing, and it changed how I think about data security in the cloud.

The Missing Piece in Data Protection

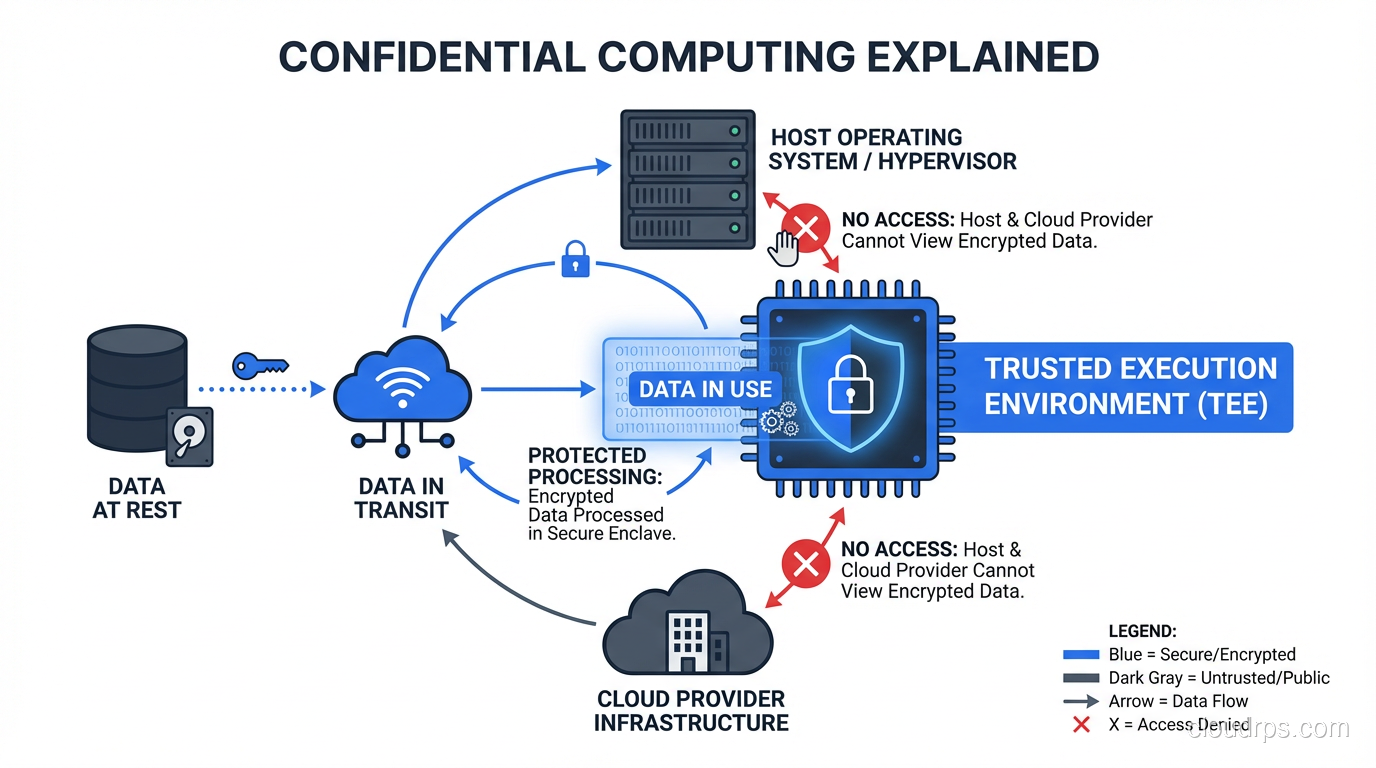

If you have been working in cloud security for any length of time, you know the standard playbook. You encrypt data at rest with AES-256 so nobody can read your storage volumes. You encrypt data in transit with TLS so nobody can sniff it off the wire. But what happens when your application actually needs to use that data? It gets decrypted into memory, processed by the CPU, and sits there in plaintext. That is the gap.

For decades, we just accepted this as a fundamental limitation. If you wanted to compute on data, it had to be unencrypted in memory. Your database had to decrypt rows to run queries. Your ML model had to see plaintext features to make predictions. And anyone with privileged access to the host, whether that is a rogue sysadmin, a compromised hypervisor, or a government agency armed with a court order, could potentially read that data right out of memory.

Confidential computing closes that gap. It protects data in use, the third and final state of data, by leveraging hardware-based isolation to create secure enclaves where data can be processed without being exposed to the rest of the system. Not to the operating system, not to the hypervisor, not even to the cloud provider’s own staff.

How Trusted Execution Environments Actually Work

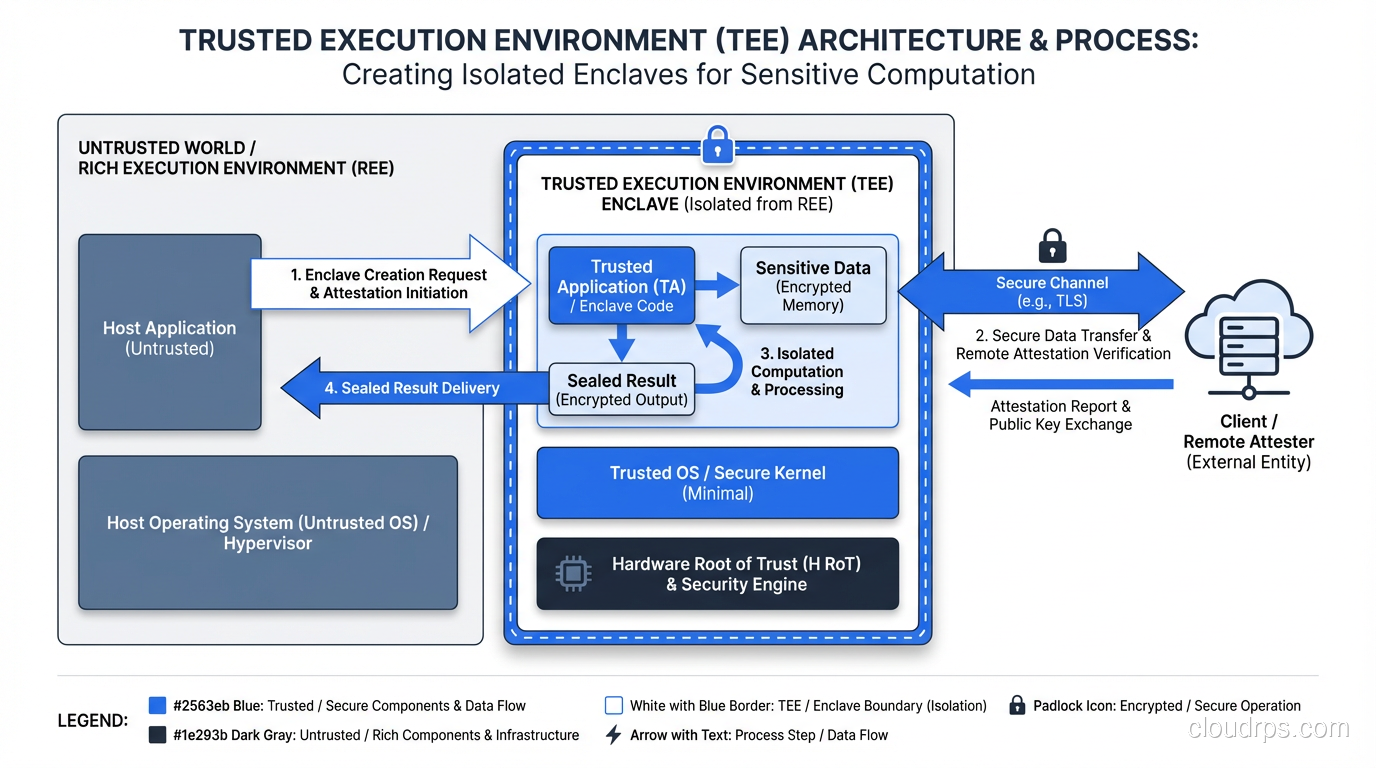

A Trusted Execution Environment (TEE) is a hardware-enforced isolated area within a processor that guarantees code and data loaded inside it are protected with respect to confidentiality and integrity. That sounds academic, so let me break down what actually happens at the hardware level.

When you create a TEE, the processor carves out a region of memory that is encrypted with keys that only the CPU itself knows. The encryption and decryption happen in the memory controller inside the CPU package, so data is already ciphertext by the time it crosses the memory bus to DRAM. This means that even if someone physically pulls the memory DIMMs out of the server and puts them under an electron microscope, they see nothing but ciphertext.

The key properties of a TEE are:

Isolation: Code and data inside the TEE cannot be read or modified by any code outside it, including the operating system, hypervisor, BIOS, or firmware. The CPU hardware enforces these boundaries.

Attestation: Before you send sensitive data to a TEE, you can cryptographically verify that the enclave is running the exact code you expect, on genuine hardware, with the security properties you require. This is like a hardware-signed proof that says “yes, this is a real Intel SGX enclave running SHA-256 hash XYZ of your code.”

Sealing: TEEs can encrypt data to persistent storage in a way that only that specific enclave on that specific platform can decrypt it later. This lets enclaves maintain state across restarts.

Memory encryption: All data in the TEE’s memory region is encrypted transparently by the CPU. The encryption uses hardware-accelerated symmetric algorithms that add minimal latency to memory operations.
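To make sealing concrete, here is a toy model of how a sealing key can be bound to both the platform and the enclave. It is loosely modeled on SGX’s option to derive sealing keys from the enclave’s measurement. The names (`derive_sealing_key`, the `b"seal-v1"` label, the sample secrets) are hypothetical, HMAC-SHA256 stands in for the CPU’s internal key derivation, and on real hardware the platform secret never leaves the chip.

```python
import hashlib
import hmac


def derive_sealing_key(platform_secret: bytes, enclave_measurement: bytes) -> bytes:
    """Toy sealing-key derivation (hypothetical, not a real SGX API).

    Real hardware keeps platform_secret inside the CPU; HMAC-SHA256 stands in
    for its internal key-derivation function.
    """
    # Mixing in the measurement means different enclave code gets a different key.
    return hmac.new(platform_secret, b"seal-v1" + enclave_measurement, hashlib.sha256).digest()


# Hypothetical per-CPU secrets and enclave measurements.
cpu_a = b"secret fused into CPU A"
cpu_b = b"secret fused into CPU B"
enclave_v1 = hashlib.sha256(b"enclave code v1").digest()
enclave_v2 = hashlib.sha256(b"enclave code v2").digest()

key = derive_sealing_key(cpu_a, enclave_v1)
assert key == derive_sealing_key(cpu_a, enclave_v1)  # same enclave, same CPU: can unseal after restart
assert key != derive_sealing_key(cpu_a, enclave_v2)  # modified enclave code: different key
assert key != derive_sealing_key(cpu_b, enclave_v1)  # same code on another machine: different key
```

The sealed blob itself would then be encrypted with an authenticated cipher such as AES-GCM under that key; that step is left out because Python’s standard library has no AES.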

The attestation piece is what makes this truly powerful for cloud scenarios. In a traditional cloud computing setup, you have to trust the cloud provider completely. With TEE attestation, you can verify that your workload is running in a genuine secure enclave before you hand over any sensitive data. Trust shifts from “I trust AWS/Azure/GCP’s employees” to “I trust the laws of mathematics, semiconductor physics, and the chip vendor whose keys sign the attestation.”
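Here is a minimal sketch of the three checks a verifier performs on a report before releasing data: is it genuinely signed, is it fresh, and does it describe approved code. In real deployments the report carries an asymmetric signature that chains back to the chip vendor (for SEV-SNP, a certificate chain rooted in AMD), and you would use the vendor’s or cloud provider’s verification tooling. Python’s standard library has no ECDSA, so HMAC stands in for that signature here; `AttestationReport`, `hardware_sign`, and `verify_report` are hypothetical names.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    measurement: bytes  # SHA-256 of the code loaded into the TEE
    nonce: bytes        # verifier's challenge, echoed back to prove freshness
    signature: bytes    # produced by the hardware's attestation key


def _payload(measurement: bytes, nonce: bytes) -> bytes:
    # Both fields are fixed-length (32 bytes), so plain concatenation is unambiguous.
    return measurement + nonce


def hardware_sign(attestation_key: bytes, measurement: bytes, nonce: bytes) -> AttestationReport:
    """Stand-in for the CPU or security processor issuing a signed report."""
    sig = hmac.new(attestation_key, _payload(measurement, nonce), hashlib.sha256).digest()
    return AttestationReport(measurement, nonce, sig)


def verify_report(report: AttestationReport, trusted_key: bytes,
                  expected_nonce: bytes, approved: set) -> bool:
    """Return True only if the report is genuine, fresh, and for approved code."""
    expected_sig = hmac.new(trusted_key, _payload(report.measurement, report.nonce),
                            hashlib.sha256).digest()
    if not hmac.compare_digest(expected_sig, report.signature):
        return False  # not signed by hardware we trust
    if not hmac.compare_digest(report.nonce, expected_nonce):
        return False  # stale or replayed report
    return report.measurement in approved  # running the code we signed off on


# Usage with hypothetical values:
vendor_key = secrets.token_bytes(32)
approved = {hashlib.sha256(b"training-job v1.4").digest()}
nonce = secrets.token_bytes(32)

good = hardware_sign(vendor_key, hashlib.sha256(b"training-job v1.4").digest(), nonce)
patched = hardware_sign(vendor_key, hashlib.sha256(b"training-job v1.4-patched").digest(), nonce)
assert verify_report(good, vendor_key, nonce, approved)
assert not verify_report(patched, vendor_key, nonce, approved)
```

The nonce matters as much as the signature: without it, an attacker could replay an old, genuine report from a machine that has since been tampered with.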

The Three Approaches: Intel SGX, AMD SEV, and ARM TrustZone

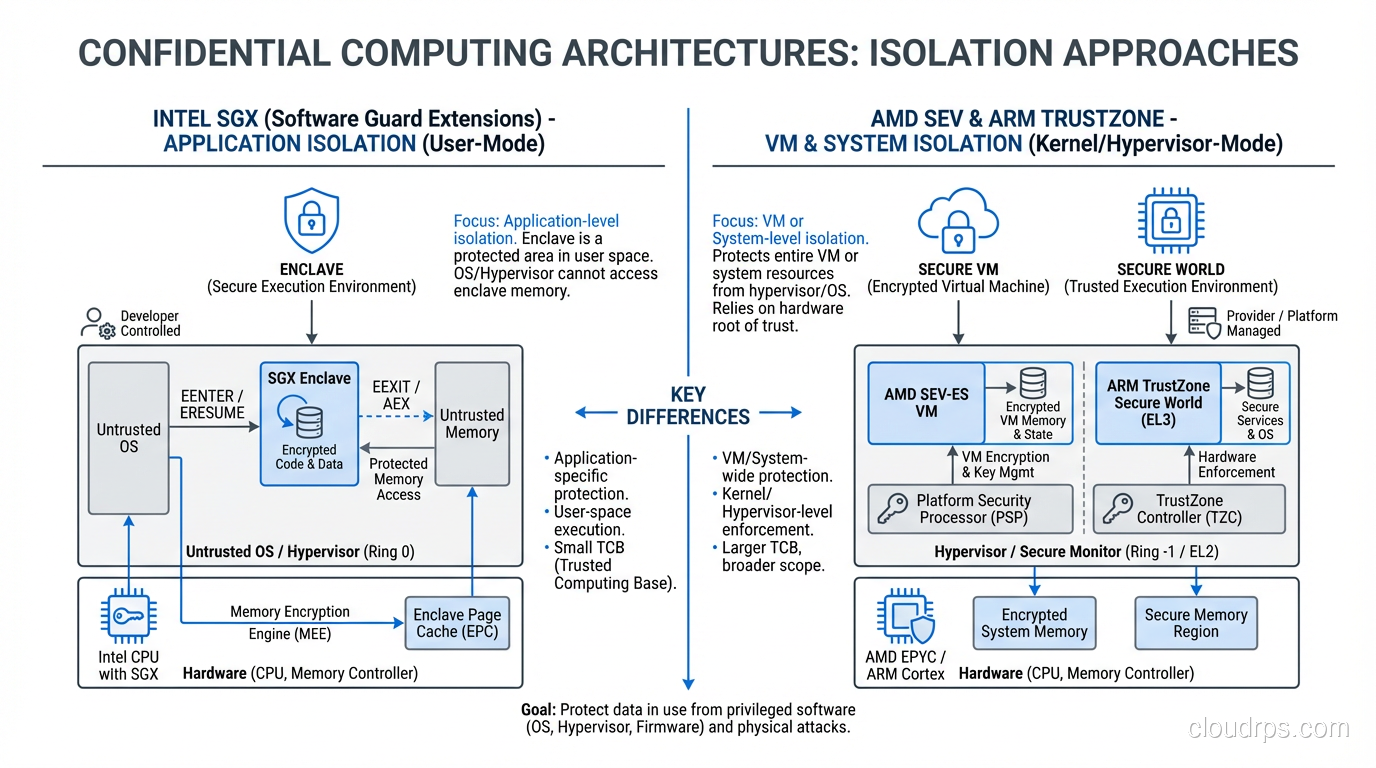

Not all TEEs are created equal. The three major chip vendors have taken very different approaches to confidential computing, and the differences matter for how you architect your systems.

Intel SGX (Software Guard Extensions)

Intel SGX works at the application level. You define specific “enclaves” within your application, and only the code and data inside those enclaves get hardware protection. Think of it as a vault inside your application: you explicitly move sensitive data in, process it, and move results out.

The good: SGX provides the smallest possible trusted computing base (TCB). Since only your enclave code runs in the protected environment, not the entire OS or hypervisor, the attack surface is minimal. If the OS is compromised, your enclave data is still safe.

The bad: You have to rewrite your application to be “enclave-aware.” You cannot just take an existing binary and run it in SGX (well, you sort of can with frameworks like Gramine and Occlum, but there are limitations). Enclave memory is also limited, historically to 128-256MB of encrypted memory, though newer versions have expanded this significantly.

I have seen teams spend months porting applications to SGX only to discover that their workload’s memory access patterns caused severe performance degradation due to EPC (Enclave Page Cache) paging. If your working set exceeds the EPC size, the CPU has to encrypt and decrypt pages as they swap in and out, which can tank throughput by 10x or more.

AMD SEV (Secure Encrypted Virtualization)

AMD took a completely different approach. Instead of protecting individual application enclaves, SEV encrypts the entire virtual machine’s memory. Each VM gets its own encryption key, managed by a dedicated security processor, and neither the hypervisor nor other VMs can read its memory.

SEV has evolved through several generations. The original SEV encrypted VM memory but did not protect register state. SEV-ES (Encrypted State) added register encryption. SEV-SNP (Secure Nested Paging) added integrity protection to prevent the hypervisor from replaying, remapping, or corrupting encrypted memory pages.
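When you are debugging, it helps to know which of these generations a guest actually got. Recent Linux kernels print the active memory-encryption features at boot, and a small parser can pick out the strongest one. This is a sketch: the exact log wording is an assumption based on recent kernels, so check it against your distribution, and on a real guest you would feed it the output of `dmesg` (which often needs root). A self-check like this is a convenience only; just remote attestation proves to a third party what is running.

```python
import re
from typing import Optional

# SEV generations in increasing order of protection.
GENERATIONS = ("SEV", "SEV-ES", "SEV-SNP")


def strongest_sev_generation(kernel_log: str) -> Optional[str]:
    """Return the strongest SEV generation a Linux guest reports in its boot log.

    Assumes the format recent kernels print, e.g.
    "Memory Encryption Features active: AMD SEV SEV-ES SEV-SNP".
    """
    for line in kernel_log.splitlines():
        match = re.search(r"Memory Encryption Features active:(.*)", line)
        if match:
            features = set(match.group(1).split())
            active = [g for g in GENERATIONS if g in features]
            return active[-1] if active else None
    return None


sample = "[    0.000000] Memory Encryption Features active: AMD SEV SEV-ES SEV-SNP"
print(strongest_sev_generation(sample))  # SEV-SNP
```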

The good: No application changes required. Your existing VMs, operating systems, and applications run unmodified inside a confidential VM. This is a massive advantage for adoption because you do not have to rewrite anything.

The bad: The trusted computing base is much larger than SGX. You are trusting the entire guest OS kernel, all drivers, and all application code inside the VM. If someone compromises your guest OS, confidential VM or not, they can read your data. SEV protects you from the cloud provider and the hypervisor, but not from vulnerabilities within your own VM.

For most enterprise use cases, I recommend AMD SEV-SNP as the starting point. The “no code changes” story is just too compelling, and the threat model (protecting data from the cloud provider) is the one most customers actually care about.

ARM TrustZone

ARM TrustZone splits the processor into two worlds: a “secure world” and a “normal world.” It is primarily used in mobile devices, IoT, and embedded systems rather than cloud servers. You will encounter it in smartphones (on most Android devices, it backs the secure storage for biometric data and cryptographic keys) and in edge computing scenarios.

TrustZone is less relevant for cloud workloads, but it is worth understanding because it shows up in edge computing architectures where you need to process sensitive data on IoT devices before sending results to the cloud.

Cloud Provider Confidential Computing Offerings

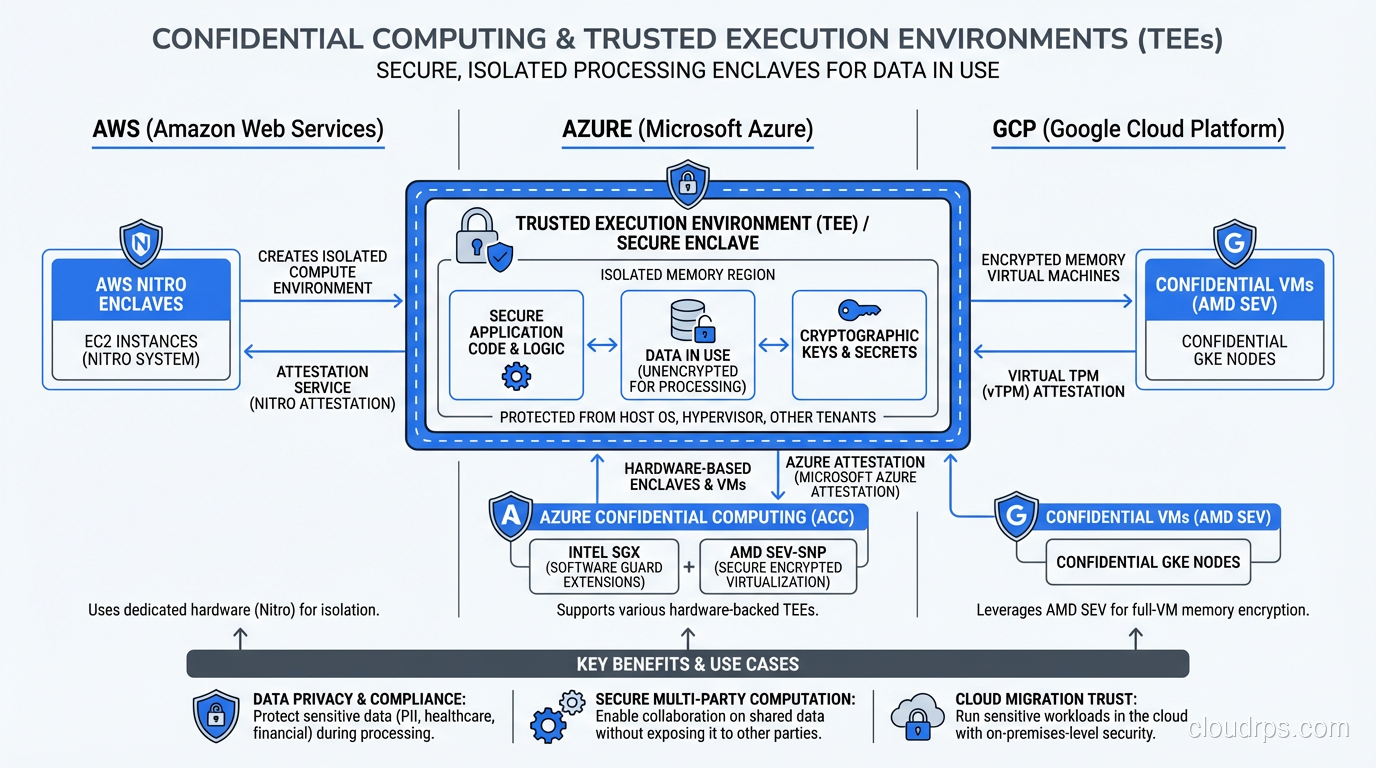

All three major cloud providers now offer confidential computing services, but the implementations and maturity levels vary significantly.

Azure Confidential Computing

Microsoft was the first cloud provider to go big on confidential computing, and it shows. Azure offers both SGX-based confidential computing (DCsv2 and DCsv3 instances) and AMD SEV-SNP based confidential VMs. They also have confidential containers on AKS, confidential ledger (a tamper-proof data store), and Always Encrypted with secure enclaves for SQL Server.

Azure’s investment here is the deepest. If confidential computing is a hard requirement, Azure should be your first stop. Their documentation, tooling, and partner ecosystem around this are ahead of the other providers.

GCP Confidential VMs

Google Cloud offers Confidential VMs powered by AMD SEV on N2D and C2D instances, with the stronger SEV-SNP available on N2D. They also have Confidential GKE Nodes for running Kubernetes workloads in confidential VMs and Confidential Space for multi-party computation scenarios.

Google’s approach leans heavily on AMD SEV, which means you get the “no code changes” benefit. In practice, turning on a Confidential VM in GCP is almost as simple as checking a box when you create an instance. The performance overhead is typically 2-6% for most workloads, which is remarkably low.
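The “checkbox” is essentially one extra flag at instance creation. The sketch below only assembles the command (it does not run it) so the moving parts are visible. The image family and project are placeholders you must replace with a confidential-capable image, and the flag names plus the Milan and maintenance-policy constraints reflect my understanding of current gcloud behavior, so verify them against Google’s Confidential VM documentation before relying on this.

```python
import shlex
from typing import List


def confidential_vm_command(name: str, zone: str, image_family: str,
                            image_project: str,
                            machine_type: str = "n2d-standard-4") -> List[str]:
    """Build (but do not run) a gcloud command for an AMD SEV-SNP Confidential VM.

    Flag names reflect my understanding of the gcloud CLI; check them
    against the current Confidential VM documentation before use.
    """
    return [
        "gcloud", "compute", "instances", "create", name,
        f"--zone={zone}",
        f"--machine-type={machine_type}",       # SEV-SNP runs on N2D machine types
        "--confidential-compute-type=SEV_SNP",  # the "checkbox"
        "--min-cpu-platform=AMD Milan",         # SEV-SNP needs Milan or newer
        "--maintenance-policy=TERMINATE",       # SEV-SNP VMs are not live-migrated
        f"--image-family={image_family}",       # must be a confidential-capable image
        f"--image-project={image_project}",
    ]


# Hypothetical names; substitute a supported image for your project.
print(shlex.join(confidential_vm_command("cc-demo", "us-central1-a",
                                         "my-image-family", "my-image-project")))
```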

AWS Nitro Enclaves

AWS took a different path with Nitro Enclaves. Rather than using Intel SGX or AMD SEV, they built their own isolation technology on top of the Nitro hypervisor. A Nitro Enclave is an isolated virtual machine with no persistent storage, no network access, and no interactive access. It communicates with the parent instance only through a constrained vsock connection.
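Because that vsock channel is the enclave’s only door, every Nitro Enclaves application ends up defining a small wire protocol over it. A minimal sketch, assuming a length-prefixed framing of my own choosing (not an AWS format): vsock is a byte stream with no message boundaries, so each message carries a 4-byte length header. `connect_to_enclave` uses Python’s `socket.AF_VSOCK`, which is Linux-only, and the CID and port you pass are whatever you assigned when launching the enclave; all helper names are hypothetical.

```python
import socket
import struct

HEADER = struct.Struct("!I")  # 4-byte big-endian payload length


def send_msg(sock: socket.socket, payload: bytes) -> None:
    """Send one length-prefixed message; vsock streams have no message boundaries."""
    sock.sendall(HEADER.pack(len(payload)) + payload)


def _recv_exact(sock: socket.socket, n: int) -> bytes:
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("peer closed the connection mid-message")
        buf.extend(chunk)
    return bytes(buf)


def recv_msg(sock: socket.socket) -> bytes:
    """Receive one length-prefixed message."""
    (length,) = HEADER.unpack(_recv_exact(sock, HEADER.size))
    return _recv_exact(sock, length)


def connect_to_enclave(cid: int, port: int) -> socket.socket:
    """Open a vsock stream from the parent instance to an enclave (Linux only)."""
    sock = socket.socket(socket.AF_VSOCK, socket.SOCK_STREAM)
    sock.connect((cid, port))
    return sock


# The framing can be exercised locally with an ordinary socket pair:
parent, enclave = socket.socketpair()
send_msg(parent, b'{"op": "ping"}')
print(recv_msg(enclave))
parent.close()
enclave.close()
```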

Nitro Enclaves are not technically TEEs in the traditional sense since the isolation is provided by the Nitro hypervisor rather than CPU-level memory encryption. However, AWS argues that since Nitro is a purpose-built, minimal, formally verified hypervisor, the security properties are equivalent. This is a valid engineering argument, but it does mean you are trusting AWS’s implementation rather than silicon-level guarantees.

AWS also offers AMD SEV-SNP based instances (M6a, C6a, R6a with SNP support), so you can get hardware-based confidential computing on AWS as well.

Real-World Use Cases

Let me share some scenarios where I have seen confidential computing deliver real value, versus where it was security theater.

Multi-Party Computation

This was my healthcare consortium project. Six hospitals needed to train a model on combined patient data without any single party seeing the others’ data. We used confidential VMs where the training code ran inside a TEE, with attestation proving to each hospital that only the approved training code could access their data. Each hospital’s encrypted records went in, training happened inside the confidential VM, and only the resulting model came out. No hospital ever had to share raw records with another party.
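Attestation only helps if each party acts on it. One way to express the consortium’s rule, assuming each hospital encrypts its records under its own key and releases that key only to the exact training code it approved: training starts only when every party has released. The `Hospital` class and `collect_keys` function are hypothetical, and `attested_measurement` is assumed to come from a report whose signature and freshness have already been verified.

```python
from __future__ import annotations

import hashlib
import hmac
import secrets
from dataclasses import dataclass


@dataclass
class Hospital:
    name: str
    approved_measurement: bytes  # hash of the training code this party signed off on
    data_key: bytes              # key that decrypts this party's records

    def release_key(self, attested_measurement: bytes) -> bytes | None:
        """Hand over the data key only to the exact code this party approved."""
        if hmac.compare_digest(attested_measurement, self.approved_measurement):
            return self.data_key
        return None


def collect_keys(parties: list[Hospital], attested_measurement: bytes) -> dict[str, bytes]:
    """Training may start only if every party releases its key."""
    keys = {}
    for party in parties:
        key = party.release_key(attested_measurement)
        if key is None:
            raise PermissionError(f"{party.name} did not approve this training code")
        keys[party.name] = key
    return keys


# Usage with hypothetical values: six hospitals approve the same training job.
training_code = hashlib.sha256(b"approved training job").digest()
hospitals = [Hospital(f"hospital-{i}", training_code, secrets.token_bytes(32))
             for i in range(1, 7)]
print(len(collect_keys(hospitals, training_code)))
```

Requiring unanimous release is a deliberate choice: if any hospital withholds its key, the job never sees a partial data set it was not designed for.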

This pattern works for any scenario where multiple organizations need to compute over shared sensitive data: financial fraud detection across banks, supply chain optimization across competitors, or genomic research across institutions.

Regulatory Compliance

A European fintech I worked with had to process payment data in a cloud environment while satisfying their regulator that even the cloud provider could not access the data. Zero trust security principles got them most of the way there, but the regulator specifically asked about memory-level protection. Confidential VMs with attestation reports gave the compliance team a concrete, auditable artifact to hand over.

Secret Processing

When you need to process secrets and credentials in an environment where you do not fully trust the infrastructure layer, TEEs shine. Think about key management systems, certificate authorities, or credential vaults that need to operate in shared cloud environments. Running your HashiCorp Vault in a confidential VM adds a genuine layer of protection for the master keys.

Where You Probably Do Not Need It

I have also talked teams out of using confidential computing. If your threat model is “external hackers trying to break in through the network,” standard security controls (firewalls, proper access management, encryption at rest and in transit) are the right answer. Confidential computing protects against insider threats and infrastructure-level compromise, which are real but not universal concerns.

If you are running a SaaS application in a multi-tenant architecture and your concern is tenant isolation, confidential computing is overkill. Proper virtualization and hypervisor-level isolation with good network segmentation handles that threat model at much lower cost and complexity.

Performance Overhead and Practical Trade-offs

Let me be honest about the costs because I have seen vendor marketing gloss over these.

AMD SEV-SNP confidential VMs add roughly 2-6% overhead for most workloads. This comes primarily from encrypting and decrypting data as it moves between the CPU caches and DRAM; data already in cache is held in plaintext inside the CPU, so cache hits do not pay the encryption cost. Memory-intensive workloads (large in-memory databases, big data processing) miss the cache more often and will see higher overhead than compute-bound workloads. For most applications, you will not notice the difference.

Intel SGX enclaves have higher and more variable overhead. If your working set fits in the EPC, overhead is around 5-15%. If it does not fit, and the CPU has to page encrypted memory in and out, you can see 2-10x slowdowns. I have seen teams budget 20% overhead for SGX and end up at 200% because they did not account for EPC pressure.
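The EPC cliff is easier to see with a back-of-the-envelope model. This sketch assumes pages are accessed uniformly at random and ignores compute time entirely, so it describes a worst-case, memory-bound workload. The 128 MB EPC and the 10x cost per paged-in access are hypothetical inputs, not measurements; the point is the shape: 1x while the working set fits, then a slowdown that climbs quickly with the fraction of accesses that miss the EPC.

```python
def sgx_paging_slowdown(working_set_mb: float, epc_mb: float,
                        page_fault_cost: float) -> float:
    """Rough slowdown factor for an SGX workload under EPC pressure.

    Toy model: pages are accessed uniformly at random, an access to a resident
    page costs 1 unit, and an access that must be paged in costs
    page_fault_cost units (a hypothetical figure you would calibrate yourself).
    """
    if working_set_mb <= epc_mb:
        return 1.0  # everything fits: no paging
    miss_rate = 1.0 - epc_mb / working_set_mb
    return (1.0 - miss_rate) * 1.0 + miss_rate * page_fault_cost


for ws in (96, 160, 256, 512):
    print(ws, "MB ->", round(sgx_paging_slowdown(ws, epc_mb=128, page_fault_cost=10), 2), "x")
```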

Attestation adds latency to application startup and to any workflow where you verify enclave integrity before sending data. Budget 100-500ms for remote attestation depending on the implementation.

Development complexity is the hidden cost. For SGX, you need developers who understand enclave programming, which is a niche skill set. For SEV-based confidential VMs, the development overhead is minimal since your code runs unmodified, but you still need to understand attestation workflows and secure boot chains.

Cost: Confidential VM instances typically cost 5-10% more than their non-confidential equivalents. SGX-capable instances can be 20-30% more expensive and have limited availability in certain regions.
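Price premium and performance overhead compound, because a slower job also runs for more billed hours. A small helper makes the arithmetic explicit, assuming hourly billing and an otherwise unchanged job; the function name is mine and the inputs are the ranges quoted above. For SEV-SNP confidential VMs, those ranges work out to roughly 7% to 17% more per job.

```python
def relative_cost_per_job(price_premium: float, slowdown: float) -> float:
    """Cost of a fixed job relative to running it on a non-confidential instance.

    price_premium=0.05 means the instance costs 5% more per hour;
    slowdown=0.04 means the job runs 4% longer, so you pay for 4% more hours.
    """
    return (1.0 + price_premium) * (1.0 + slowdown)


# Confidential VM: 5-10% price premium, 2-6% SEV-SNP overhead.
low = relative_cost_per_job(0.05, 0.02)
high = relative_cost_per_job(0.10, 0.06)
print(f"SEV-SNP VM: {low - 1:.1%} to {high - 1:.1%} more per job")

# SGX with the working set inside the EPC: 20-30% premium, 5-15% overhead.
print(f"SGX (fits in EPC): {relative_cost_per_job(0.20, 0.05) - 1:.1%} "
      f"to {relative_cost_per_job(0.30, 0.15) - 1:.1%} more per job")
```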

My rule of thumb: if you are evaluating confidential computing, start with AMD SEV-SNP confidential VMs. The overhead is low, the compatibility is high, and you can migrate existing workloads without rewrites. Only go to SGX if you need application-level enclaves with a minimal TCB, which usually means you are building a security product, not deploying a business application.
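That rule of thumb, together with the cases earlier where confidential computing is overkill, fits in a few lines. A sketch, assuming you can reduce your threat model to a handful of labels; the `Threat` names and the `recommend` function are hypothetical, and the output is a starting point for discussion rather than a verdict.

```python
from enum import Enum, auto
from typing import AbstractSet


class Threat(Enum):
    EXTERNAL_NETWORK_ATTACKER = auto()
    TENANT_ISOLATION = auto()
    MALICIOUS_PROVIDER_ADMIN = auto()
    COMPROMISED_HYPERVISOR = auto()
    REGULATOR_REQUIRES_MEMORY_PROTECTION = auto()
    MULTI_PARTY_DATA_SHARING = auto()


# Threats that only protecting data in use addresses.
DATA_IN_USE_THREATS = frozenset({
    Threat.MALICIOUS_PROVIDER_ADMIN,
    Threat.COMPROMISED_HYPERVISOR,
    Threat.REGULATOR_REQUIRES_MEMORY_PROTECTION,
    Threat.MULTI_PARTY_DATA_SHARING,
})


def recommend(threats: AbstractSet[Threat], building_security_product: bool = False) -> str:
    """Encode the rule of thumb above; not a substitute for a real threat review."""
    if not threats & DATA_IN_USE_THREATS:
        return "standard controls"  # firewalls, access management, encryption at rest/in transit
    if building_security_product:
        return "SGX enclave"  # minimal TCB justifies the porting effort
    return "SEV-SNP confidential VM"  # low overhead, no code changes


print(recommend({Threat.EXTERNAL_NETWORK_ATTACKER}))
print(recommend({Threat.MALICIOUS_PROVIDER_ADMIN}))
```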

Getting Started: A Practical Roadmap

If you have read this far and think confidential computing is relevant to your workloads, here is how I would approach adoption.

Step 1: Define your threat model. Be specific. Are you protecting against a malicious cloud provider admin? A compromised hypervisor? A regulatory requirement? A contractual obligation with a partner? The threat model determines whether you need TEE-level protection or whether standard encryption is sufficient.

Step 2: Start with a confidential VM. Pick one workload that handles your most sensitive data. Deploy it in an AMD SEV-SNP confidential VM on your cloud provider of choice. Measure the performance overhead against your baseline. In most cases, you will be pleasantly surprised.

Step 3: Implement attestation. This is where the real security value comes from. Set up remote attestation so that before any sensitive data enters the confidential VM, the sending party can verify the VM is running approved code on genuine hardware. Without attestation, you have encrypted memory but no proof of what is running inside it.

Step 4: Integrate with your security toolchain. Feed attestation reports into your SIEM. Add confidential computing verification to your CI/CD pipeline. Make attestation part of your compliance audit trail.

Step 5: Evaluate SGX for high-value targets. If you have specific workloads (key management, multi-party computation protocols, or sensitive algorithms) where you need enclave-level isolation, now is the time to investigate SGX or its successors.
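Steps 3 and 4 meet in one small piece of code. Attestation reports from SGX, SEV-SNP, and TDX all include a 64-byte field that the workload fills in itself; a common pattern is to put a hash of the verifier’s nonce and the workload’s public key there, so the sender knows the key it encrypts to lives inside the attested environment. The sketch below assumes the report’s signature and measurement have already been checked, uses SHA-512 simply because its digest is exactly 64 bytes, and emits a JSON event for your SIEM. The function names and event fields are hypothetical.

```python
import hashlib
import hmac
import json
import secrets
from datetime import datetime, timezone


def expected_report_data(nonce: bytes, public_key: bytes) -> bytes:
    """64 bytes the workload is assumed to have placed in the report's user-data field."""
    if len(nonce) != 32:
        raise ValueError("nonce must be 32 bytes so the concatenation is unambiguous")
    return hashlib.sha512(nonce + public_key).digest()


def key_is_bound(report_data: bytes, nonce: bytes, public_key: bytes) -> bool:
    """True if the attested workload vouched for this public key in this session."""
    return hmac.compare_digest(report_data, expected_report_data(nonce, public_key))


def audit_event(workload: str, measurement: bytes, verified: bool) -> str:
    """Serialize the attestation outcome as one JSON line for a SIEM to ingest."""
    return json.dumps({
        "event_type": "tee_attestation",
        "workload": workload,
        "measurement": measurement.hex(),
        "verified": verified,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })


# Usage with hypothetical values:
nonce = secrets.token_bytes(32)
workload_key = b"placeholder public key bytes"
report_data = expected_report_data(nonce, workload_key)  # produced inside the TEE

ok = key_is_bound(report_data, nonce, workload_key)
print(audit_event("vault-prod", hashlib.sha256(b"vault image").digest(), ok))
```

Logging failed verifications as well as successful ones is what turns attestation into an audit trail: a sudden change in measurement or a run of failures is exactly what you want your SIEM to alert on.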

The Future of Confidential Computing

The Confidential Computing Consortium (part of the Linux Foundation) is driving standardization across the industry. Intel’s TDX (Trust Domain Extensions), which complements SGX rather than replacing it, brings VM-level isolation similar to AMD SEV but with Intel’s attestation infrastructure. ARM is also moving into the server space with CCA (Confidential Compute Architecture).

What excites me most is the convergence happening. Early confidential computing required choosing between application-level enclaves (high security, high friction) and VM-level encryption (lower friction, larger TCB). Newer technologies like Intel TDX and ARM CCA are finding middle ground with better isolation at the VM level while maintaining the ease of deployment.

We are also seeing confidential computing become a building block for privacy-preserving AI: training models on sensitive data without exposing it, running inference on inputs that are never visible in plaintext outside the TEE, and enabling federated learning with hardware-backed guarantees. The healthcare project I mentioned at the start of this article was just the beginning.

Confidential computing is not a silver bullet, and it is not something every workload needs. But for organizations handling regulated data, participating in multi-party data collaborations, or operating in environments where they cannot fully trust the infrastructure provider, it fills a real and important gap. It is the final piece of the data protection puzzle: rest, transit, and now use.
