In 2008, I had a conversation with a CTO that changed how I think about infrastructure economics. He asked me why their SaaS platform was losing money despite growing revenue. The answer was simple: they were running a separate database server for every customer. 340 customers, 340 PostgreSQL instances, 340 sets of backups, 340 things to patch. The operational cost was eating them alive.

That was my introduction to the multi-tenancy problem, and it’s one of the most consequential architecture decisions you’ll make if you’re building a cloud platform or SaaS product. Get it right and you get efficient operations with manageable costs. Get it wrong and you end up with either a security nightmare or an operational money pit.

What Multi-Tenancy Actually Means

Multi-tenancy means multiple customers (tenants) share the same infrastructure, platform, or application. Their data coexists in the same systems, but each tenant should only see and access their own data.

Every cloud service you use is multi-tenant. Your EC2 instance runs on a physical host shared with other AWS customers. Your RDS database runs on shared hardware. Your S3 buckets sit on the same storage infrastructure as millions of other accounts. The cloud’s economic model depends entirely on multi-tenancy.

The alternative, single-tenancy, means each customer gets dedicated infrastructure. Their own servers, their own databases, their own everything. This provides maximum isolation but at enormous cost. It’s the difference between an apartment building and a custom-built house. Both provide shelter, but the economics are fundamentally different.

The Multi-Tenancy Spectrum

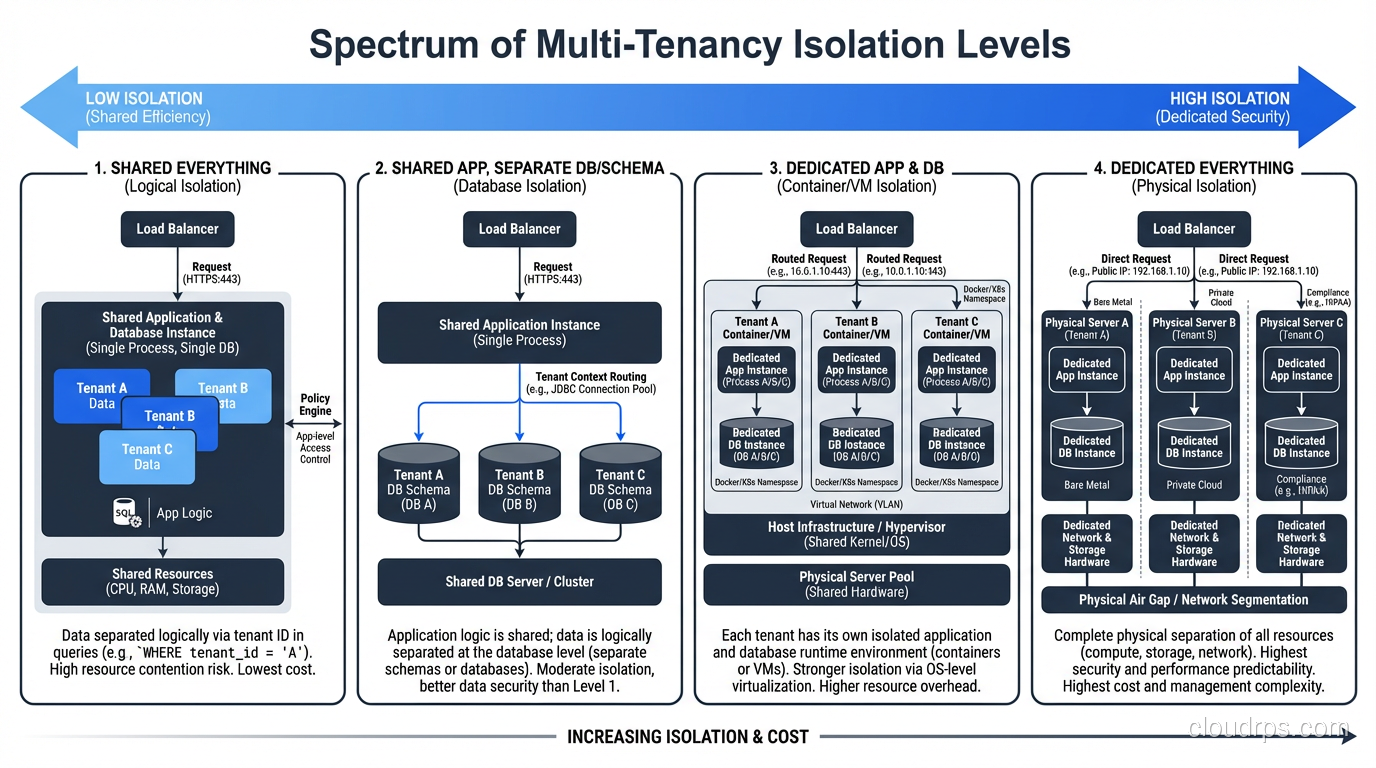

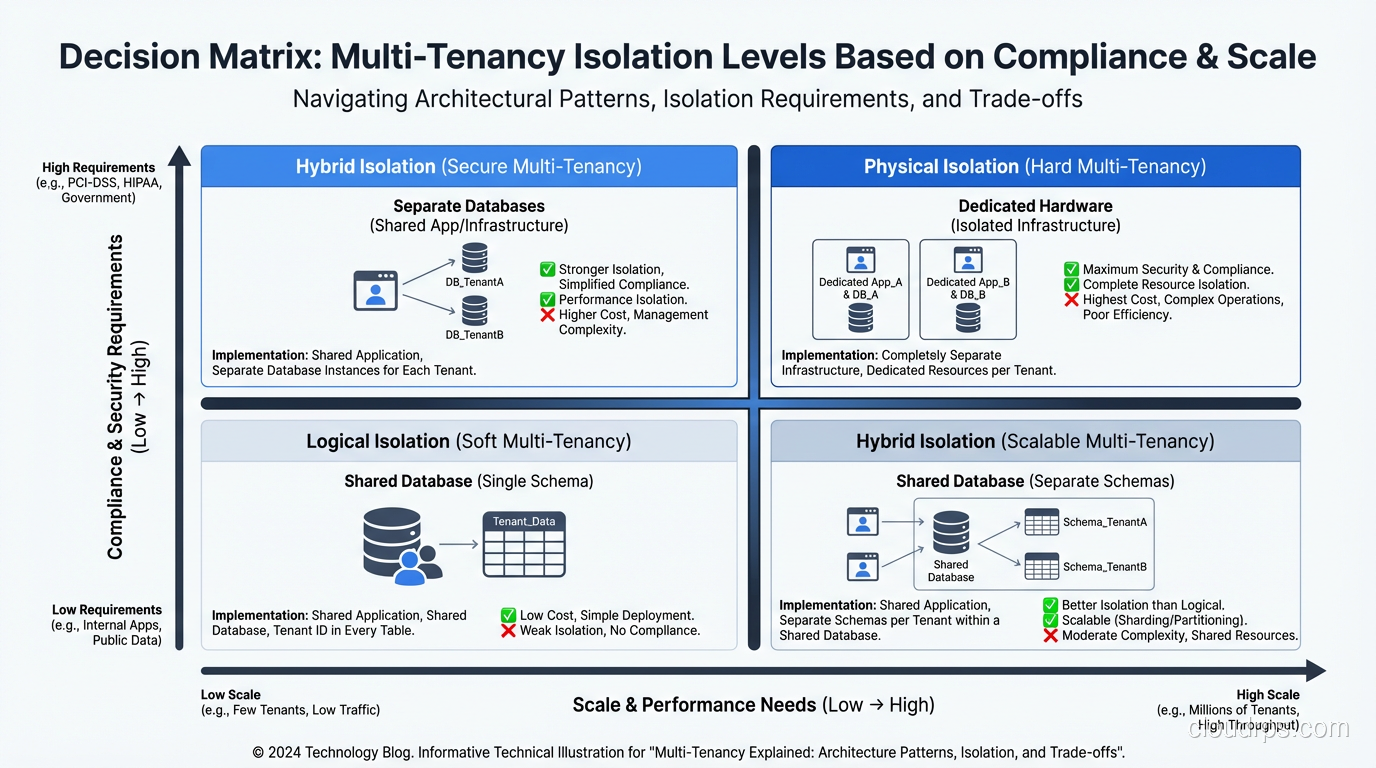

Multi-tenancy isn’t binary. There’s a spectrum of isolation levels, and understanding where you sit on that spectrum matters enormously.

Level 1: Shared Everything

All tenants share the same application instances, the same database, the same tables. Tenant separation is enforced at the application layer, typically through a tenant_id column on every table and middleware that filters queries.

Pros: Maximum resource efficiency. Minimum operational overhead. One database to back up, one application to deploy, one system to monitor.

Cons: One bug in your tenant filtering logic and Customer A sees Customer B’s data. I’ve seen this happen. More than once. It’s career-limiting for whoever wrote the broken query.
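One way to shrink the surface area for that kind of bug is to make the tenant filter impossible to forget: bind the tenant ID once, when the data-access object is created, and have every query go through it. The sketch below is illustrative only. It uses SQLite so it runs anywhere, and the `invoices` table, the `TenantScopedInvoices` class, and the `make_demo_db` helper are hypothetical names, not part of any system described here.

```python
import sqlite3


class TenantScopedInvoices:
    """Data-access wrapper that injects the tenant filter into every query."""

    def __init__(self, conn: sqlite3.Connection, tenant_id: str):
        if not tenant_id:
            raise ValueError("tenant_id is required")
        self._conn = conn
        self._tenant_id = tenant_id

    def add(self, amount_cents: int) -> None:
        # The tenant ID comes from the wrapper, never from the caller.
        self._conn.execute(
            "INSERT INTO invoices (tenant_id, amount_cents) VALUES (?, ?)",
            (self._tenant_id, amount_cents),
        )

    def list_amounts(self) -> list[int]:
        rows = self._conn.execute(
            "SELECT amount_cents FROM invoices WHERE tenant_id = ? ORDER BY id",
            (self._tenant_id,),
        )
        return [row[0] for row in rows]


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one shared, tenant-keyed table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE invoices ("
        "id INTEGER PRIMARY KEY, "
        "tenant_id TEXT NOT NULL, "
        "amount_cents INTEGER NOT NULL)"
    )
    return conn
```

Request-handling code would build one `TenantScopedInvoices` per request from the authenticated tenant and never touch the raw connection. This narrows the problem but does not eliminate it: any query written outside the wrapper is still unfiltered.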

Level 2: Shared Application, Separate Databases

All tenants share the same application tier, but each tenant gets their own database (or schema). The application routes requests to the appropriate database based on tenant identity.

Pros: Stronger data isolation. A rogue query can’t accidentally cross tenant boundaries at the database level. Easier to comply with data residency requirements.

Cons: More databases to manage. Connection pooling gets complicated. Database migrations need to be applied to every tenant database. Schema drift becomes a real problem if you’re not disciplined.
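The routing step can be sketched as a small lookup that maps tenant identity to that tenant’s connection. This is a minimal illustration under stated assumptions: SQLite stands in for PostgreSQL so the example is runnable, `TenantDatabaseRouter` is a hypothetical name, and a production version would hold a bounded connection pool per tenant rather than a single connection.

```python
import sqlite3


class TenantDatabaseRouter:
    """Maps a tenant ID to that tenant's own database connection."""

    def __init__(self, dsn_by_tenant: dict[str, str]):
        self._dsn_by_tenant = dict(dsn_by_tenant)
        self._connections: dict[str, sqlite3.Connection] = {}

    def connection_for(self, tenant_id: str) -> sqlite3.Connection:
        if tenant_id not in self._dsn_by_tenant:
            # Fail closed: never fall back to a default or shared database.
            raise KeyError(f"unknown tenant: {tenant_id!r}")
        if tenant_id not in self._connections:
            # Connect lazily so idle tenants cost nothing.
            dsn = self._dsn_by_tenant[tenant_id]
            self._connections[tenant_id] = sqlite3.connect(dsn)
        return self._connections[tenant_id]
```

The important design choice is the failure mode: an unrecognized tenant raises an error instead of silently landing in someone else’s database.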

Level 3: Shared Infrastructure, Separate Stacks

Each tenant gets their own application instances and database, but they run on shared infrastructure (shared Kubernetes cluster, shared network, shared management plane).

Pros: Strong isolation at the application level. One tenant’s performance issues don’t directly affect others. Easier to customize per tenant.

Cons: Significant operational overhead. You’re essentially running N copies of your application. Deployments multiply. Monitoring complexity increases linearly with tenant count.

Level 4: Dedicated Infrastructure

Each tenant gets dedicated infrastructure: their own VMs, their own network segments, potentially their own AWS accounts. Maximum isolation.

Pros: Strongest possible isolation. Complete blast radius containment. Meets the strictest compliance requirements.

Cons: Terrible economics. Doesn’t scale operationally. This is the 340-database-servers situation from my story.

The Isolation vs. Efficiency Trade-Off

Here’s the core tension: more isolation means less efficiency. This isn’t a problem to solve; it’s a trade-off to navigate.

When I advise clients on multi-tenancy strategy, I start with three questions:

What are your compliance requirements? Some regulations (HIPAA, FedRAMP, certain financial services rules) have specific requirements about data isolation. These requirements aren’t negotiable. They set a floor for your isolation level.

What’s your tenant profile? If all your tenants are similar in size and usage patterns, shared-everything works well. If you have a mix of small tenants and whale tenants who process 1000x more data, you need isolation to prevent noisy-neighbor problems.

What’s your operational capacity? More isolation means more things to manage. If you have a two-person ops team, running dedicated infrastructure per tenant will break them.

The right answer is usually somewhere in the middle of the spectrum, and it often varies by component. I’ve built systems where the application tier was fully shared but the database was per-tenant, or where small tenants shared infrastructure while enterprise tenants got dedicated deployments.

The Noisy Neighbor Problem

This is the most common multi-tenancy issue I deal with, and it’s directly related to how virtualization and hypervisors manage shared resources.

In a shared-everything architecture, one tenant’s behavior affects all tenants. A tenant running a massive report can consume database CPU and slow down everyone. A tenant receiving a traffic spike can saturate application server resources. A tenant with a runaway process can exhaust memory.

The cloud providers deal with this at the infrastructure level through resource isolation in the hypervisor. But at the application level, you have to build your own guardrails:

Rate limiting: Cap the number of API calls per tenant per time period. This is table stakes. If you’re not rate limiting, you’re one bad actor away from a platform-wide outage.

Resource quotas: Limit how much storage, compute, or database capacity each tenant can consume. Hard limits prevent any single tenant from monopolizing shared resources.

Tenant-aware scheduling: For batch processes, use fair-share scheduling that prevents one tenant’s workload from starving others.

Performance isolation: In the database, this might mean connection limits per tenant, query timeout enforcement, or separate connection pools. At the application tier, it might mean separate thread pools or worker processes per tenant.
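As a concrete illustration of the first guardrail, here is a minimal per-tenant token bucket. It is a single-process sketch, not a production limiter: the `TenantRateLimiter` name and its parameters are assumptions for the example, and a real deployment would typically keep the buckets in a shared store at the API gateway so that all instances see the same counts.

```python
import time
from dataclasses import dataclass


@dataclass
class _Bucket:
    tokens: float
    updated_at: float


class TenantRateLimiter:
    """Token bucket per tenant: `rate` tokens per second, up to `burst` stored."""

    def __init__(self, rate: float, burst: int, clock=time.monotonic):
        self._rate = rate
        self._burst = burst
        self._clock = clock  # injectable so tests can control time
        self._buckets: dict[str, _Bucket] = {}

    def allow(self, tenant_id: str) -> bool:
        now = self._clock()
        # A tenant seen for the first time starts with a full bucket.
        bucket = self._buckets.setdefault(
            tenant_id, _Bucket(float(self._burst), now)
        )
        # Refill for the time elapsed since the last call, capped at burst.
        elapsed = now - bucket.updated_at
        bucket.tokens = min(self._burst, bucket.tokens + elapsed * self._rate)
        bucket.updated_at = now
        if bucket.tokens >= 1.0:
            bucket.tokens -= 1.0
            return True
        return False
```

Because each tenant has its own bucket, one tenant exhausting its allowance has no effect on whether another tenant’s requests are admitted.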

I once had a SaaS platform where a single tenant started running an export job that joined six tables with millions of rows. No limit on query complexity. The query consumed 100% of database CPU for 45 minutes. Every other tenant experienced timeouts. We implemented query complexity analysis and per-tenant CPU quotas the next sprint.
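For batch work like that export job, the tenant-aware scheduling guardrail can be as simple as rotating between tenants instead of draining one global queue. The following is a rough sketch of round-robin fair sharing; `FairShareQueue` is a hypothetical name, and it deliberately ignores job cost, priorities, and concurrency.

```python
from collections import OrderedDict, deque


class FairShareQueue:
    """Round-robin across tenants so one large backlog cannot starve the rest."""

    def __init__(self):
        # Insertion order of this dict is the current rotation order.
        self._queues: "OrderedDict[str, deque]" = OrderedDict()

    def submit(self, tenant_id: str, job) -> None:
        self._queues.setdefault(tenant_id, deque()).append(job)

    def next_job(self):
        """Return (tenant_id, job) for the tenant at the front, or None if idle."""
        if not self._queues:
            return None
        tenant_id, jobs = self._queues.popitem(last=False)
        job = jobs.popleft()
        if jobs:
            # Tenant still has work: send it to the back of the rotation.
            self._queues[tenant_id] = jobs
        return tenant_id, job
```

If one tenant submits three jobs and another then submits one, the second tenant’s job runs after the first tenant’s first job rather than after all three.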

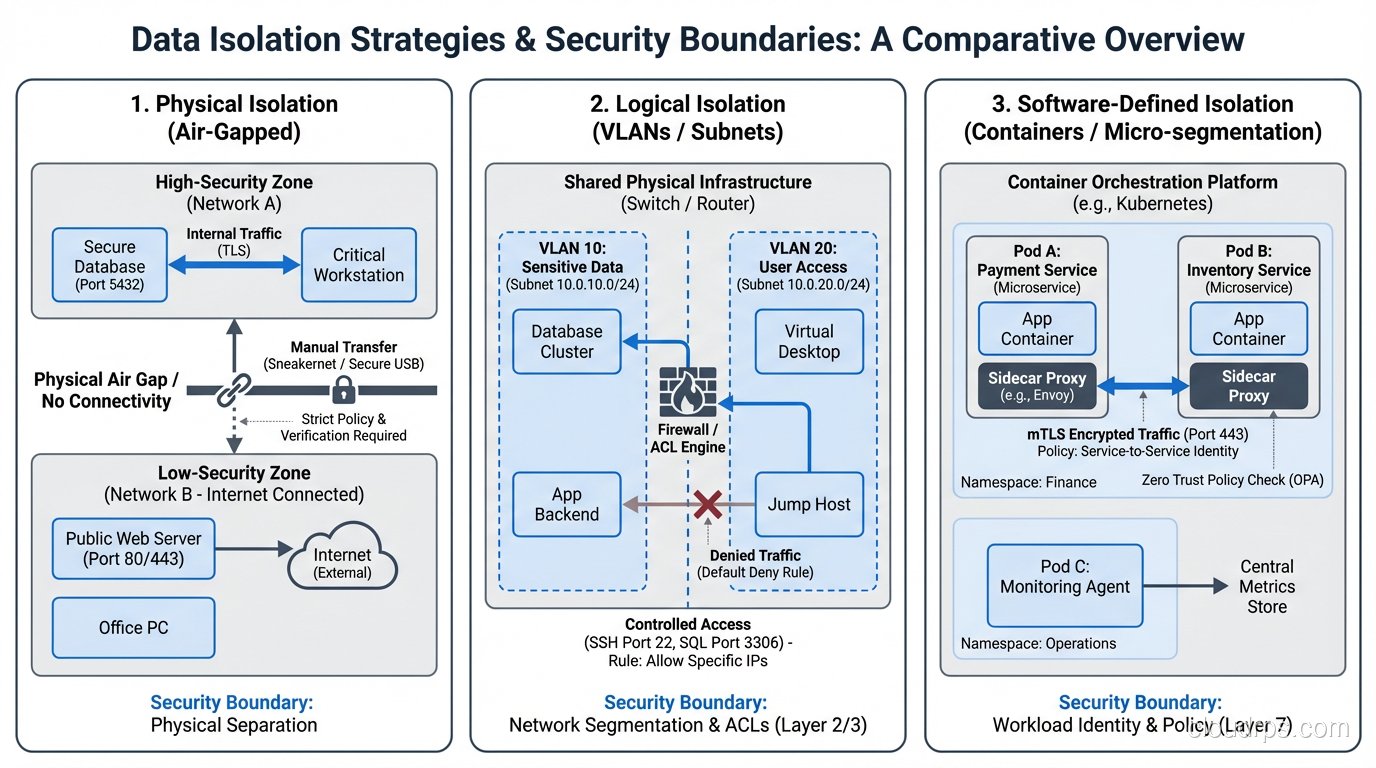

Data Isolation Patterns

Data isolation is where the stakes are highest. A performance issue is embarrassing. A data leak is existential.

Row-Level Security

In the shared-database model, every row has a tenant identifier. All queries include a tenant filter. PostgreSQL’s Row Level Security (RLS) feature can enforce this at the database level, providing a safety net beyond application-layer filtering.

I strongly recommend database-level enforcement (RLS or equivalent) as a backstop, even if your application layer handles tenant filtering. Defense in depth. Application bugs happen. ORM query builders can produce unexpected SQL. Having the database enforce tenant boundaries catches mistakes that application-level filtering misses.
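To make the backstop concrete, the helper below generates the PostgreSQL statements that turn on RLS for a table and add a tenant policy. Treat it as a sketch under assumptions: it presumes a `tenant_id` column of type `uuid`, a custom session setting named `app.current_tenant`, and a policy named `tenant_isolation`, none of which come from a specific system described here. Note also that superusers and roles with the `BYPASSRLS` attribute are not subject to policies, so the application should connect as an ordinary role.

```python
import re

# Conservative check for a plain, unquoted SQL identifier.
_IDENTIFIER = re.compile(r"[a-z_][a-z0-9_]*")


def rls_statements(table: str) -> list[str]:
    """Build PostgreSQL DDL that restricts `table` to the current tenant's rows."""
    if not _IDENTIFIER.fullmatch(table):
        raise ValueError(f"unsafe table name: {table!r}")
    return [
        f"ALTER TABLE {table} ENABLE ROW LEVEL SECURITY",
        # FORCE makes the policy apply to the table owner as well.
        f"ALTER TABLE {table} FORCE ROW LEVEL SECURITY",
        f"CREATE POLICY tenant_isolation ON {table} "
        "USING (tenant_id = current_setting('app.current_tenant')::uuid)",
    ]


# Run at the start of each transaction with the tenant ID as a bound parameter.
# The third argument (true) scopes the setting to the current transaction.
SET_TENANT_SQL = "SELECT set_config('app.current_tenant', %s, true)"
```

With this in place, a query that forgets its `WHERE tenant_id = ...` clause returns only the current tenant’s rows, and a transaction that never sets the tenant fails instead of returning everything, because `current_setting` raises an error for an unset parameter.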

Schema Isolation

Each tenant gets their own database schema within a shared database instance. Application code switches schemas based on tenant context. This provides stronger isolation than row-level security: schemas are logical namespaces rather than physical boundaries, but as long as each tenant’s database role is granted access only to its own schema, a query running for Tenant A cannot reach Tenant B’s tables.

The operational trade-off is schema management. Every migration runs N times. Schema drift between tenants becomes possible if migrations fail partially.
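The “runs N times” problem and the resulting drift can be sketched with a runner that tracks a version per tenant schema and reports who fell behind. This is an illustrative model only: `migrate_all` and `drifted` are hypothetical helpers, each migration is represented as a plain callable taking the schema name, and a real runner would execute SQL inside a transaction per tenant.

```python
from typing import Callable


def migrate_all(
    versions: dict[str, int],
    migrations: list[Callable[[str], None]],
) -> dict[str, int]:
    """Apply pending migrations to each tenant schema; return the new versions.

    `versions` maps tenant schema -> number of migrations already applied.
    A failure stops that tenant only, leaving its version at the last success.
    """
    result = dict(versions)
    for tenant, applied in versions.items():
        for index in range(applied, len(migrations)):
            try:
                migrations[index](tenant)
            except Exception:
                break  # this tenant stays behind; surface it via drifted()
            result[tenant] = index + 1
    return result


def drifted(versions: dict[str, int], target: int) -> list[str]:
    """Tenants whose schema version is behind the target version."""
    return sorted(t for t, v in versions.items() if v < target)
```

Running `drifted` after every migration batch, and alerting when the list is non-empty, turns silent schema drift into a visible operational signal.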

Database Isolation

Each tenant gets their own database instance. Maximum data isolation, clear blast radius, easy to reason about. But you need automation to manage the fleet: provisioning, patching, backups, monitoring, all multiplied by tenant count.

Encryption Isolation

Regardless of your tenancy model, encrypt data at rest with per-tenant keys. If each tenant’s data is encrypted with that tenant’s own key, and the key is selected from the authenticated tenant’s identity, then even a catastrophic application bug that bypasses tenant filtering while serving Tenant A returns Tenant B’s data only as ciphertext that Tenant A’s key cannot decrypt.

This is one of my non-negotiable recommendations. Per-tenant encryption keys add complexity but provide a last line of defense that has saved me more than once during security assessments.
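One possible way to obtain per-tenant keys is to derive them from a master key with HKDF (RFC 5869), using the tenant ID as the context input. The sketch below shows only the derivation step, using the standard library; the `derive_tenant_key` name and the `tenant-data-key:` label are assumptions for illustration. It is not the only approach: a managed key service holding an independent key per tenant is a common alternative, and derived keys are only as safe as the master key they come from. The actual encryption should use an authenticated cipher from a vetted library, which is not shown here.

```python
import hashlib
import hmac


def derive_tenant_key(master_key: bytes, tenant_id: str, salt: bytes = b"") -> bytes:
    """Derive a 32-byte per-tenant key with HKDF-SHA256 (RFC 5869).

    The tenant ID is part of the HKDF `info` input, so each tenant
    gets a distinct key from the same master key.
    """
    if len(master_key) < 32:
        raise ValueError("master key should be at least 32 bytes")
    # Extract: an empty salt is treated as a block of zero bytes, per the RFC.
    prk = hmac.new(salt or b"\x00" * 32, master_key, hashlib.sha256).digest()
    # Expand: a single HMAC block covers a 32-byte output with SHA-256.
    info = b"tenant-data-key:" + tenant_id.encode("utf-8")
    return hmac.new(prk, info + b"\x01", hashlib.sha256).digest()
```

The derivation is deterministic, so keys never need to be stored per tenant, but that also means rotating the master key requires re-encrypting every tenant’s data.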

Network Isolation in Multi-Tenant Systems

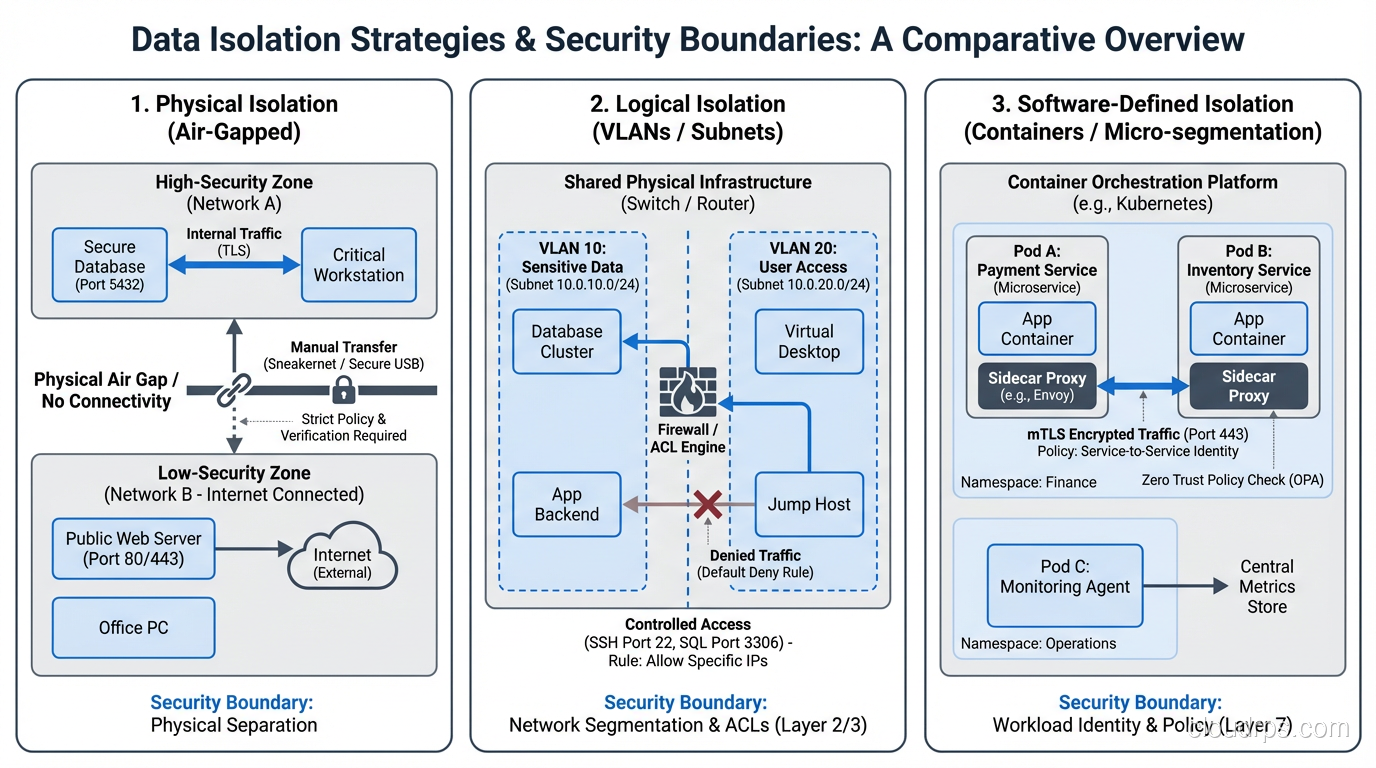

Network isolation is often overlooked in multi-tenant architectures, but it’s critical for security.

In cloud environments, you have several options:

Shared VPC with security groups: All tenants in the same virtual network, with security groups controlling traffic flow. Simple but limited: isolation depends entirely on rule discipline, and resources that share a group with a self-referencing rule (as the default group has) can reach each other freely.

Separate subnets per tenant: Each tenant gets their own subnet within a shared VPC. Network ACLs can restrict inter-tenant traffic. Better isolation, manageable complexity.

Separate VPCs per tenant: Maximum network isolation. Each tenant’s infrastructure exists in its own virtual network with no connectivity to other tenants. This is the nuclear option: very strong isolation, very high operational overhead.

Service mesh with mTLS: In microservices architectures, a service mesh can enforce tenant-level network policies with mutual TLS between services. This provides fine-grained, identity-based network isolation without the overhead of separate networks.

Operational Patterns for Multi-Tenant Systems

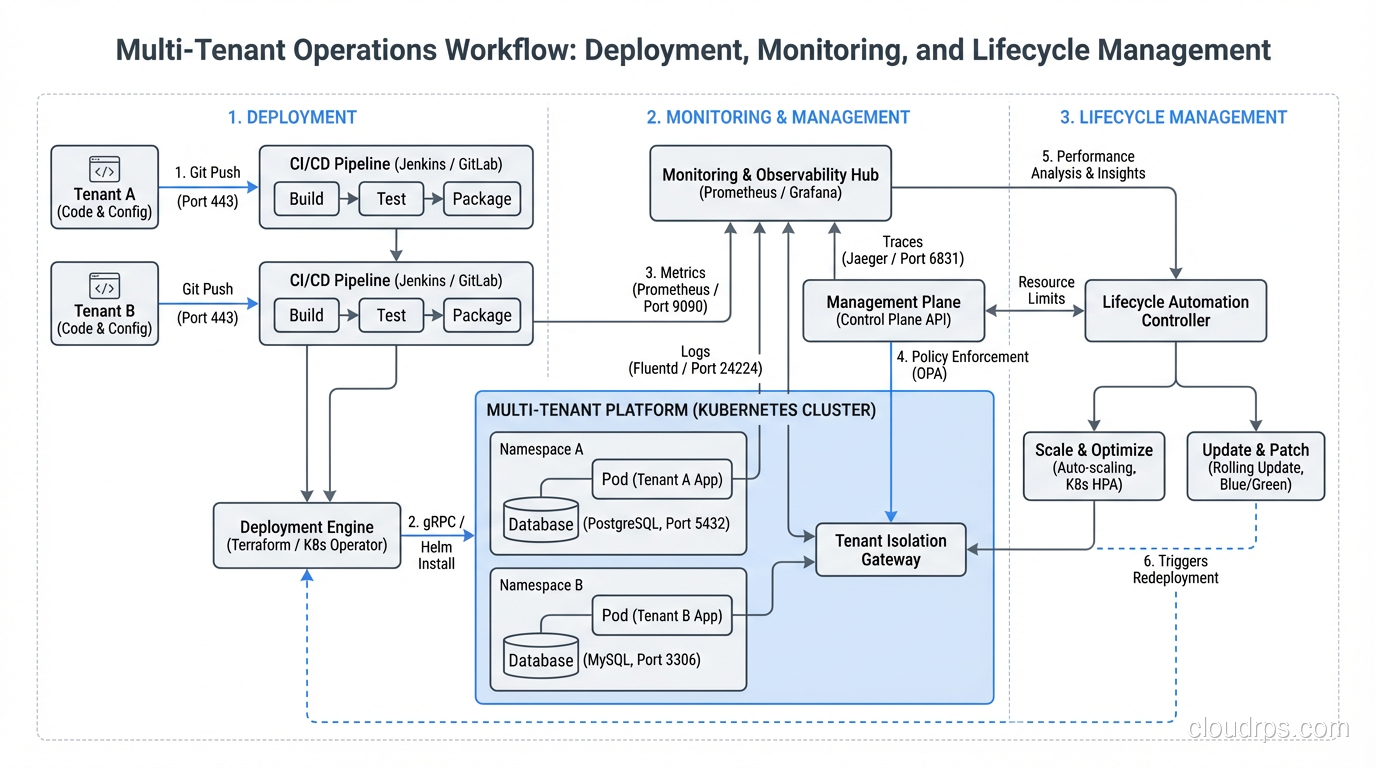

Deployment Strategies

In a shared-everything architecture, you deploy once and all tenants get the update. Fast, simple, but risky since a bad deployment affects everyone simultaneously.

In per-tenant deployment models, you can use canary deployments: update a few tenants first, validate, then roll out to the rest. This is safer but operationally complex.

The pattern I’ve landed on for most systems: shared infrastructure with tenant-aware feature flags. Deploy the same code to all tenants, but use feature flags to enable new functionality per tenant. This gives you the operational simplicity of shared deployments with the safety of gradual rollout.
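A tenant-aware flag check along these lines might look like the following. This is a minimal sketch, not a description of any particular flag service: `FeatureFlag` and its fields are hypothetical, and the percentage rollout uses a hash so that each tenant lands in a stable bucket across requests and processes.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class FeatureFlag:
    name: str
    enabled_tenants: set[str] = field(default_factory=set)  # explicit opt-ins
    rollout_percent: int = 0  # 0-100, share of the remaining tenants

    def is_enabled(self, tenant_id: str) -> bool:
        if tenant_id in self.enabled_tenants:
            return True
        # Hash flag name + tenant so each tenant gets a stable bucket 0-99.
        digest = hashlib.sha256(f"{self.name}:{tenant_id}".encode()).digest()
        bucket = int.from_bytes(digest[:4], "big") % 100
        return bucket < self.rollout_percent
```

A rollout would typically start with a handful of tenants in `enabled_tenants`, then raise `rollout_percent` in steps while watching per-tenant metrics. Including the flag name in the hash keeps the same tenants from always being first in line for every new feature.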

Monitoring and Alerting

Your monitoring must be tenant-aware. Global averages hide problems. If 99 tenants are fine and 1 tenant is experiencing 100% error rates, your global error rate looks great at ~1% (assuming the tenants send similar traffic volumes). But that one tenant is having a terrible day.

Break down every metric by tenant. Alert on per-tenant thresholds, not just global ones. This requires more monitoring infrastructure but catches problems that global monitoring misses entirely.
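The arithmetic behind that is easy to check. The sketch below computes the global and per-tenant error rates from raw counts and lists the tenants over a threshold; the function names and the shape of the input are assumptions made for the example, not the API of any monitoring tool.

```python
def error_rates(
    counts: dict[str, tuple[int, int]],
) -> tuple[float, dict[str, float]]:
    """`counts` maps tenant -> (requests, errors).

    Returns the global error rate and a per-tenant breakdown.
    """
    total_requests = sum(r for r, _ in counts.values())
    total_errors = sum(e for _, e in counts.values())
    per_tenant = {t: (e / r if r else 0.0) for t, (r, e) in counts.items()}
    global_rate = total_errors / total_requests if total_requests else 0.0
    return global_rate, per_tenant


def tenants_over(per_tenant: dict[str, float], threshold: float) -> list[str]:
    """Tenants whose own error rate exceeds the alert threshold."""
    return sorted(t for t, rate in per_tenant.items() if rate > threshold)
```

With 99 healthy tenants and one failing tenant at 1,000 requests each, the global rate is 1,000 errors out of 100,000 requests, or 1%. A global alert set at 5% stays silent, while a per-tenant alert at the same threshold flags the failing tenant immediately.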

Tenant Onboarding and Offboarding

Automating tenant lifecycle management is critical. Provisioning a new tenant should be an API call, not a manual process. If it takes your team a day to onboard a new customer, your architecture is wrong for multi-tenancy.

Offboarding is equally important and often neglected. When a tenant leaves, you need to cleanly remove their data, revoke their access, reclaim their resources, and ensure no traces of their data remain in caches, logs, or backups.

Real-World Multi-Tenancy: A Case Study

Let me walk through a real architecture decision I made for a B2B SaaS platform serving approximately 2,000 tenants.

Application tier: Shared Kubernetes cluster. All tenants hit the same API pods. Tenant identification through JWT tokens. Rate limiting per tenant at the API gateway.

Database tier: Tiered approach. Small tenants (< 1GB data) shared a PostgreSQL database with row-level security. Medium tenants (1-50GB) got their own schemas. Enterprise tenants (> 50GB or compliance requirements) got dedicated RDS instances.

Caching tier: Shared Redis cluster with key prefixing by tenant ID. TTLs and memory limits enforced per tenant.

Object storage: Shared S3 bucket with prefix-based organization (s3://bucket/tenant-id/…). Bucket policies prevented cross-tenant access at the S3 level.

Networking: Shared VPC for standard tenants. Dedicated VPCs with VPC peering for enterprise tenants requiring network isolation.

This hybrid approach let us serve roughly 1,800 small and medium tenants on shared infrastructure (efficient) while giving 200 enterprise tenants the isolation they required (compliant). Operational overhead was manageable because the shared tier required almost no per-tenant management, and the enterprise tier was automated through Infrastructure as Code.
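The database tiering rule from this case study is simple enough to express as a function, which is roughly how a provisioning pipeline would consume it. This is a sketch of the rule as stated above, not the original implementation: `DbTier` and `choose_db_tier` are hypothetical names, and treating 1 GB as 1024³ bytes is an assumption.

```python
from enum import Enum

GB = 1024 ** 3  # assumption: binary gigabytes


class DbTier(Enum):
    SHARED_RLS = "shared database with row-level security"
    OWN_SCHEMA = "dedicated schema"
    DEDICATED_INSTANCE = "dedicated instance"


def choose_db_tier(data_bytes: int, needs_compliance_isolation: bool) -> DbTier:
    """Apply the size and compliance thresholds from the case study."""
    # Compliance requirements override size: they set the isolation floor.
    if needs_compliance_isolation or data_bytes > 50 * GB:
        return DbTier.DEDICATED_INSTANCE
    if data_bytes >= 1 * GB:
        return DbTier.OWN_SCHEMA
    return DbTier.SHARED_RLS
```

Encoding the rule this way also gives you a natural trigger for tier migrations: re-evaluate it periodically and queue a move when a tenant’s result changes.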

The Bottom Line

Multi-tenancy is about navigating the tension between efficiency and isolation. There’s no universally right answer. The right architecture depends on your tenants, your compliance requirements, your team’s capacity, and your economics.

What I can tell you from building multi-tenant systems for twenty years: start with the strongest isolation you can afford operationally, and weaken it only when you have data showing it’s necessary for efficiency. It’s much easier to relax isolation constraints than to tighten them after a data leak.

Build tenant-awareness into every layer from day one. Retrofitting multi-tenancy into a single-tenant system is one of the most painful architectural migrations I’ve ever been part of. And I’ve been part of several. Save yourself the pain and design for it upfront.
