In 2013, a Google engineer named Jim Roskind prototyped a new transport protocol inside Chrome. The goal was to make the web faster without waiting for the IETF to standardize anything. Google called it QUIC and shipped it to Chrome users before most of the industry had heard of it. By 2017, QUIC carried a large share of Google's traffic; Google's paper on QUIC that year put it at over 30% of the company's egress traffic. In 2021, the IETF published QUIC as a standard (RFC 9000). Today, by some measurements, around 30% of web traffic uses HTTP/3, the version of HTTP that runs on QUIC.

This is not a small iterative improvement. QUIC rethinks how the transport layer works for the web. It replaces TCP with a new transport built on UDP, folds TLS from a separate layer into the protocol itself, and removes a form of head-of-line blocking that has long limited HTTP performance. Understanding QUIC makes you a better infrastructure engineer because it changes how you think about latency, connection management, and what you can and cannot fix at the CDN or proxy layer.

The Problem QUIC Was Built to Solve

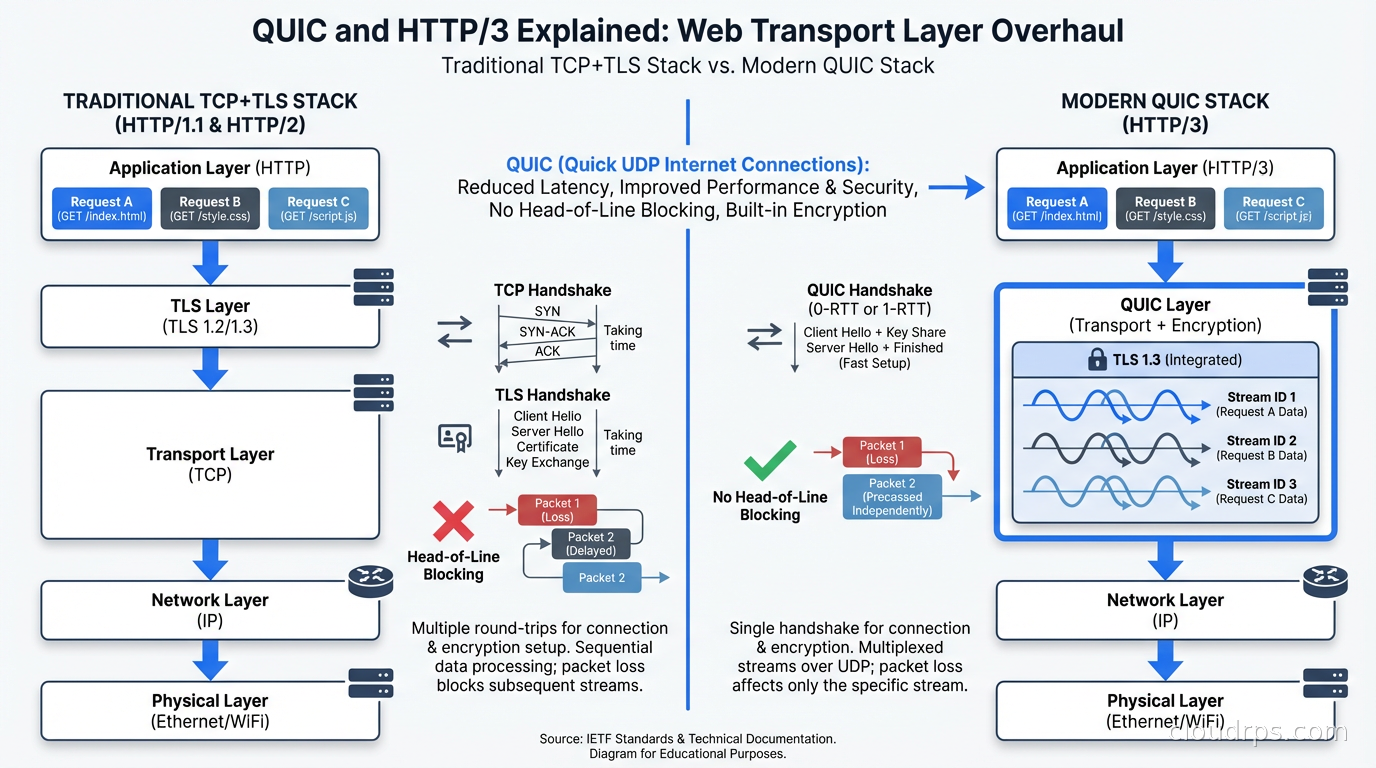

To understand QUIC, you need to understand what is wrong with TCP in the context of modern web applications. The core issue is head-of-line blocking, and it is more insidious than most people realize.

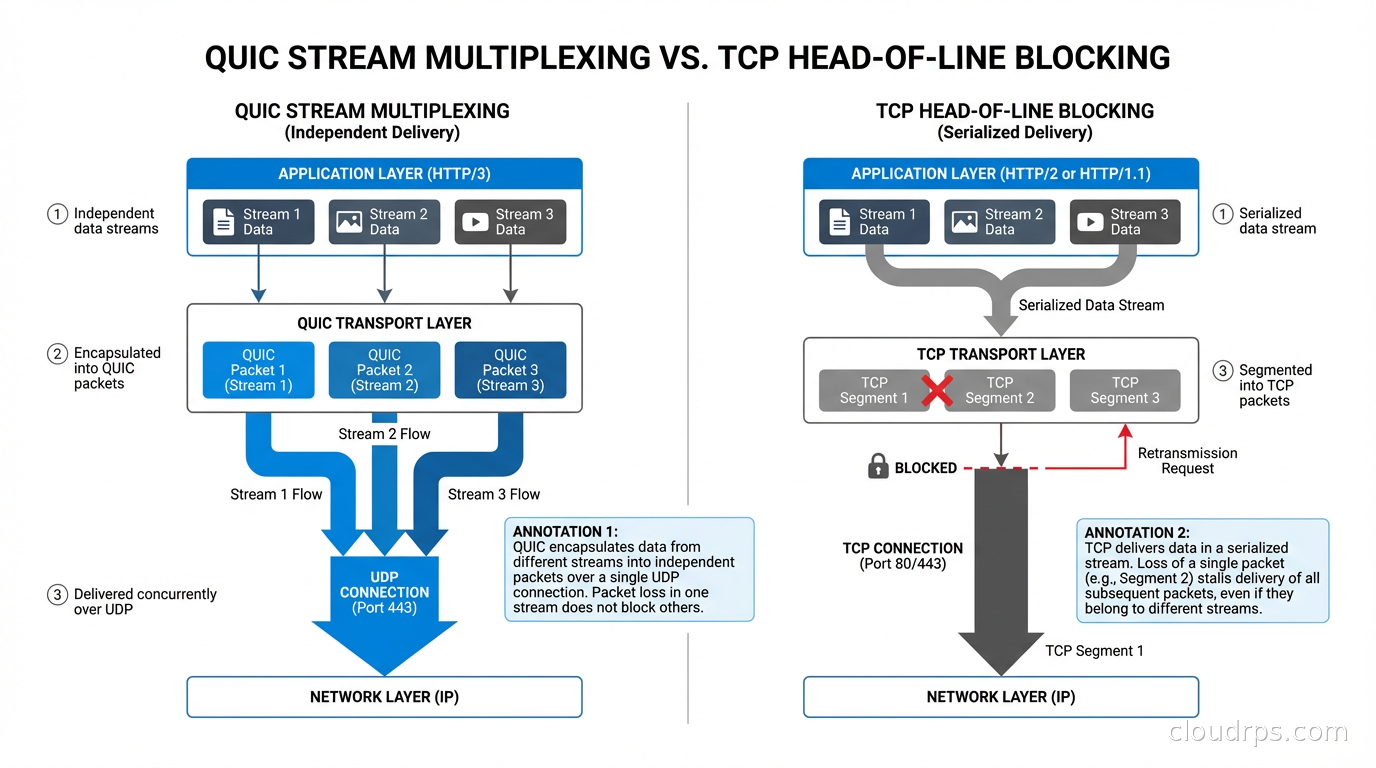

HTTP/2 was supposed to fix the performance problems of HTTP/1.1. In practice, HTTP/1.1 needed a separate TCP connection for each concurrent request, which led to workarounds like domain sharding and connection pooling. HTTP/2 introduced multiplexing: multiple request-response pairs share a single TCP connection, interleaved as frames. This cut the per-connection overhead and made better use of bandwidth.

But HTTP/2 multiplexing has a hidden flaw. When you are multiplexing multiple streams over a single TCP connection, TCP treats the entire connection as one ordered byte stream. TCP guarantees in-order delivery. If a packet gets lost, TCP stops delivering data to the application until the lost packet is retransmitted and received. All streams stall, even the ones that have nothing to do with the lost packet.

This is TCP head-of-line blocking. On a reliable network, it is invisible. On a lossy network, which describes most mobile connections and a significant fraction of wifi connections, it means that losing one packet degrades all concurrent requests. The application is blocked at the TCP layer waiting for retransmission of data it may not even need right now.

The fix requires moving out of TCP. QUIC runs over UDP, which provides no reliability guarantees at all, and implements its own loss recovery on top. Crucially, QUIC guarantees in-order delivery per stream rather than for the whole connection. Each stream's ordering is independent, so a lost packet stalls only the streams whose data it carried. Other streams continue unaffected.
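To make the difference concrete, here is a toy model. It is a sketch under simplifying assumptions: each packet carries data for exactly one stream, the arrival times are invented, the lost packet is represented simply by a late arrival time standing in for its retransmission, and flow control and congestion control are ignored. The only thing it models is the delivery rule: TCP releases bytes to the application in connection order, QUIC in per-stream order.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Packet:
    seq: int        # position in the sender's overall transmission order
    stream: int     # multiplexed stream whose data this packet carries
    arrival: float  # ms when the receiver finally holds it (after any retransmission)


def completion_times(packets: List[Packet], per_stream_ordering: bool) -> Dict[int, float]:
    """Time at which each stream's data can be fully delivered to the application."""
    ordered_until: Dict[int, float] = {}  # ordering domain -> latest in-order delivery time
    done: Dict[int, float] = {}
    for p in sorted(packets, key=lambda p: p.seq):
        # TCP: one ordering domain for the whole connection.
        # QUIC: one ordering domain per stream.
        domain = p.stream if per_stream_ordering else 0
        ready = max(ordered_until.get(domain, 0.0), p.arrival)
        ordered_until[domain] = ready
        done[p.stream] = max(done.get(p.stream, 0.0), ready)
    return done


# Three streams interleaved round-robin; packet 0 (stream 1) is lost and
# its retransmission only arrives at t=60 ms.
arrivals = [60.0, 11.0, 12.0, 13.0, 14.0, 15.0]
packets = [Packet(seq=i, stream=1 + i % 3, arrival=t) for i, t in enumerate(arrivals)]

print("TCP-style: ", completion_times(packets, per_stream_ordering=False))
print("QUIC-style:", completion_times(packets, per_stream_ordering=True))
```

With connection-wide ordering, all three streams finish at 60 ms. With per-stream ordering, only stream 1 waits for the retransmission, and streams 2 and 3 finish at 14 ms and 15 ms.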

This is not a subtle difference. On connections with 2% packet loss (not uncommon on mobile), HTTP/2 over TLS over TCP can be significantly slower than HTTP/1.1 with its several parallel connections, because head-of-line blocking on the single connection hurts more than multiplexing helps. QUIC removes this cross-stream stall: a loss delays only the streams whose data was in the lost packet.

The Architecture of a QUIC Connection

QUIC is not just UDP with reliability bolted on. The protocol redesigns the transport layer from scratch with several innovations that matter for performance.

Integrated TLS 1.3: In traditional HTTPS, you have a TCP three-way handshake (one round trip before the client can send data), then a TLS 1.3 handshake (one more round trip), and only then can you send your first HTTP request. That is two round trips before the request leaves the client, and three before the first byte of the response arrives. QUIC combines the transport and TLS handshakes, so a new QUIC connection needs only one round trip before the client can send its request. QUIC also makes TLS mandatory: encryption is built into the protocol, and there is no unencrypted QUIC.

The relationship between QUIC and TLS is worth understanding clearly. QUIC is not layered on top of TLS the way HTTP is layered on TLS over TCP. Instead, QUIC carries the TLS 1.3 handshake messages in its own frames and uses the keys that handshake produces to encrypt QUIC packets. The TLS record layer is replaced by QUIC's own framing. This is a tighter integration than the layered TCP+TLS model, and it is what lets QUIC encrypt most of its own transport headers, a direct answer to the ossification problem discussed below.

0-RTT resumption: For servers you have connected to before, QUIC supports 0-RTT resumption. Using keys derived from the earlier session, the client sends application data in its first flight of packets, before receiving anything from the server, and the server can process that data immediately (or decline it). This removes the handshake round trip for repeat connections, so a request costs only the one round trip needed to get the response back.
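The round-trip arithmetic for the three setups can be written down directly. This sketch counts only handshake round trips plus one round trip for the request and response; it ignores DNS lookup, server processing time, TCP Fast Open, and packet loss, and the 100 ms RTT is an arbitrary example value.

```python
# Round trips spent on connection setup before the client can send its request.
HANDSHAKE_RTTS = {
    "tcp+tls1.3": 2,  # TCP SYN/SYN-ACK (1 RTT), then the TLS 1.3 handshake (1 RTT)
    "quic": 1,        # QUIC transport and TLS 1.3 handshakes combined
    "quic-0rtt": 0,   # resumed connection: the request rides in the first flight
}


def time_to_first_response_byte(setup: str, rtt_ms: float) -> float:
    """Handshake round trips plus one round trip for the HTTP request itself."""
    return (HANDSHAKE_RTTS[setup] + 1) * rtt_ms


for setup in HANDSHAKE_RTTS:
    print(f"{setup:>11}: {time_to_first_response_byte(setup, rtt_ms=100):.0f} ms")
```

On a 100 ms mobile round trip, that is 300 ms, 200 ms, and 100 ms respectively before any server processing time is added.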

0-RTT has a security trade-off: early data is not protected against replay. An attacker who captures the client's first flight can resend it to the server, which may process it again. This is why 0-RTT is appropriate for idempotent requests (GET requests for web content) but should not be used for state-changing operations. Many servers and CDNs limit early data to safe requests or disable it by default; check how yours is configured rather than assuming.
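One common policy, sketched here, is to accept early data only for safe methods and answer anything else with 425 (Too Early), the status code RFC 8470 defines for this purpose; the client then retries once the handshake completes. RFC 8470 also defines an `Early-Data: 1` request header that an intermediary such as a CDN edge adds when it forwards a request it received as early data, which is how an origin behind the edge can tell. A server terminating QUIC itself would get this signal from its TLS stack instead. The function name and the plain mapping of headers are illustrative assumptions rather than any framework's API, and treating GET as replay-safe assumes your GET handlers truly have no side effects.

```python
from typing import Mapping, Optional

SAFE_METHODS = frozenset({"GET", "HEAD", "OPTIONS"})
TOO_EARLY = 425  # RFC 8470 status code


def early_data_rejection(method: str, headers: Mapping[str, str]) -> Optional[int]:
    """Return 425 if a request that arrived as 0-RTT data is unsafe to replay, else None."""
    # Header names are case-insensitive, so normalize before looking one up.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    arrived_early = normalized.get("early-data") == "1"
    if arrived_early and method.upper() not in SAFE_METHODS:
        return TOO_EARLY
    return None


print(early_data_rejection("GET", {"Early-Data": "1"}))   # None: safe to process now
print(early_data_rejection("POST", {"Early-Data": "1"}))  # 425: retry after the handshake
```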

Connection migration: TCP connections are identified by the four-tuple of source IP, source port, destination IP, destination port. When you switch from wifi to cellular on your phone, your source IP changes, and the TCP connection breaks. You have to reconnect, redo the TLS handshake, and restart any in-flight requests.

QUIC connections are identified by connection IDs: values chosen by the endpoints (each endpoint picks the IDs its peer uses to address it) that are independent of the network path. When you move from wifi to cellular, QUIC can migrate the connection to the new network path without breaking it. After the new path is validated, ongoing downloads, streaming video, and API calls can continue without a reconnect. This is called connection migration, and it is one of the features that makes QUIC compelling for mobile applications.
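The difference in how a server finds the connection for an incoming packet can be sketched with two lookup tables. This is a simplified model: the class and method names are hypothetical, the addresses come from documentation ranges, and a real QUIC server would also validate the new path (RFC 9000 uses PATH_CHALLENGE and PATH_RESPONSE frames for this) before sending much data on it. The client switching to a different server-issued connection ID when it moves mirrors RFC 9000's rule against reusing a connection ID from a new local address, which makes the old and new paths harder to link.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

FourTuple = Tuple[str, int, str, int]  # (src IP, src port, dst IP, dst port)


@dataclass
class Connection:
    label: str


class ConnectionTable:
    """Hypothetical server-side demultiplexer keeping both kinds of index."""

    def __init__(self) -> None:
        self.by_tuple: Dict[FourTuple, Connection] = {}
        self.by_cid: Dict[bytes, Connection] = {}

    def register(self, conn: Connection, peer: FourTuple, issued_cids: List[bytes]) -> None:
        self.by_tuple[peer] = conn
        for cid in issued_cids:  # every connection ID this server handed to the client
            self.by_cid[cid] = conn

    def lookup_tcp_style(self, peer: FourTuple) -> Optional[Connection]:
        return self.by_tuple.get(peer)

    def lookup_quic_style(self, dcid: bytes) -> Optional[Connection]:
        return self.by_cid.get(dcid)


table = ConnectionTable()
conn = Connection("video download")
wifi = ("192.0.2.10", 50000, "203.0.113.5", 443)
table.register(conn, wifi, issued_cids=[b"\x01" * 8, b"\x02" * 8])

# The phone moves to cellular: new source address and port, and it switches
# to a fresh connection ID that the server issued earlier.
cellular = ("198.51.100.20", 41000, "203.0.113.5", 443)
print(table.lookup_tcp_style(cellular))      # None: the 4-tuple no longer matches
print(table.lookup_quic_style(b"\x02" * 8))  # Connection(label='video download')
```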

Improved congestion control extensibility: TCP’s congestion control algorithm is implemented in the kernel. Changing it requires kernel updates, which are slow. QUIC implements congestion control in userspace. Google has been running BBR (Bottleneck Bandwidth and Round-trip propagation time) as QUIC’s default congestion algorithm in many deployments. The ability to iterate on congestion control without kernel changes accelerates experimentation. Our discussion of latency vs bandwidth tradeoffs provides the foundational concepts that congestion control algorithms try to optimize.
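Because the congestion controller lives in the same process as the rest of the QUIC stack, it can be an ordinary pluggable object. The interface below is a hypothetical sketch, not the API of any particular QUIC library. The example controller is a simplified NewReno-style window (the family RFC 9002 uses for QUIC's reference congestion controller), with a 1200-byte datagram size and a 10-datagram initial window as assumed parameters. It omits recovery periods, pacing, and persistent congestion. A BBR implementation would plug into the same slot.

```python
from abc import ABC, abstractmethod

MAX_DATAGRAM_SIZE = 1200  # assumed datagram size in bytes


class CongestionController(ABC):
    """Hypothetical plug-in interface a userspace QUIC stack might expose."""

    cwnd: int  # congestion window in bytes

    @abstractmethod
    def on_packets_acked(self, acked_bytes: int) -> None: ...

    @abstractmethod
    def on_congestion_event(self) -> None: ...


class SimpleNewReno(CongestionController):
    def __init__(self) -> None:
        self.cwnd = 10 * MAX_DATAGRAM_SIZE
        self.ssthresh = float("inf")

    def on_packets_acked(self, acked_bytes: int) -> None:
        if self.cwnd < self.ssthresh:
            self.cwnd += acked_bytes  # slow start: grow by the bytes acknowledged
        else:
            # congestion avoidance: about one datagram per window of acked data
            self.cwnd += MAX_DATAGRAM_SIZE * acked_bytes // self.cwnd

    def on_congestion_event(self) -> None:
        # halve the window, but never below two datagrams
        self.ssthresh = max(self.cwnd // 2, 2 * MAX_DATAGRAM_SIZE)
        self.cwnd = self.ssthresh


cc: CongestionController = SimpleNewReno()
cc.on_packets_acked(1200)   # slow start: 12000 -> 13200
cc.on_congestion_event()    # halve: 13200 -> 6600
cc.on_packets_acked(6600)   # congestion avoidance: 6600 -> 7800
print(cc.cwnd)              # 7800
```

Swapping algorithms means constructing a different `CongestionController` subclass; nothing in the kernel changes.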

HTTP/3: HTTP Semantics over QUIC

HTTP/3 is the mapping of HTTP semantics onto QUIC streams. If you understand HTTP/2, most of HTTP/3 will feel familiar: each request and its response share a stream, headers are compressed with a scheme called QPACK (the QUIC counterpart of HPACK), and methods, status codes, and headers keep the same semantics.

The main structural difference is that HTTP/3 delegates stream multiplexing to QUIC instead of interleaving its own frames on a single TCP byte stream. Each request-response pair gets its own QUIC stream, and because QUIC streams are delivered independently, the transport-level head-of-line blocking that affects HTTP/2 is gone.
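QUIC stream IDs encode who opened a stream and in which direction data flows: per RFC 9000, the two least significant bits distinguish client- from server-initiated and bidirectional from unidirectional streams. HTTP/3 sends each request and its response on a client-initiated bidirectional stream, so a client's requests land on stream IDs 0, 4, 8, and so on. The helper names below are illustrative.

```python
def describe_stream(stream_id: int) -> str:
    # Bit 0x1: 0 = client-initiated, 1 = server-initiated.
    initiator = "server" if stream_id & 0x1 else "client"
    # Bit 0x2: 0 = bidirectional, 1 = unidirectional.
    direction = "unidirectional" if stream_id & 0x2 else "bidirectional"
    return f"{initiator}-initiated {direction}"


def request_stream_ids(count: int) -> list:
    """IDs of the first `count` client-initiated bidirectional streams."""
    return [4 * n for n in range(count)]


for sid in request_stream_ids(3) + [2, 3]:
    print(sid, describe_stream(sid))
```

In HTTP/3, unidirectional streams such as 2 and 3 are used for things like the control stream and QPACK's encoder and decoder streams, each identified by a type value at the start of the stream.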

QPACK is worth a brief note. HTTP/2 uses HPACK for header compression, which maintains a shared compression table between client and server. This is stateful: the compression state for request 5 depends on the headers that were sent in requests 1 through 4. In HTTP/2, this works because frames on a TCP connection arrive in order. In HTTP/3 over QUIC, headers on different streams can arrive out of order. QPACK solves this by sending dynamic table updates on dedicated unidirectional streams, separate from the request streams, and having each header block declare which table entries it depends on, so only a stream that references a not-yet-received entry has to wait.
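The bookkeeping behind that rule can be modeled in a few lines. Each encoded header block carries a Required Insert Count (how many dynamic-table insertions it depends on), and the decoder tracks how many insertions it has received on the encoder stream. The class below is a hypothetical model of only that blocking logic, not a QPACK implementation: it stores no table entries. In the real protocol the decoder also advertises SETTINGS_QPACK_BLOCKED_STREAMS to cap how many streams may wait, and an encoder that wants to avoid blocking can reference only entries the decoder has already acknowledged.

```python
from typing import Dict, List


class QpackBlockingModel:
    """Tracks which request streams are waiting on dynamic-table inserts."""

    def __init__(self) -> None:
        self.insert_count = 0              # inserts received on the encoder stream
        self.blocked: Dict[int, int] = {}  # stream ID -> Required Insert Count

    def on_header_block(self, stream_id: int, required_insert_count: int) -> bool:
        """Return True if the header block can be decoded immediately."""
        if required_insert_count <= self.insert_count:
            return True
        self.blocked[stream_id] = required_insert_count
        return False

    def on_encoder_insert(self) -> List[int]:
        """Apply one insert from the encoder stream; return streams that unblock."""
        self.insert_count += 1
        ready = [sid for sid, need in self.blocked.items() if need <= self.insert_count]
        for sid in ready:
            del self.blocked[sid]
        return ready


model = QpackBlockingModel()
print(model.on_header_block(0, required_insert_count=0))  # True: static table/literals only
print(model.on_header_block(4, required_insert_count=2))  # False: needs two inserts
print(model.on_header_block(8, required_insert_count=1))  # False: needs one insert
print(model.on_encoder_insert())  # [8]
print(model.on_encoder_insert())  # [4]
```

Stream 0 never waits, and streams 4 and 8 each wait only for the specific inserts they reference.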

The Ossification Problem: Why QUIC Had to Run Over UDP

When the QUIC design team was working through protocol options, they ran into a fundamental problem: TCP middleboxes.

The internet is full of devices that inspect, modify, or filter TCP traffic: firewalls, NAT devices, load balancers, WAN accelerators, deep packet inspection systems. Over decades, these devices have accumulated assumptions about TCP’s wire format. Many of them would reject or corrupt any TCP extension that changed the header format in unexpected ways.

This is called protocol ossification: the protocol has been so thoroughly inspected and processed by intermediaries that it can no longer evolve. Deployment of TCP Fast Open (a TCP extension that allows sending data in the SYN packet) ran into widespread trouble because some middleboxes dropped SYN packets carrying data. TCP's extensibility has been effectively frozen by the installed base of middleboxes.

QUIC avoids this by running over UDP, which middleboxes mostly pass through without deep inspection, and by encrypting almost all of its headers. Middleboxes cannot inspect or modify what they cannot read. This was a deliberate design choice: keeping the protocol opaque to intermediaries is the most reliable way to preserve the ability to evolve it.

The tradeoff is that QUIC looks like UDP traffic, and some corporate firewalls block or rate-limit UDP on port 443. Implementations handle this with fallback: if QUIC is blocked, the client uses HTTP/2 over TLS over TCP instead. In practice, this fallback is usually invisible to users, but it means QUIC's benefits are not available everywhere.
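Browsers typically learn that an origin offers HTTP/3 from an `Alt-Svc` response header (for example `h3=":443"; ma=86400`) received over an ordinary TCP connection, which is also why a TCP path is always available to fall back to. The sketch below parses that header and picks a transport. It is simplified: the parser assumes no commas or semicolons inside quoted values, `quic_reachable` is a hypothetical stand-in for whatever connection attempt or race a real client performs, and real clients also cache the advertisement for its `ma` (max-age), which RFC 7838 defaults to 24 hours when omitted.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AltService:
    protocol: str   # ALPN protocol ID, e.g. "h3"
    authority: str  # e.g. ":443" (same host, port 443)
    max_age: int    # seconds the advertisement stays valid


def parse_alt_svc(value: str) -> List[AltService]:
    value = value.strip()
    if value == "clear":  # the origin withdraws all alternatives
        return []
    services = []
    for entry in value.split(","):
        if not entry.strip():
            continue
        params = [part.strip() for part in entry.split(";")]
        protocol, _, authority = params[0].partition("=")
        max_age = 86400  # RFC 7838 default when "ma" is absent
        for param in params[1:]:
            key, _, val = param.partition("=")
            if key.strip() == "ma":
                max_age = int(val.strip().strip('"'))
        services.append(AltService(protocol.strip(), authority.strip().strip('"'), max_age))
    return services


def choose_transport(alt_svc: str, quic_reachable: bool) -> str:
    """Use HTTP/3 if advertised and UDP gets through; otherwise stay on TCP."""
    advertised = any(s.protocol == "h3" for s in parse_alt_svc(alt_svc))
    return "h3" if advertised and quic_reachable else "h2"


print(parse_alt_svc('h3=":443"; ma=3600'))
print(choose_transport('h3=":443"; ma=3600', quic_reachable=False))  # h2
```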

What This Means for Your Infrastructure

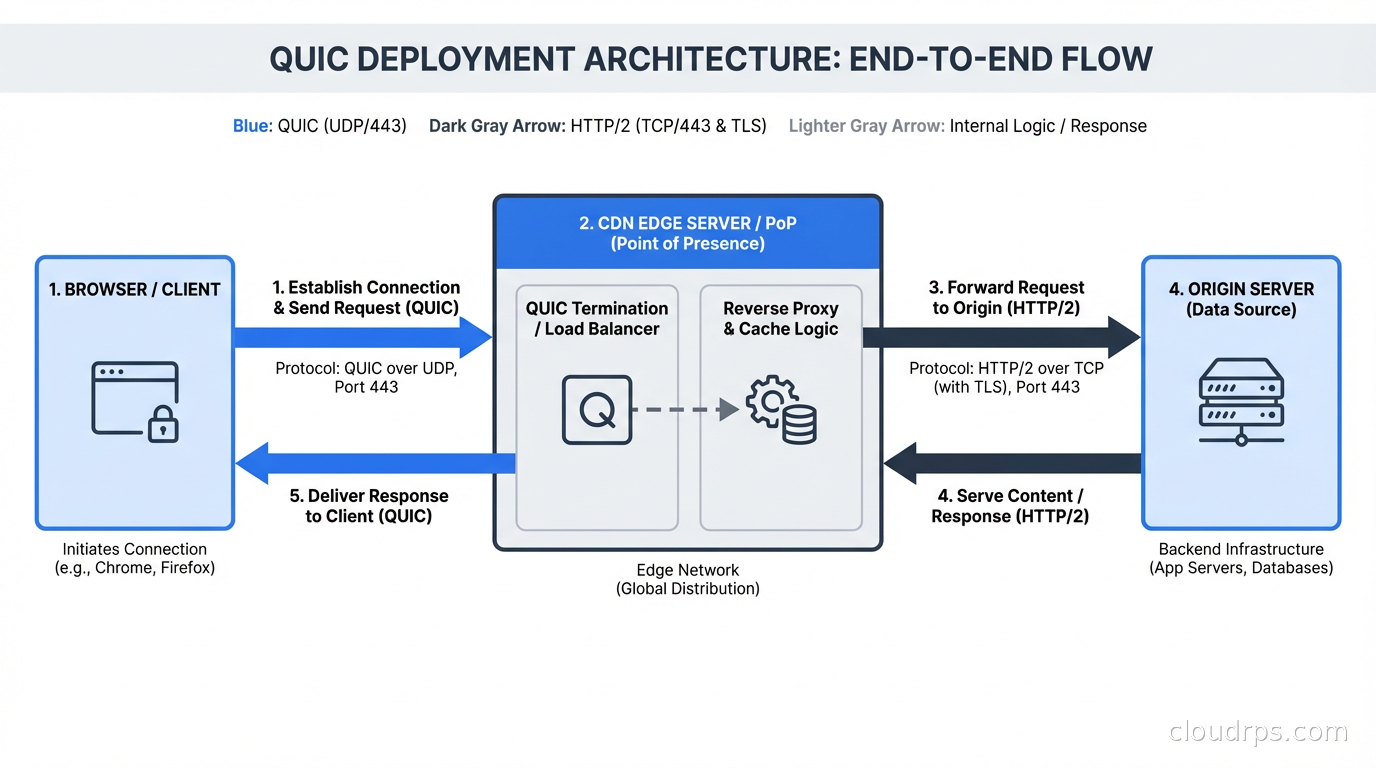

If you are running a CDN or reverse proxy in front of your application, QUIC adoption is primarily a question of whether your edge layer supports it. Cloudflare, Fastly, and AWS CloudFront all support HTTP/3 and handle the QUIC complexity for you. Your origin servers continue speaking HTTP/1.1 or HTTP/2 over TCP to the CDN. Your users get QUIC between their browser and the CDN edge. This is the low-friction path to HTTP/3 for most applications.

If you are running your own load balancers or proxies, nginx has experimental HTTP/3 support and LiteSpeed has had it for longer. HAProxy added QUIC support in version 2.6. The ecosystem has matured, but you are still earlier on the operational maturity curve than you are with HTTP/2.

For gRPC users, gRPC over QUIC is an area of active development; gRPC currently runs on HTTP/2. QUIC's stream-level independence would be particularly valuable for gRPC's multiplexed RPC calls. When a gRPC application has 10 RPCs in flight over one HTTP/2 connection and the network drops a packet, every RPC whose data sits behind the lost packet in TCP's byte stream stalls, which is often all 10. With QUIC, only the affected streams stall. High-frequency, multiplexed RPC traffic of the kind gRPC and Protocol Buffers are built for is where this would pay off most.

Server-side QUIC deployment also requires UDP socket performance at scale. TCP has decades of kernel optimizations: receive offloading, zero-copy, efficient syscall batching. UDP at high packet rates is more expensive in CPU terms for the same throughput. This gap has been narrowing as kernel support for QUIC-style UDP workloads has improved (io_uring, UDP GSO/GRO), but it is a real operational consideration for high-traffic applications.
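A back-of-the-envelope calculation shows why per-packet cost matters. QUIC datagrams are typically around 1200 to 1500 bytes, so a server pushing 10 Gbit/s handles on the order of a million packets per second. Without batching, each datagram costs at least one send syscall; with UDP GSO, one call hands the kernel a larger buffer that it (or the NIC) splits into segments. The 1200-byte datagram size, the 10 Gbit/s rate, and the 32 segments per GSO call below are assumed example values, not measurements.

```python
def packets_per_second(throughput_bps: float, datagram_bytes: int) -> float:
    return throughput_bps / (datagram_bytes * 8)


def send_calls_per_second(throughput_bps: float, datagram_bytes: int,
                          segments_per_call: int = 1) -> float:
    """segments_per_call > 1 models UDP GSO batching several datagrams per syscall."""
    return packets_per_second(throughput_bps, datagram_bytes) / segments_per_call


rate = 10e9  # assumed 10 Gbit/s of QUIC datagrams
print(f"{send_calls_per_second(rate, 1200):,.0f} send calls/s without batching")
print(f"{send_calls_per_second(rate, 1200, segments_per_call=32):,.0f} send calls/s with GSO")
```

Roughly a million syscalls per second drops to a few tens of thousands, which is why GSO/GRO support narrows the gap with TCP's offloads.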

Performance Reality: When Does QUIC Actually Help?

The marketing around QUIC sometimes implies universal performance improvements. The reality is more nuanced.

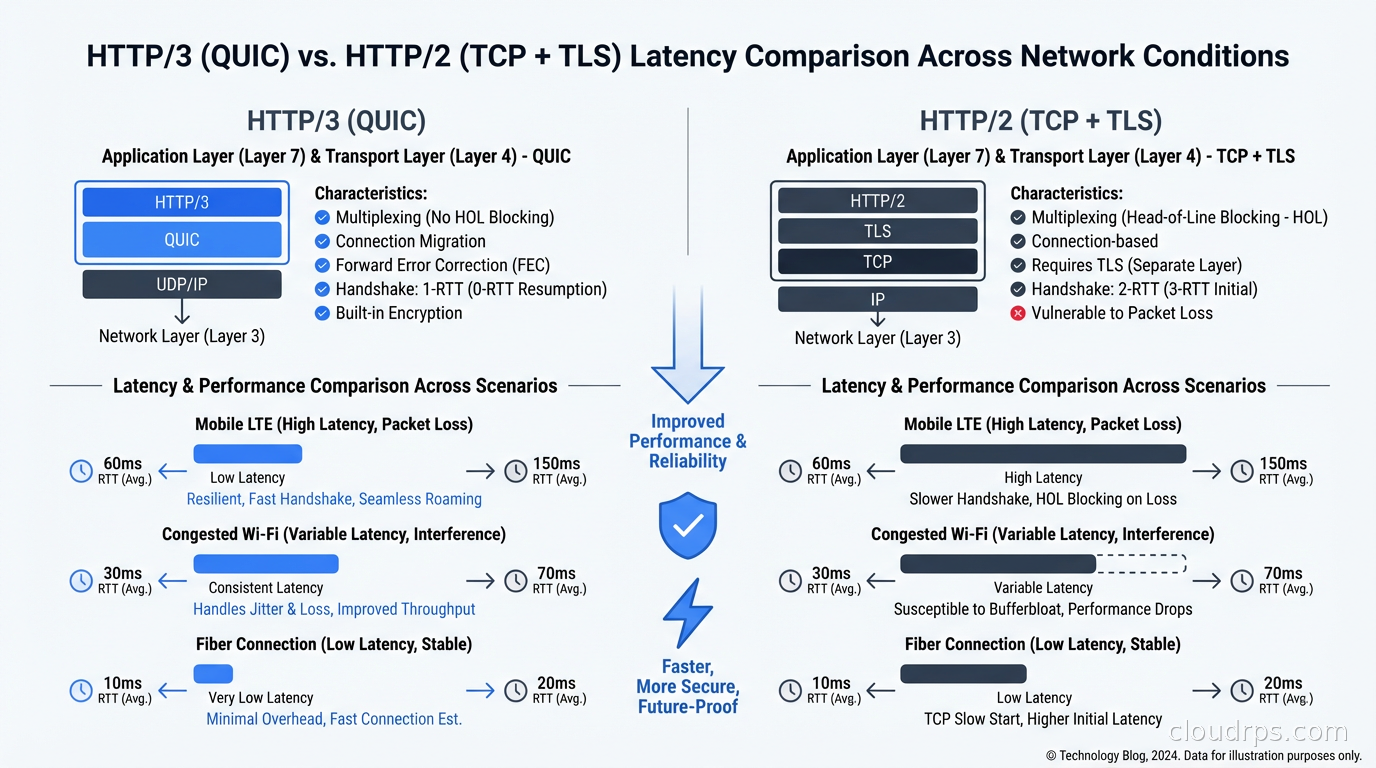

QUIC provides the largest improvements in three scenarios:

High-latency or lossy networks: Mobile connections, satellite links, congested wifi. The faster handshake, 0-RTT resumption, and elimination of cross-stream head-of-line blocking compound here. Google's published measurements (its 2017 SIGCOMM paper on QUIC) reported double-digit percentage reductions in YouTube rebuffer rates on both desktop and mobile. On a stable fiber connection, the improvement may be negligible.

Applications with many short-lived connections: QUIC's combined handshake saves a round trip on every new connection compared with TCP plus TLS, and 0-RTT saves another on resumed ones. If your application opens and closes many connections (microservices, mobile apps that background frequently), this adds up. For a long-lived WebSocket connection, the handshake savings are a one-time benefit.

High-concurrency multiplexed requests: Single-page applications that fire 20+ requests on load over a single HTTP/2 connection benefit from QUIC's stream independence. If you test on real mobile networks, or with a network emulator that injects packet loss, HTTP/3 tends to beat HTTP/2 in this scenario.

Where QUIC provides less benefit: server-to-server communication on reliable datacenter networks, long-lived streaming connections where the connection setup cost is amortized, and applications that are CPU-bound rather than network-bound.
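A crude probability model shows why loss rate and concurrency matter together. It assumes each packet is lost independently with probability p, a page load of S streams with k packets each, and round-robin interleaving, so that under TCP nearly any loss on the connection delays every stream's final bytes, while under QUIC a stream is delayed only by losses among its own packets. The 2% loss rate, 20 streams, and 5 packets per stream are illustrative values, and real loss is often bursty, which this ignores.

```python
def p_stream_stalled_tcp(p: float, streams: int, packets_per_stream: int) -> float:
    # Any loss in the shared byte stream delays in-order delivery for every stream.
    return 1 - (1 - p) ** (streams * packets_per_stream)


def p_stream_stalled_quic(p: float, packets_per_stream: int) -> float:
    # Only a loss among this stream's own packets delays it.
    return 1 - (1 - p) ** packets_per_stream


p, streams, k = 0.02, 20, 5
print(f"TCP:  {p_stream_stalled_tcp(p, streams, k):.1%} chance a given stream stalls")
print(f"QUIC: {p_stream_stalled_quic(p, k):.1%} chance a given stream stalls")
```

With these inputs the model gives about 87% for TCP and about 10% for QUIC. As p approaches zero, as on a clean fiber path, both numbers approach zero, which matches the point that the benefit there may be negligible.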

For your DNS infrastructure, the same protocol evolution is happening. DNS over HTTPS (DoH) and DNS over QUIC (DoQ), alongside DNS over TLS, are encrypted alternatives to plaintext UDP/TCP DNS. What you know about DNS resolution and record types transfers directly; only the transport underneath is changing.
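The DNS message itself does not change between transports; only the framing around it does. This sketch builds a standard wire-format DNS query and wraps it two ways: for DNS over HTTPS (RFC 8484) as a base64url `dns` parameter on a GET request, and for DNS over QUIC (RFC 9250) with the 2-byte length prefix that precedes each query on its own QUIC stream. Both RFCs call for a message ID of 0 (RFC 8484 as a recommendation for cache friendliness, RFC 9250 as a requirement). The resolver URL `https://doh.example/dns-query` is a placeholder, and the code only builds bytes; it opens no connection.

```python
import base64
import struct


def build_dns_query(name: str, qtype: int = 1) -> bytes:
    """Wire-format DNS query (QTYPE 1 = A, QCLASS 1 = IN) with message ID 0."""
    # ID=0, flags=0x0100 (recursion desired), QDCOUNT=1, other counts 0.
    header = struct.pack("!HHHHHH", 0, 0x0100, 1, 0, 0, 0)
    qname = b""
    for label in name.rstrip(".").split("."):
        encoded = label.encode("ascii")
        if not 0 < len(encoded) <= 63:
            raise ValueError(f"invalid DNS label: {label!r}")
        qname += bytes([len(encoded)]) + encoded
    qname += b"\x00"  # root label terminates the name
    return header + qname + struct.pack("!HH", qtype, 1)


def doh_get_url(endpoint: str, name: str) -> str:
    """RFC 8484 GET: base64url-encoded query without padding."""
    encoded = base64.urlsafe_b64encode(build_dns_query(name)).rstrip(b"=")
    return f"{endpoint}?dns={encoded.decode('ascii')}"


def doq_stream_payload(name: str) -> bytes:
    """RFC 9250: 2-byte length prefix, then the query, sent on a fresh QUIC stream."""
    query = build_dns_query(name)
    return struct.pack("!H", len(query)) + query


print(doh_get_url("https://doh.example/dns-query", "www.example.com"))
# https://doh.example/dns-query?dns=AAABAAABAAAAAAAAA3d3dwdleGFtcGxlA2NvbQAAAQAB
```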

Operational Considerations

A few things that come up when you start running QUIC in production.

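Firewall rules: UDP 443 needs to be open wherever QUIC terminates. This sounds obvious, but many organizations' firewall policies were written when HTTPS meant TCP 443. Verify that the security groups and firewalls in front of your QUIC endpoints (the CDN edge, or your own load balancers) allow inbound UDP on 443, and that client networks you control allow outbound UDP on 443.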
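Checking that UDP 443 is open end to end is harder than checking TCP, because a stray UDP datagram usually gets no answer. One technique uses QUIC's version negotiation: per RFC 9000, a server that receives a long-header packet of at least 1200 bytes carrying a version it does not support SHOULD reply with a Version Negotiation packet listing the versions it does support, and versions matching the pattern 0x?a?a?a?a are reserved for exercising exactly this path. The sketch below builds and sends such a probe. No reply does not prove the port is blocked (servers may rate-limit or skip these replies), the function names are hypothetical, and it only handles IPv4.

```python
import os
import socket
import struct
from typing import List, Optional

RESERVED_VERSION = 0x1A2A3A4A  # matches RFC 9000's reserved 0x?a?a?a?a pattern


def build_probe(size: int = 1200) -> bytes:
    """Long-header packet with a reserved version, padded to the 1200-byte minimum."""
    dcid, scid = os.urandom(8), os.urandom(8)
    header = (bytes([0xC0]) + struct.pack("!I", RESERVED_VERSION)
              + bytes([len(dcid)]) + dcid + bytes([len(scid)]) + scid)
    return header + b"\x00" * (size - len(header))


def parse_version_negotiation(data: bytes) -> Optional[List[int]]:
    """Return the server's supported versions, or None if this is not a VN packet."""
    if len(data) < 7 or not data[0] & 0x80:
        return None
    if struct.unpack("!I", data[1:5])[0] != 0:  # version 0 marks Version Negotiation
        return None
    pos = 6 + data[5]            # skip the DCID length byte and the DCID
    if pos >= len(data):
        return None
    pos += 1 + data[pos]         # skip the SCID length byte and the SCID
    if pos >= len(data) or (len(data) - pos) % 4:
        return None
    return [struct.unpack_from("!I", data, i)[0] for i in range(pos, len(data), 4)]


def probe_udp_443(host: str, timeout: float = 2.0) -> Optional[List[int]]:
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_probe(), (host, 443))
        try:
            data, _ = sock.recvfrom(2048)
        except OSError:  # timeout or an ICMP error surfaced by the OS
            return None  # blocked, filtered, or the server chose not to reply
    return parse_version_negotiation(data)
```

Running `probe_udp_443` against your own edge hostname from inside the network under test and getting back a version list is good evidence that UDP 443 passes through; getting `None` is only a hint.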

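Load balancer stickiness: If you are load-balancing QUIC traffic across multiple servers, you need to route every packet of a connection to the same server. This is harder than with TCP because UDP is connectionless from the network's perspective, and routing on the 4-tuple breaks when a client's address changes through NAT rebinding or connection migration. Connection IDs are chosen by the endpoints: the client picks a random destination connection ID for its first packets, and the server then issues its own IDs, which the client uses from then on. Cloud load balancers handle this. If you are rolling your own, you need to route on the destination connection ID, which is readable in the cleartext portion of the QUIC header, and have your servers issue IDs that identify which server owns the connection.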
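Here is a minimal sketch of connection-ID-based routing under stated assumptions: the servers behind the load balancer agree to issue 8-byte connection IDs whose first byte is the index of the issuing backend (a simplified, unencrypted take on the idea behind the IETF QUIC-LB draft), and the load balancer knows that 8-byte length because short-header packets do not carry it. Any other destination ID, such as a client's random ID on its first Initial packets, is routed by a stable hash so that every packet carrying it lands on the same backend. Exposing the backend index in the clear lets observers learn it, which is one reason QUIC-LB also defines encrypted modes. The backend addresses are hypothetical.

```python
import hashlib
from typing import List

SERVER_CID_LEN = 8  # assumed: length of the connection IDs our servers issue


def destination_cid(packet: bytes) -> bytes:
    """Extract the Destination Connection ID from the unencrypted part of a QUIC header."""
    if not packet:
        raise ValueError("empty datagram")
    if packet[0] & 0x80:
        # Long header: first byte | version (4) | DCID length (1) | DCID | ...
        if len(packet) < 6:
            raise ValueError("truncated long header")
        cid_len = packet[5]
        cid = packet[6:6 + cid_len]
    else:
        # Short header: first byte | DCID, whose length the receiver must already know
        cid_len = SERVER_CID_LEN
        cid = packet[1:1 + cid_len]
    if len(cid) != cid_len:
        raise ValueError("truncated connection ID")
    return cid


def pick_backend(packet: bytes, backends: List[str]) -> str:
    cid = destination_cid(packet)
    if len(cid) == SERVER_CID_LEN and cid[0] < len(backends):
        return backends[cid[0]]  # server-issued ID: first byte names the backend
    # Otherwise (e.g. a client's random initial ID): stable hash of the ID.
    digest = hashlib.sha256(cid).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


backends = ["10.0.0.1", "10.0.0.2", "10.0.0.3"]
issued = bytes([2]) + bytes(7)              # ID issued by backend index 2
short_pkt = bytes([0x40]) + issued + b"..."  # 1-RTT short-header packet
print(pick_backend(short_pkt, backends))    # 10.0.0.3
```

Because both branches are deterministic functions of the connection ID, a client's random initial ID (which is at least 8 bytes) always maps to the same backend, even in the rare case where it happens to match the server-issued layout.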

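Observability: Traditional network monitoring tools that infer connection health from TCP headers (sequence numbers, acknowledgments, retransmissions) cannot do the same for QUIC, since most QUIC headers are encrypted. You need instrumentation at the endpoints, in the QUIC stack itself, to get connection metrics such as RTT, loss, and congestion window. This is a gap in many monitoring stacks. eBPF-based observability helps here: kernel probes show UDP-level behavior such as packet rates and drops, and user-space probes attached to the QUIC library can expose connection state from inside the process, all without decrypting traffic on the wire.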

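MTU and packet sizing: QUIC runs over UDP and must not rely on IP fragmentation (it sets the Don't Fragment bit where it can), so datagrams larger than the path MTU are simply dropped. QUIC requires paths to carry UDP payloads of at least 1200 bytes and uses path MTU discovery to send larger packets when the path allows. Classic path MTU discovery depends on ICMP, so networks that filter ICMP can cause problems; QUIC implementations can instead probe with their own packets (DPLPMTUD), but a path that cannot carry 1200-byte datagrams, such as a tunnel with a small MTU, cannot run QUIC at all, and clients fall back to TCP.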
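The 1200-byte floor is easy to check against a path's MTU. RFC 9000 assumes IP packets of at least 1280 bytes (the IPv6 minimum MTU) and works out the resulting maximum datagram sizes by subtracting minimal IP and UDP headers. This sketch does the same subtraction, assuming fixed 20-byte IPv4 and 40-byte IPv6 headers with no options or extension headers; the 1240-byte tunnel MTU in the example is hypothetical.

```python
IP_HEADER_BYTES = {4: 20, 6: 40}  # minimum headers, no options/extension headers
UDP_HEADER_BYTES = 8
QUIC_MIN_UDP_PAYLOAD = 1200       # RFC 9000 minimum datagram size


def max_udp_payload(mtu: int, ip_version: int) -> int:
    return mtu - IP_HEADER_BYTES[ip_version] - UDP_HEADER_BYTES


def quic_fits(mtu: int, ip_version: int) -> bool:
    return max_udp_payload(mtu, ip_version) >= QUIC_MIN_UDP_PAYLOAD


for mtu, ver in [(1500, 4), (1280, 6), (1240, 6)]:
    print(f"MTU {mtu} IPv{ver}: max UDP payload {max_udp_payload(mtu, ver)}, "
          f"QUIC usable: {quic_fits(mtu, ver)}")
```

A standard Ethernet path leaves 1472 bytes, the IPv6 minimum leaves 1232, and the hypothetical 1240-byte tunnel leaves only 1192, below QUIC's floor.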

The Trajectory

QUIC and HTTP/3 are not experimental anymore. They are production protocols running at massive scale at Google, Cloudflare, Meta (formerly Facebook, which built and open-sourced the mvfst QUIC implementation), and Akamai. Current versions of all major browsers, including Chrome, Firefox, Safari, and Edge, support HTTP/3.

The next frontier is QUIC in contexts beyond web browsing. DNS over QUIC, WebTransport (which builds a general-purpose transport API on top of QUIC for real-time web applications), and QUIC for IoT are all active development areas. WebTransport in particular is interesting because it gives web applications access to QUIC’s stream model directly, enabling patterns that were previously only possible with WebSocket or custom TCP protocols.

For your CDN architecture and your user-facing services, supporting HTTP/3 today is low-risk and high-reward. Turn it on at the CDN layer, measure the impact on your mobile user metrics, and let the protocol do its work. The networking details covered here are the foundation that explains why your users’ experience improves, even when it is invisible from the application code.
