I’ve been building and securing cloud infrastructure for over a decade, and I can tell you the threat model has completely shifted. When I started, security meant hardening your perimeter, patching servers, and keeping your SSH keys safe. Today, the most dangerous attacks don’t hit your running systems at all. They hit your build pipeline and ride trusted software right past every control you’ve spent years building.

SolarWinds changed everything. The lesson was less about any single technical trick than about patience: it demonstrated that attackers could reach roughly 18,000 organizations through a single trusted software update. If you installed the trojanized SolarWinds Orion update, the backdoor was already inside your network. Doesn’t matter how hardened your prod environment was.

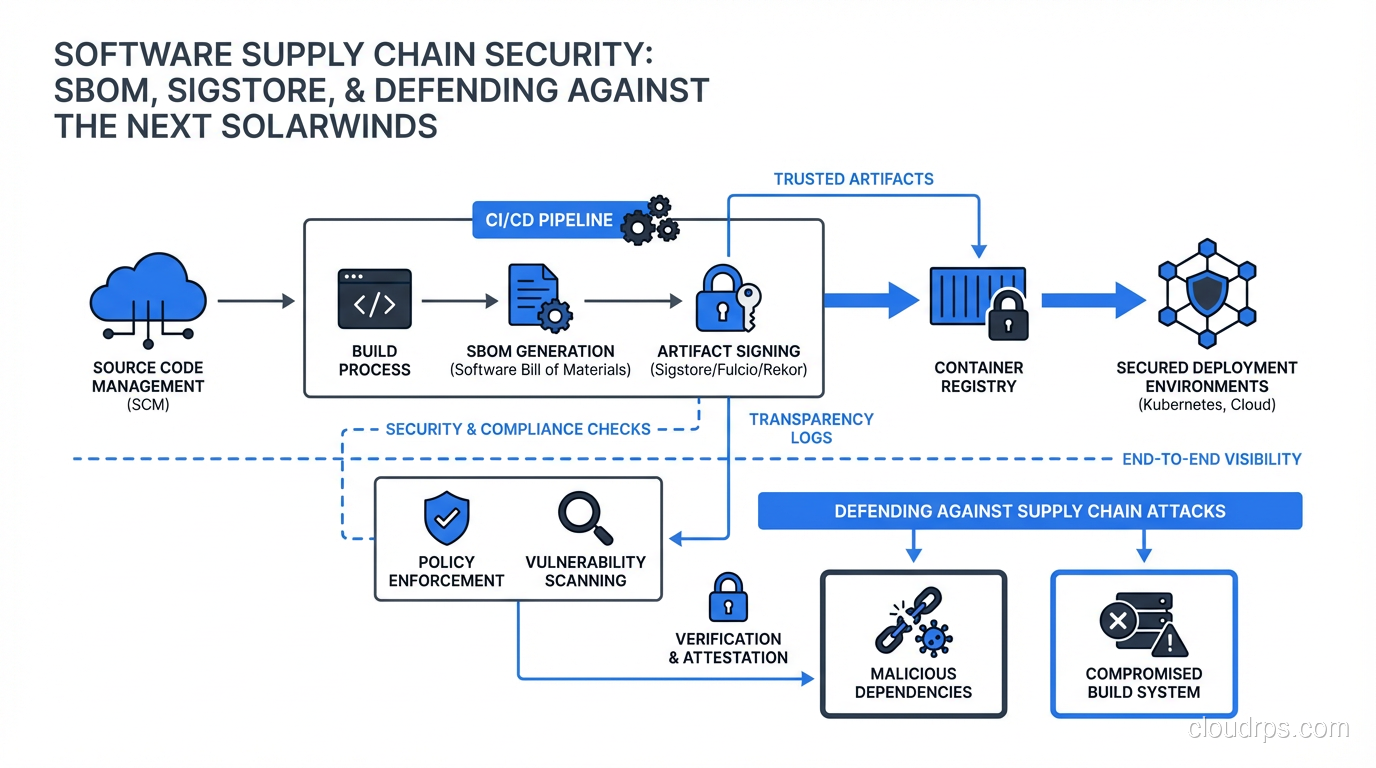

Supply chain security is now a first-class concern for any team running production workloads. Let me walk you through the practical reality: what the threats look like, how SBOMs work, and how to actually implement artifact signing without driving your developers insane.

What “Software Supply Chain” Actually Means

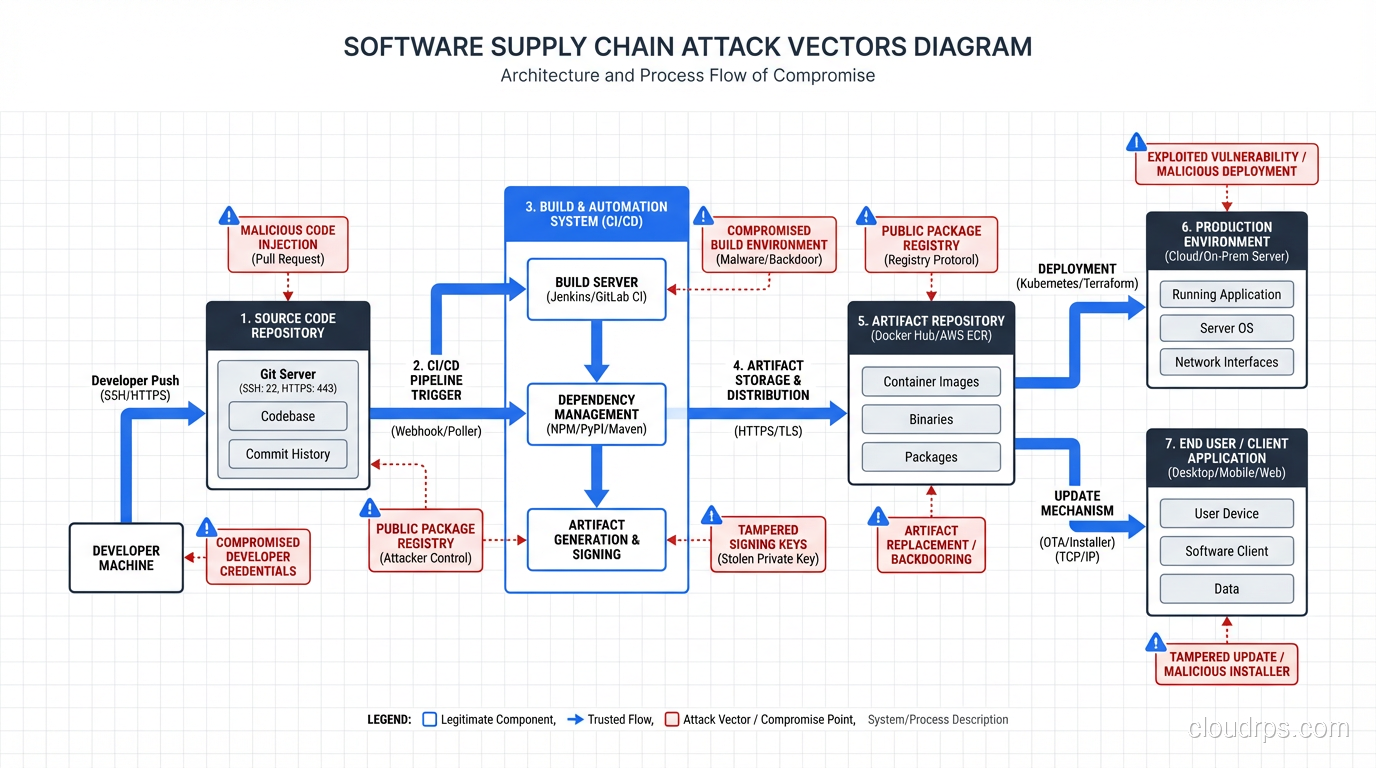

The software supply chain is everything that goes into your running application: the open source libraries, the build tools, the CI/CD system, the container base images, the package registries, and the third-party SDKs. Every link in that chain is a potential injection point.

When I audit production systems, I find the same pattern repeatedly: engineers obsess over their application code (rightfully) but treat dependencies as trusted black boxes. Your application might have 200 lines of hand-reviewed code and 50,000 lines of transitive dependencies nobody has read. That ratio is where attackers live.

The categories of supply chain attacks I’ve seen in the wild:

Dependency confusion attacks: An attacker publishes a malicious package to a public registry (npm, PyPI, RubyGems) with the same name as an internal private package but a higher version number. Build systems that consult public registries alongside private ones, and take whichever version is highest, will pull the malicious package instead. This is devastatingly simple and has worked against Microsoft, Apple, and dozens of others.

Typosquatting: reqeusts instead of requests. colurama instead of colorama. Attackers register packages with names one keystroke away from popular libraries and wait for fat-fingered developers.

Compromised maintainer accounts: Take over a popular package’s npm account through phishing or credential stuffing, push a malicious version, and watch it flow into thousands of applications overnight. The event-stream incident in 2018 was a variation on this: the original maintainer handed publishing rights to a volunteer, who then added a malicious dependency to a package with roughly 2 million weekly downloads.

Malicious build tooling: Compromise the tools that compile your code. If your compiler or build system is malicious, it can inject backdoors into every binary it produces, regardless of how clean your source code is.

Container image tampering: Someone gains write access to a container registry and modifies an existing image. If you’re pulling by tag rather than digest, your next deployment picks up the modified image.
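To make the dependency confusion case above concrete, here’s a toy model of a resolver that merges candidates from every registry and takes the highest version, plus one possible mitigation. The registry contents, the acme-billing package name, and the function names are all hypothetical; real package managers differ in the details.

```python
# Toy resolver: merges candidates from all registries and picks the highest
# version, which is the behavior dependency confusion exploits.
# Registry contents and package names are hypothetical.

def parse_version(version: str) -> tuple:
    """Turn '1.4.0' into (1, 4, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))

def naive_resolve(name, registries):
    """Return (registry, version) with the highest version across registries."""
    candidates = [
        (registry, version)
        for registry, packages in registries.items()
        for version in packages.get(name, [])
    ]
    if not candidates:
        raise LookupError(name)
    return max(candidates, key=lambda c: parse_version(c[1]))

def scoped_resolve(name, registries, internal_names, private="private"):
    """Mitigation sketch: internal names are only ever resolved privately."""
    if name in internal_names:
        return naive_resolve(name, {private: registries[private]})
    return naive_resolve(name, registries)

registries = {
    "private": {"acme-billing": ["1.4.0"]},
    "public": {"acme-billing": ["99.0.0"], "requests": ["2.31.0"]},
}
print(naive_resolve("acme-billing", registries))                     # attacker's package wins
print(scoped_resolve("acme-billing", registries, {"acme-billing"}))  # private package wins
```

The mitigation here is an allowlist of internal names; scoped package namespaces and a single private mirror achieve a similar effect.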

The common thread in all of these: your existing runtime security controls don’t help. The malicious code arrives as legitimate, signed (sometimes), trusted software.

What is a Software Bill of Materials (SBOM)?

An SBOM is a machine-readable inventory of every component in a piece of software: the libraries it uses, their versions, their licenses, the provenance of each piece, and, in formats that support it, the known vulnerabilities.

Think of it like a nutrition label for software. Instead of “calories, fat, sodium,” you get “lodash 4.17.21, OpenSSL 3.0.7, log4j 2.17.1.” Critically, it includes transitive dependencies, not just direct ones. The stuff two or three levels deep that nobody thinks about until there’s a Log4Shell.

There are two main SBOM formats:

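SPDX (Software Package Data Exchange): Developed under the Linux Foundation and standardized as ISO/IEC 5962:2021. Available in JSON, YAML, tag-value, and other serializations. It is one of the formats named in the NTIA minimum-elements guidance that followed Executive Order 14028, alongside CycloneDX and SWID tags. Verbose but comprehensive.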

CycloneDX: Developed by OWASP. Generally considered more actionable for security use cases because it has better support for vulnerability correlation. Most commercial tooling generates CycloneDX.

Here’s what a minimal CycloneDX SBOM looks like for a container image:

    {
      "bomFormat": "CycloneDX",
      "specVersion": "1.5",
      "metadata": {
        "timestamp": "2025-04-04T09:00:00Z",
        "component": {
          "type": "container",
          "name": "my-api",
          "version": "1.2.3",
          "purl": "pkg:oci/my-api@sha256:abc123..."
        }
      },
      "components": [
        {
          "bom-ref": "openssl",
          "type": "library",
          "name": "openssl",
          "version": "3.0.7",
          "purl": "pkg:deb/debian/openssl@3.0.7",
          "licenses": [{"license": {"id": "Apache-2.0"}}]
        }
      ],
      "vulnerabilities": [
        {
          "id": "CVE-2023-0286",
          "affects": [{"ref": "openssl"}],
          "ratings": [{"severity": "high"}]
        }
      ]
    }

The key field is the purl (Package URL), which provides a standardized, unambiguous identifier for any package across any ecosystem. You can take a purl, query it against a vulnerability database (NVD, OSV, GitHub Advisory), and know exactly what CVEs affect it.
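As a rough illustration of how regular the purl format is, here’s a simplified parser sketch. The Purl type and parse_purl function are hypothetical names, and the sketch deliberately ignores qualifiers, subpaths, and percent-encoding; for real use, a maintained purl library is the safer choice.

```python
from typing import NamedTuple, Optional

class Purl(NamedTuple):
    type: str
    namespace: Optional[str]
    name: str
    version: Optional[str]

def parse_purl(purl: str) -> Purl:
    """Split a purl into type, namespace, name, and version (simplified)."""
    scheme, sep, rest = purl.partition(":")
    if scheme != "pkg" or not sep:
        raise ValueError(f"not a purl: {purl!r}")
    # Drop the optional subpath (#...) and qualifiers (?...).
    rest = rest.split("#", 1)[0].split("?", 1)[0]
    if "@" in rest:
        path, _, version = rest.rpartition("@")
    else:
        path, version = rest, ""
    parts = path.strip("/").split("/")
    if len(parts) < 2:
        raise ValueError(f"purl needs a type and a name: {purl!r}")
    pkg_type, *namespace, name = parts
    return Purl(pkg_type.lower(), "/".join(namespace) or None, name, version or None)

print(parse_purl("pkg:deb/debian/openssl@3.0.7"))
```

Once the parts are separated, the type and name identify the ecosystem and package to look up, and the version is what gets compared against affected ranges.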

Generating SBOMs used to be painful. Today there are good tools: Syft from Anchore will generate an SBOM from a container image, directory, or archive, typically in seconds, and its companion Grype scans the result. Trivy will generate and scan in one shot. All three are free and integrate into any CI pipeline.

    # Generate SBOM for a container image
    syft my-api:1.2.3 -o cyclonedx-json > sbom.json

    # Scan the SBOM against vulnerability databases
    grype sbom:./sbom.json

    # Or do both at once with Trivy
    trivy image --format cyclonedx --output sbom.json my-api:1.2.3
    trivy sbom sbom.json

Having an SBOM is table stakes. The real value is continuous scanning: when a new CVE drops, you can immediately know which of your deployed applications are affected instead of spending three days grepping through dependency files.
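Here’s a minimal sketch of that “are we affected?” lookup, assuming you keep one CycloneDX SBOM per deployed application. The application names, the in-memory dictionary, and the affected_apps function are hypothetical; a real setup would load SBOMs from storage and match version ranges rather than exact versions.

```python
def affected_apps(sboms, name, bad_versions):
    """Return apps whose SBOM lists `name` at one of `bad_versions`."""
    hits = []
    for app, sbom in sboms.items():
        for component in sbom.get("components", []):
            if component.get("name") == name and component.get("version") in bad_versions:
                hits.append(app)
                break  # one match is enough to flag the app
    return sorted(hits)

# Hypothetical inventory: app name -> parsed CycloneDX document.
sboms = {
    "my-api": {"components": [{"name": "log4j-core", "version": "2.14.1"}]},
    "billing": {"components": [{"name": "log4j-core", "version": "2.17.1"}]},
    "frontend": {"components": [{"name": "lodash", "version": "4.17.21"}]},
}
print(affected_apps(sboms, "log4j-core", {"2.14.0", "2.14.1"}))
```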

Artifact Signing with Sigstore

Knowing what’s in your software is only half the problem. The other half is knowing that what you received is actually what was built and hasn’t been tampered with.

This is what artifact signing solves, and this is where Sigstore is changing the game.

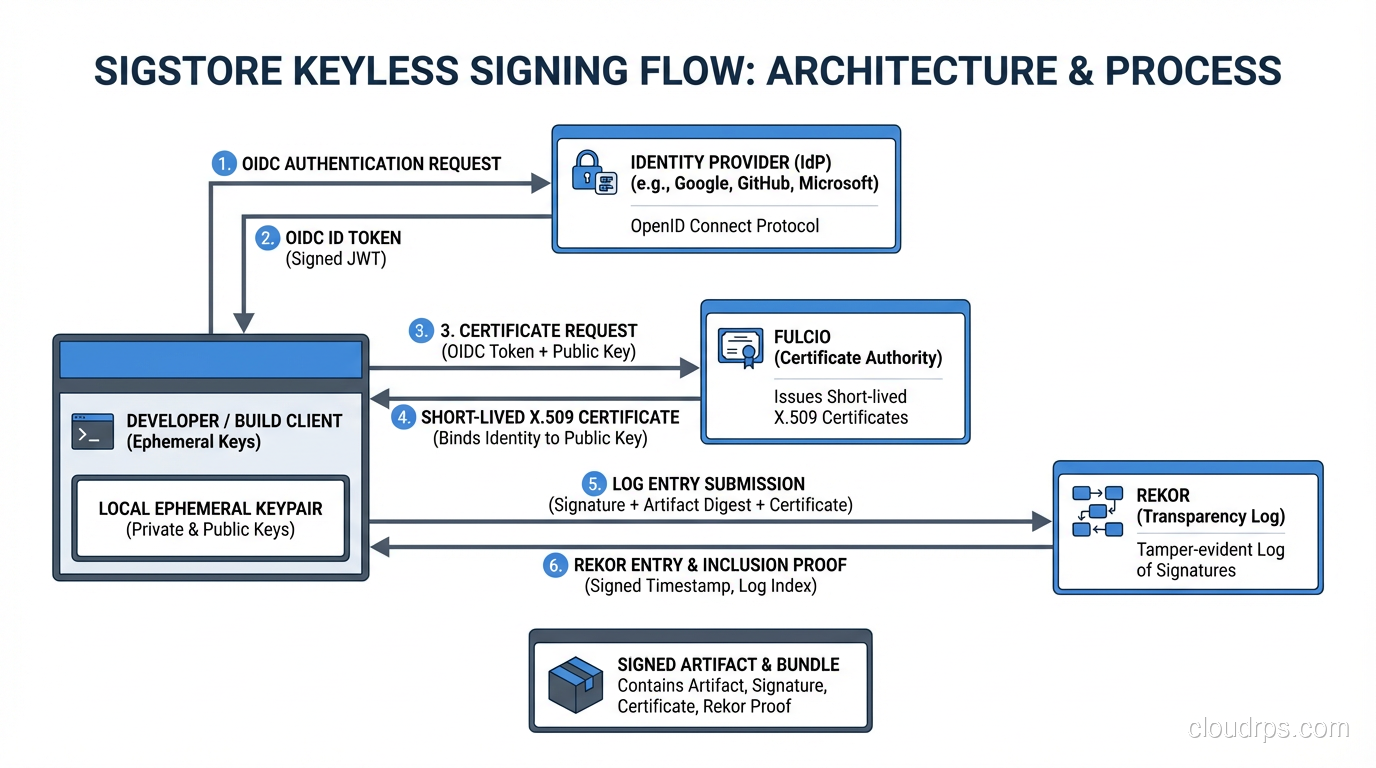

Traditional code signing is a mess. It requires managing private keys, storing them securely (HSM or bust), distributing them to signing machines, and rotating them when they’re compromised. Most teams either don’t do it at all or do it badly, leaving their private key sitting in a CI environment variable somewhere.

Sigstore takes a different approach called keyless signing. Instead of managing long-lived private keys, you use your existing identity (GitHub Actions OIDC token, Google Workload Identity, etc.) to get a short-lived signing certificate from Fulcio, Sigstore’s certificate authority, and the signing event is recorded in a public transparency log called Rekor. The certificate is valid for 10 minutes: long enough to sign your artifact, short enough that a compromised ephemeral signing key is nearly useless.
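One consequence of short-lived certificates is that verification can’t ask “is this certificate valid right now?” It has to ask whether the signature was made while the certificate was valid, using the transparency log’s timestamp as evidence. A minimal sketch of that check, with hypothetical names and the 10-minute lifetime taken from the paragraph above:

```python
from datetime import datetime, timedelta, timezone

CERT_LIFETIME = timedelta(minutes=10)  # lifetime quoted in the text above

def signed_while_valid(cert_not_before, logged_at, lifetime=CERT_LIFETIME):
    """True if the log timestamp falls inside the certificate's validity window."""
    return cert_not_before <= logged_at <= cert_not_before + lifetime

# Hypothetical issuance time for illustration.
issued = datetime(2025, 4, 4, 9, 0, tzinfo=timezone.utc)
print(signed_while_valid(issued, issued + timedelta(minutes=3)))   # inside the window
print(signed_while_valid(issued, issued + timedelta(minutes=11)))  # after expiry
```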

The components:

Cosign: The tool you actually use to sign and verify container images, files, and SBOMs.

Rekor: The public, append-only transparency log. Every signature is recorded here. Anyone can verify that a signature was created, and nobody can delete signatures without it being visible.

Fulcio: The certificate authority that issues the short-lived signing certificates, backed by OIDC identity.
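To see why an append-only log makes deletion visible, here’s a deliberately simplified sketch using a hash chain. This is not how Rekor is implemented (it uses a Merkle tree), and the entry strings and function name are hypothetical, but the property is the same: change or remove any entry and the published head no longer matches.

```python
import hashlib

GENESIS = "0" * 64  # arbitrary starting value for the chain

def chain_head(payloads):
    """Fold every entry into a running SHA-256 hash and return the final head."""
    head = GENESIS
    for payload in payloads:
        head = hashlib.sha256((head + payload).encode("utf-8")).hexdigest()
    return head

entries = ["sig-for-image-a", "sig-for-image-b", "sig-for-image-c"]
published_head = chain_head(entries)

# Silently dropping the middle entry changes every hash after it.
tampered = [entries[0], entries[2]]
print(chain_head(tampered) == published_head)
```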

Here’s what signing looks like in a GitHub Actions workflow:

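    # The job needs `permissions: id-token: write` to request the OIDC token.
    - name: Install Cosign
      # Pin to a release tag (or, better, a full commit SHA), not a branch.
      uses: sigstore/cosign-installer@v3

    - name: Sign the Docker image
      # Cosign 2.x signs keylessly by default; COSIGN_EXPERIMENTAL is no longer needed.
      run: |
        cosign sign --yes \
          --oidc-issuer https://token.actions.githubusercontent.com \
          ${{ env.REGISTRY }}/${{ env.IMAGE_NAME }}@${{ steps.build.outputs.digest }}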

That’s it. No key management, no secrets to rotate, no HSM required. The identity is your GitHub Actions run itself, tied to the specific workflow, repository, and commit SHA.

Verification is equally simple:

    cosign verify \
      --certificate-identity-regexp "https://github.com/myorg/myrepo/.*" \
      --certificate-oidc-issuer "https://token.actions.githubusercontent.com" \
      my-registry/my-image@sha256:abc123...

This tells you that the image was signed by a GitHub Actions workflow in the myorg/myrepo repository. The signature covers the image digest, so a tampered image has a different digest and no valid signature. Someone who can’t obtain an OIDC token for that identity can’t get a matching certificate from Fulcio, and because signatures are recorded in Rekor, unexpected signing activity is publicly visible.
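Conceptually, the identity part of verification comes down to two comparisons: the issuer must match, and the certificate identity must match your pattern. The sketch below models that with hypothetical names, and it assumes the pattern is applied as an unanchored regular expression search. Under that assumption, it’s worth anchoring the pattern and escaping the dots, as the lookalike example shows.

```python
import re

ISSUER = "https://token.actions.githubusercontent.com"

def identity_matches(cert_identity, cert_issuer, identity_regexp, expected_issuer):
    """Issuer must match exactly; identity must match the regexp (unanchored search)."""
    return (
        cert_issuer == expected_issuer
        and re.search(identity_regexp, cert_identity) is not None
    )

# Hypothetical workflow identity in the form GitHub Actions certificates use.
good = "https://github.com/myorg/myrepo/.github/workflows/release.yml@refs/heads/main"
lookalike = "https://githubXcom/myorg/myrepo/.github/workflows/release.yml@refs/heads/main"

loose = "https://github.com/myorg/myrepo/.*"        # '.' matches any character
strict = r"^https://github\.com/myorg/myrepo/"      # anchored, dots escaped

print(identity_matches(good, ISSUER, strict, ISSUER))
print(identity_matches(lookalike, ISSUER, loose, ISSUER))
print(identity_matches(lookalike, ISSUER, strict, ISSUER))
```

The lookalike identity is contrived, but it shows why a tight pattern is cheap insurance.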

I rolled this out at a previous company and the pushback was immediate: “This is overkill, we’re not a high-value target.” Six months later, one of our base images had a critical CVE. Because we had Cosign in our admission controller (more on that below), we could prove definitively which workloads were using the affected base image and which had been rebuilt with the patched version. The signing infrastructure paid for itself in that one incident response.

Policy Enforcement: Admission Controllers and OPA

Signing and generating SBOMs is useless if you don’t enforce policies at deployment time. This is where Kubernetes admission controllers come in.

Kyverno and OPA Gatekeeper are the two main options for policy enforcement in Kubernetes. Kyverno has simpler policy syntax. Gatekeeper is more powerful for complex policies. I’ve used both; for supply chain policies specifically, Kyverno is usually the better starting point.

A basic Kyverno policy to require signed images:

    apiVersion: kyverno.io/v1
    kind: ClusterPolicy
    metadata:
      name: require-signed-images
    spec:
      validationFailureAction: Enforce
      rules:
        - name: check-image-signature
          match:
            any:
              - resources:
                  kinds:
                    - Pod
          verifyImages:
            - imageReferences:
                - "my-registry.io/myorg/*"
              attestors:
                - count: 1
                  entries:
                    - keyless:
                        subject: "https://github.com/myorg/*/.github/workflows/*.yml@refs/heads/main"
                        issuer: "https://token.actions.githubusercontent.com"
                        rekor:
                          url: https://rekor.sigstore.dev

This policy blocks any Pod whose image from your registry lacks a valid signature. The subject pattern restricts which GitHub Actions workflows can produce acceptable signatures, so an image that is unsigned, or signed by some other identity, won’t deploy even if someone manages to push it.

You can layer additional policies on top: require SBOMs to be attached to images as attestations, require vulnerability scans to have been run within the last 24 hours, require that no critical CVEs are present.

The operational reality is that you need to be thoughtful about the rollout order. I always recommend starting in Audit mode rather than Enforce, which logs violations without blocking deployments. Let it run for two weeks to catch everything that would have been blocked, fix those cases, then flip to Enforce. Jumping straight to enforce in production at 2am because you wanted to show momentum is how you cause a production incident.
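The layered checks and the Audit-then-Enforce rollout described above boil down to fairly simple logic. Here’s a sketch of it in plain Python, not Kyverno syntax. The image metadata fields (signed, sbom_attested, scanned_at, critical_cves) and function names are hypothetical stand-ins for whatever your admission controller actually evaluates.

```python
from datetime import datetime, timedelta, timezone

def find_violations(image, now, max_scan_age=timedelta(hours=24)):
    """Collect every policy violation for one image's (hypothetical) metadata."""
    found = []
    if not image.get("signed"):
        found.append("image is not signed")
    if not image.get("sbom_attested"):
        found.append("no SBOM attestation")
    scanned_at = image.get("scanned_at")
    if scanned_at is None or now - scanned_at > max_scan_age:
        found.append("vulnerability scan missing or stale")
    if image.get("critical_cves", 0) > 0:
        found.append("critical CVEs present")
    return found

def admit(image, now, mode="Audit"):
    """Audit records violations but admits the Pod; Enforce rejects it."""
    found = find_violations(image, now)
    return (mode == "Audit" or not found), found

now = datetime(2025, 4, 4, 9, 0, tzinfo=timezone.utc)
stale = {"signed": True, "sbom_attested": True,
         "scanned_at": now - timedelta(days=3), "critical_cves": 0}
print(admit(stale, now, mode="Audit"))
print(admit(stale, now, mode="Enforce"))
```

Running in Audit first gives you the full list of violations without the outage, which is exactly the data you need before flipping the mode.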

Integrating supply chain security ties directly into your existing secret management practices and extends naturally from your zero trust security model: just as you don’t trust network location, you shouldn’t trust software provenance claims without verification.

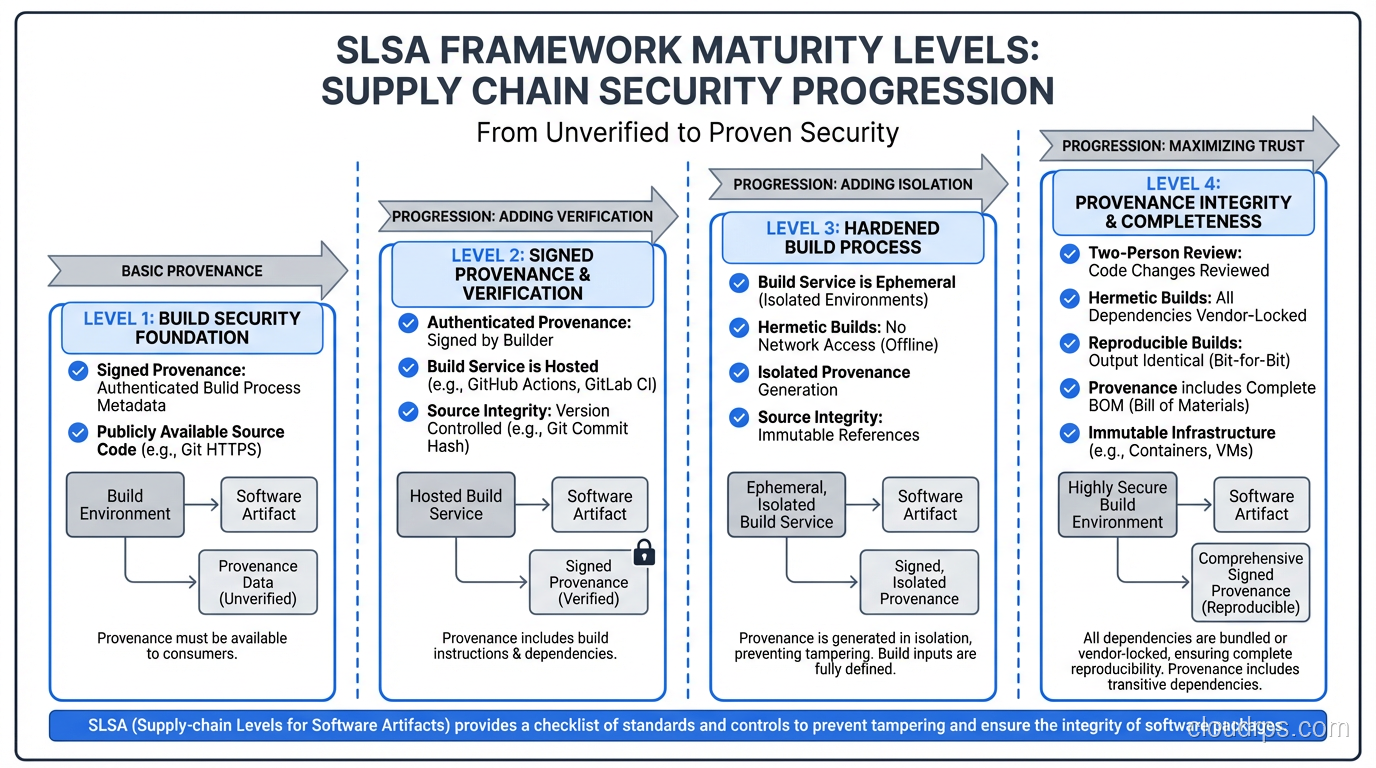

The SLSA Framework

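SLSA (Supply-chain Levels for Software Artifacts, pronounced “salsa”) is a security framework that originated at Google and is now maintained under the OpenSSF. It defines concrete, incremental levels of supply chain security maturity.

The four levels below follow the original v0.1 numbering; the v1.0 specification reorganized them into tracks and currently defines Build levels 1 through 3: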

SLSA 1: Build process is scripted/automated, basic provenance is available.

SLSA 2: Version control used, hosted build service that generates signed provenance.

SLSA 3: Source and build platforms meet specific security standards. The build process is isolated; it cannot be influenced by previous builds or the build service itself.

SLSA 4 (the gold standard): Two-person review of all source code changes, hermetic builds (no network access during build, reproducible), comprehensive provenance.

Most organizations start at SLSA 1 (they use CI/CD) and get to SLSA 2 fairly easily (use GitHub Actions OIDC to sign provenance). SLSA 3 requires more architectural investment: isolated build environments, ephemeral build workers, no persistent state between builds. SLSA 4 is currently achievable but impractical for most organizations’ cadences.
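If you want to track this in a dashboard, the levels map naturally onto a cumulative checklist. The sketch below is only an illustration: the control names are my own shorthand for the descriptions above, not identifiers from the SLSA specification.

```python
# Shorthand control names are hypothetical, derived from the level descriptions above.
SLSA_REQUIREMENTS = {
    1: {"scripted_build", "provenance"},
    2: {"version_control", "hosted_build_service", "signed_provenance"},
    3: {"isolated_builds", "ephemeral_workers"},
    4: {"two_person_review", "hermetic_builds"},
}

def slsa_level(controls):
    """Return the highest level whose requirements, and all lower ones, are met."""
    level = 0
    for candidate in sorted(SLSA_REQUIREMENTS):
        if not SLSA_REQUIREMENTS[candidate] <= controls:
            break  # levels are cumulative, so stop at the first gap
        level = candidate
    return level

today = {"scripted_build", "provenance", "version_control",
         "hosted_build_service", "signed_provenance"}
print(slsa_level(today))
```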

The framework matters because it gives you a vocabulary for conversations with leadership. “We’re at SLSA 2 today, working toward SLSA 3” is more meaningful than “we’re working on supply chain security.”

Dependency Management: The Ongoing Battle

The most unglamorous part of supply chain security is dependency management, and it’s where most organizations fail.

Lock files are non-negotiable. package-lock.json, Pipfile.lock, go.sum (alongside go.mod), Cargo.lock. These files pin exact versions or checksums, including transitive dependencies. Without them, npm install on Monday might pull different packages than npm install on Thursday. Commit them. Review changes to them. In CI, use the install mode that refuses to deviate from the lock file, such as npm ci.
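Reviewing lock file changes is easier with a summary than with a raw diff. Here’s a small sketch that compares two parsed package-lock.json documents using the packages map found in lockfile versions 2 and 3. The lock_changes function is hypothetical and the integrity values are placeholders; a change in the resolved URL or integrity hash without a version bump deserves a closer look.

```python
def lock_changes(old, new):
    """List added, removed, and changed entries between two parsed lock files."""
    old_pkgs, new_pkgs = old.get("packages", {}), new.get("packages", {})
    changes = []
    for path in sorted(old_pkgs.keys() | new_pkgs.keys()):
        before, after = old_pkgs.get(path), new_pkgs.get(path)
        if before is None:
            changes.append(f"added {path} {after.get('version')}")
        elif after is None:
            changes.append(f"removed {path}")
        else:
            for field in ("version", "resolved", "integrity"):
                if before.get(field) != after.get(field):
                    changes.append(
                        f"{path}: {field} {before.get(field)} -> {after.get(field)}"
                    )
    return changes

# Placeholder data; real integrity values are long sha512 strings.
old = {"packages": {"node_modules/lodash": {
    "version": "4.17.20",
    "resolved": "https://registry.npmjs.org/lodash/-/lodash-4.17.20.tgz",
    "integrity": "sha512-old"}}}
new = {"packages": {
    "node_modules/lodash": {
        "version": "4.17.21",
        "resolved": "https://registry.npmjs.org/lodash/-/lodash-4.17.21.tgz",
        "integrity": "sha512-new"},
    "node_modules/left-pad": {"version": "1.3.0"}}}

for line in lock_changes(old, new):
    print(line)
```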

Renovate Bot or Dependabot: Automated dependency updates are no longer optional. The threat model has inverted: keeping old dependencies is now riskier than updating them. I’ve seen teams keep a dependency frozen for 18 months because “it works, don’t touch it” only to discover it had three critical CVEs that were disclosed two years earlier. Use Renovate Bot (my preference over Dependabot for its superior grouping and scheduling features) and merge security updates within 48 hours.

Private package mirrors: For critical systems, mirror the packages you use from public registries to your own internal registry. This protects against dependency confusion attacks and registry outages. Artifactory and Nexus both support this. The initial setup overhead is worth it for anything running in production.

Checksums and hash verification: When you pull a package, verify its checksum against what the registry published. Most modern package managers do this automatically, but for CI pipelines that download binaries directly (like my team’s Go toolchain installation, or the Hugo release in this example), you need to handle this yourself.

    # Verify binary hash after download
    # (sha256sum -c expects "<hash>  <file>", separated by two spaces)
    SHA256SUM="abc123..."
    echo "${SHA256SUM}  hugo_extended_0.128.0_linux-amd64.tar.gz" | sha256sum -c
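If your pipeline step is a script rather than a shell one-liner, the same check is a few lines of standard-library Python. This is a sketch with hypothetical function names; the demo file below stands in for the downloaded archive, and the expected hash would normally come from the publisher’s release page, pinned in your repository.

```python
import hashlib
import hmac
import tempfile

def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large downloads don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def checksum_matches(path, expected_hex):
    """Compare the file's SHA-256 against the pinned value."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())

# Demo: a temporary file stands in for the downloaded archive.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"pretend this is a release tarball")
demo_path = tmp.name
pinned = hashlib.sha256(b"pretend this is a release tarball").hexdigest()

print(checksum_matches(demo_path, pinned))
print(checksum_matches(demo_path, "0" * 64))
```

Fail the build when the check returns False; a warning that nobody reads is not a control.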

The CI/CD pipeline is your first enforcement point and your highest-leverage investment. Every check you add to the pipeline runs for every commit, forever. The infrastructure as code practices that govern your build infrastructure itself also fall under the supply chain umbrella. If someone can modify your Terraform to point deployments at a malicious registry instead of your ECR repository, signing alone doesn’t help unless verification is enforced at deploy time.

Practical Implementation Roadmap

Here’s the sequence I recommend to teams starting this journey, ordered by impact-to-effort ratio:

Week 1: Enable SBOM generation in your CI pipeline. Syft or Trivy. Start scanning results. Don’t block deploys yet, just gather data.

Week 2-3: Triage the vulnerability scan results. You will find things. Don’t panic. Prioritize critical CVEs in actively exploited packages. Fix the obvious ones.

Week 4: Implement Sigstore/Cosign in CI. Sign all images built from main branch. This is low-effort with GitHub Actions.

Month 2: Deploy Kyverno in audit mode in production. Review audit logs. Start fixing unsigned images.

Month 3: Flip Kyverno to enforce for new services. Give existing services a 30-day grace period.

Month 4-6: Roll out enforce mode to all services. Implement SBOM attestation policies. Set up continuous monitoring with automated alerts for newly disclosed CVEs.

This isn’t a sprint, it’s a program. The payoff is knowing that when a new Log4Shell-level vulnerability drops, you can answer “are we affected?” in minutes instead of days.

The confidential computing capabilities now available in cloud providers add another layer here: for the most sensitive workloads, you can now verify not just what software is running but that the hardware environment itself is unmodified. Supply chain security from source code all the way through to the silicon.

Getting supply chain security right is one of the highest-leverage things a security team can do right now. The tooling has matured rapidly. The excuses for not doing it have expired.
