I was skeptical about WebAssembly in cloud infrastructure for a long time. It felt like a technology looking for a use case outside the browser. Speeding up compute-heavy code in the browser? Sure. Replace containers in Kubernetes? That seemed like a stretch.

Then I read the original WASM design goals, spent time with the WASI specification, and actually ran some benchmarks. The skepticism shifted. WebAssembly has specific properties that make it genuinely interesting for cloud-native workloads, not as a replacement for containers but as a different tool for different jobs.

Let me give you the honest assessment: what WASM actually is at the infrastructure level, where it’s legitimately better than containers, where it’s not, and what the production story looks like today.

What WebAssembly Actually Is

WebAssembly is a binary instruction format and execution environment specification. It defines a virtual instruction set architecture (ISA) that’s designed to be:

Portable: WASM bytecode runs on any platform with a WASM runtime, regardless of underlying CPU architecture (x86, ARM, RISC-V). Compile once, run anywhere, genuinely.

Safe: WASM execution is sandboxed. The module can only access memory it’s explicitly given. It can’t access the filesystem, network, or any system resource unless the host runtime explicitly provides a handle. By default, WASM modules have zero capabilities.

Fast: WASM can be compiled to native machine code with an AOT (ahead-of-time) or JIT compiler. Performance on compute-intensive workloads is typically within 5-30% of native code.

Deterministic: WASM execution is largely deterministic by design, which matters for security analysis and reproducibility. The main exceptions are NaN bit patterns, threads, and whatever the host functions return.

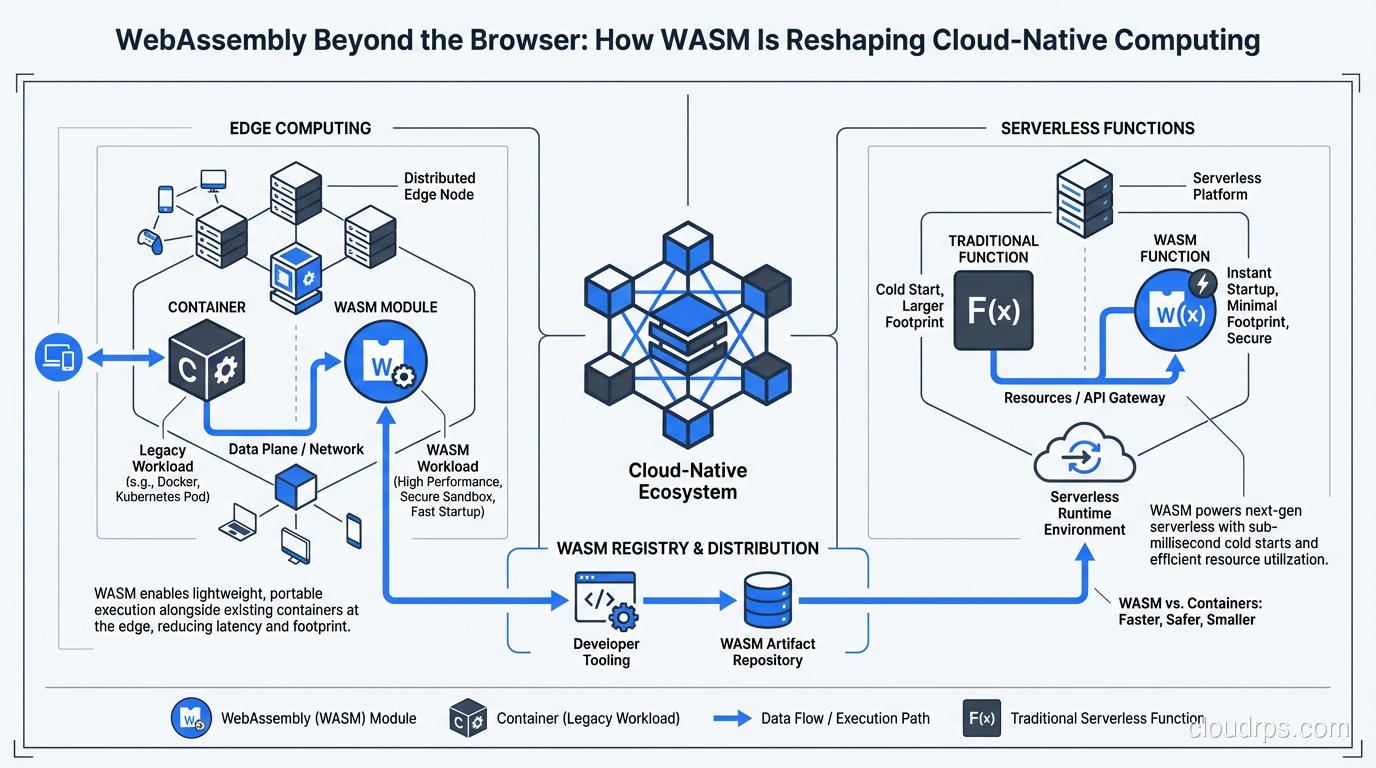

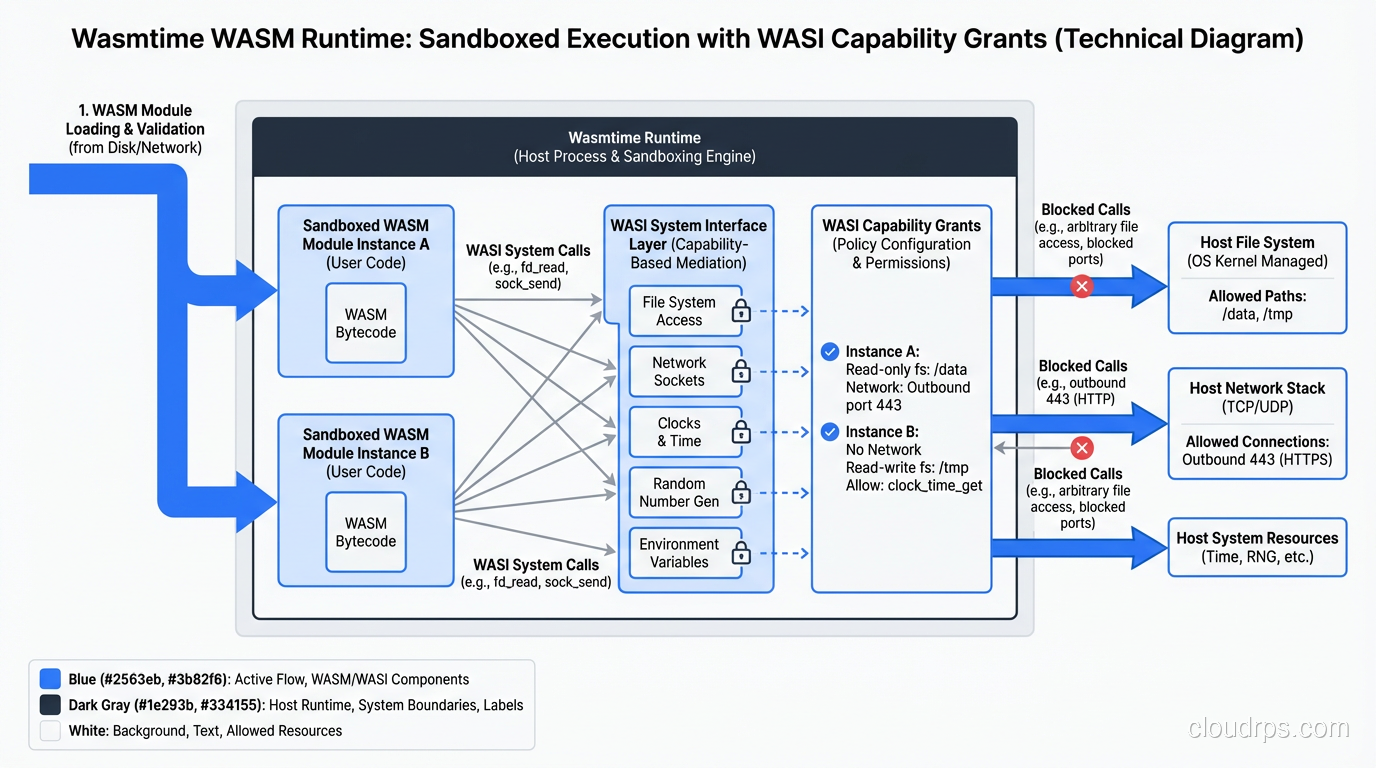

The sandboxing model is what sets WASM apart from containers. A Linux container is fundamentally a namespaced process on the host kernel. Container escapes are possible (though rare) because the container shares the host kernel. A WASM module executes in a completely isolated virtual environment. The module can’t make arbitrary system calls. It can only interact with the outside world through explicitly granted capabilities.

This is the WASI (WebAssembly System Interface) model: capabilities are granted explicitly and granularly. Instead of “this container has read access to /etc,” you grant “this WASM module has read access to this specific file descriptor.” The security model is closer to capability-based security or zero trust principles than to traditional container isolation.
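To make the handle-based model concrete, here is a minimal host-side sketch in plain Rust. It is not how Wasmtime or WasmEdge implement it: the `Preopens` type, its methods, and the `/srv/app/data` path are hypothetical names invented for illustration, and rejecting every `..` segment is stricter than what real runtimes do.

```rust
use std::collections::HashMap;
use std::path::{Component, Path, PathBuf};

/// Hypothetical host-side table of directories granted to one module.
/// The guest only ever sees the integer handle, never the host path.
struct Preopens {
    dirs: HashMap<u32, PathBuf>,
    next_handle: u32,
}

impl Preopens {
    fn new() -> Self {
        // Handles 0-2 are conventionally stdin, stdout, and stderr.
        Preopens { dirs: HashMap::new(), next_handle: 3 }
    }

    /// Grant access to one host directory and return the handle for it.
    fn grant(&mut self, host_dir: &str) -> u32 {
        let handle = self.next_handle;
        self.dirs.insert(handle, PathBuf::from(host_dir));
        self.next_handle += 1;
        handle
    }

    /// Resolve a guest-supplied relative path against a granted handle.
    /// Returns None for unknown handles, absolute paths, and `..` segments.
    fn resolve(&self, handle: u32, relative: &str) -> Option<PathBuf> {
        let base = self.dirs.get(&handle)?;
        let mut resolved = base.clone();
        for part in Path::new(relative).components() {
            match part {
                Component::Normal(name) => resolved.push(name),
                Component::CurDir => {}
                // RootDir, ParentDir, and Prefix could leave the granted directory.
                _ => return None,
            }
        }
        Some(resolved)
    }
}

fn main() {
    let mut caps = Preopens::new();
    let data = caps.grant("/srv/app/data");
    // Inside the granted directory: resolves to a host path.
    println!("{:?}", caps.resolve(data, "config/settings.json"));
    // Attempts to climb out, or to use a handle that was never granted, are refused.
    println!("{:?}", caps.resolve(data, "../secrets.txt"));
    println!("{:?}", caps.resolve(99, "config/settings.json"));
}
```

The point of the design is that the module has no way to name a file it was not handed: every path is relative to a handle, and a handle exists only if the host created it.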

WASM Runtime Landscape

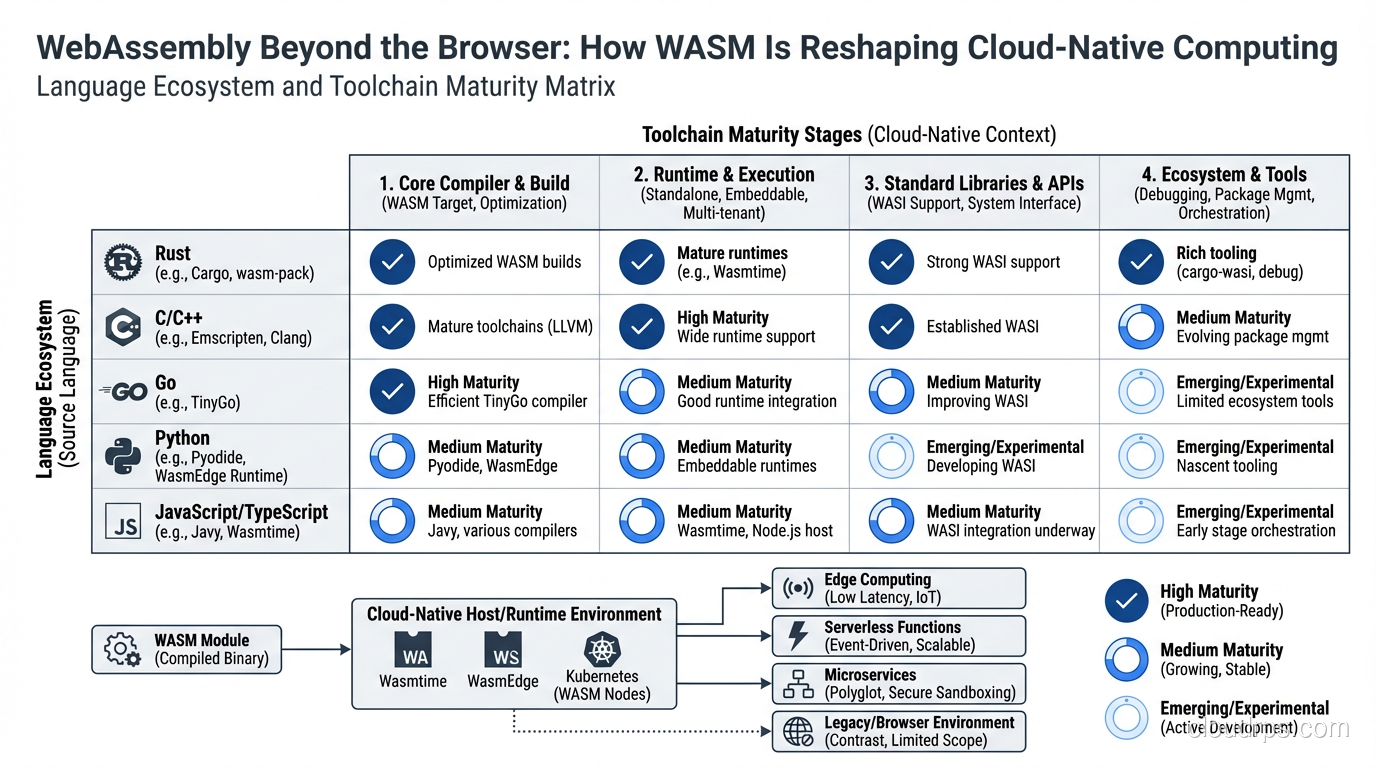

You need a runtime to execute WASM bytecode. In the browser, that’s the JavaScript engine (V8, SpiderMonkey). Outside the browser, the primary runtimes are:

Wasmtime: Developed by the Bytecode Alliance, Wasmtime is the reference implementation for WASI. Written in Rust. Fast compilation, good startup times. This is what most infrastructure tooling is built on.

WasmEdge: A project hosted by the Cloud Native Computing Foundation. Designed specifically for cloud and edge workloads. Has extensions for networking, WASI-NN (neural network inference), and cloud-native use cases. Used by Docker+WASM integration and Kubernetes WASM node support.

Wasmer: Commercial runtime with a large ecosystem. Better Windows support than alternatives. Has a “Wasmer Cloud” platform for deploying WASM.

WAVM: Uses LLVM for compilation. Excellent peak performance but slower cold start than Wasmtime.

For cloud-native use cases, Wasmtime and WasmEdge are the dominant choices. If you’re deploying on Kubernetes with WASM node support, you’ll likely interact with WasmEdge through its containerd integration.

Where WASM Beats Containers: Cold Start Time

The most compelling cloud-native use case for WASM is serverless/edge computing with cold start sensitivity.

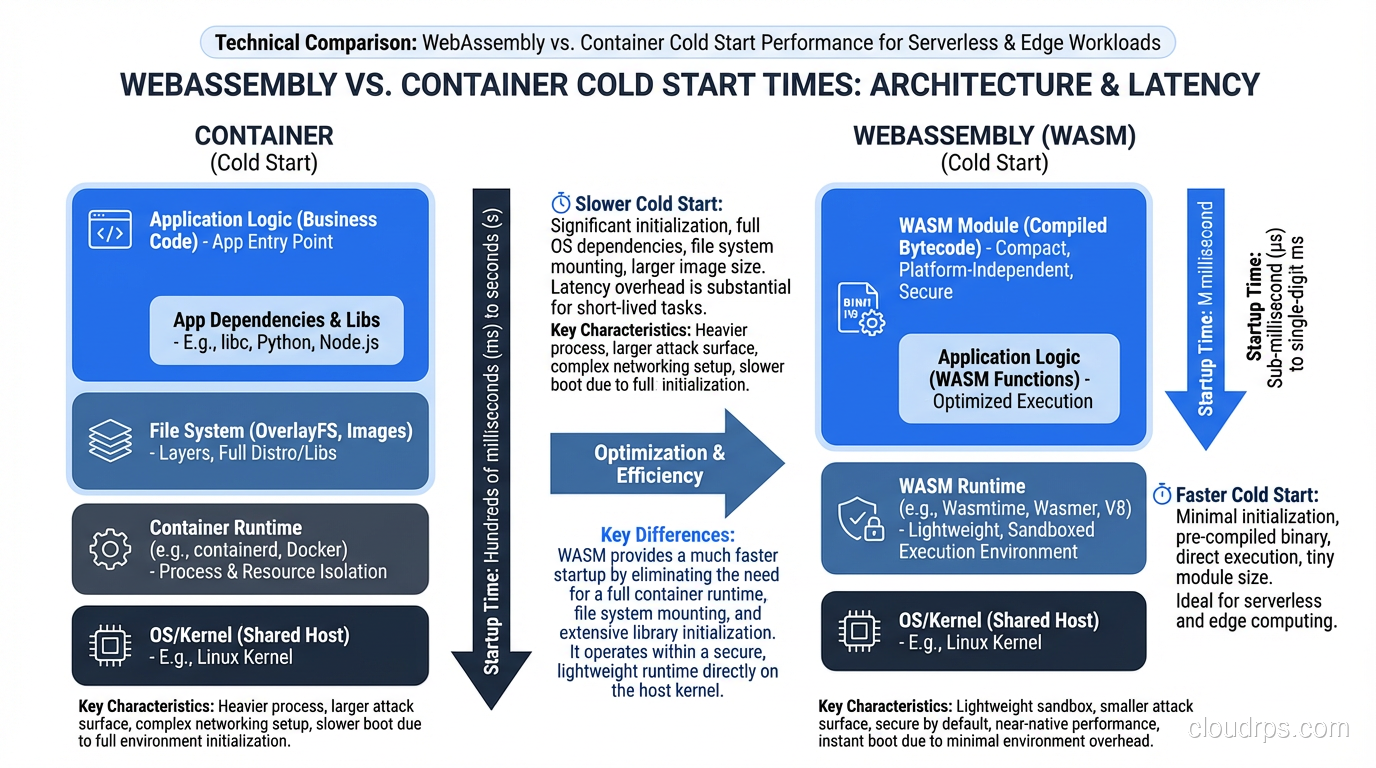

A Docker container cold start involves: pulling the image (network I/O), extracting layers (disk I/O), creating namespaces (kernel operations), starting the init process, running application init code. Even with cached images, a minimal Node.js container cold starts in 200-500ms. A Java Spring Boot container might take 2-5 seconds.

A WASM module cold start: load bytecode (fast, modules are small), compile to native code (fast, AOT or JIT), execute first function. For a well-written WASM module, cold starts in 1-5ms are achievable.

That’s roughly a 100x improvement in cold start latency, taking the midpoints of those ranges. For edge computing scenarios where requests arrive unpredictably and you can’t keep instances warm, this matters enormously.
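The 100x figure is a round number, so it helps to work out the actual spread. The sketch below only divides the ranges quoted above (200-500ms for the Node.js container, 1-5ms for the WASM module); the `speedup` helper is a name made up for this example, and the inputs are the article's estimates, not measurements.

```rust
/// Ratio of container cold start to WASM cold start, both in milliseconds.
fn speedup(container_ms: f64, wasm_ms: f64) -> f64 {
    container_ms / wasm_ms
}

fn main() {
    // Fastest container (200 ms) against the slowest module (5 ms).
    let low = speedup(200.0, 5.0);
    // Slowest container (500 ms) against the fastest module (1 ms).
    let high = speedup(500.0, 1.0);
    println!("speedup range: {low}x to {high}x");
}
```

Depending on which ends of the ranges you compare, the ratio lands anywhere between 40x and 500x, so "two orders of magnitude" is a fair summary rather than a precise measurement.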

Cloudflare Workers, one of the most successful edge computing platforms, runs JavaScript and WASM inside V8 isolates rather than containers. Part of why Workers can handle 50ms response time budgets at the edge is that execution model: isolates and WASM modules load fast and share a single runtime process safely, unlike containers, which each carry full process-isolation overhead.

Fermyon’s Spin is the most mature open-source framework for building WASM cloud applications:

```toml
# spin.toml
spin_manifest_version = 2

[application]
name = "my-api"
version = "1.0.0"

[[trigger.http]]
route = "/api/..."
component = "api-handler"

[component.api-handler]
source = "target/wasm32-wasi/release/api_handler.wasm"
allowed_outbound_hosts = ["https://api.example.com"]

[component.api-handler.build]
command = "cargo build --target wasm32-wasi --release"
```

The handler code in Rust:

```rust
use spin_sdk::http::{IntoResponse, Request, Response};
use spin_sdk::http_component;

// Entry point that Spin invokes for every request routed to this component.
#[http_component]
fn handle_request(req: Request) -> anyhow::Result<impl IntoResponse> {
    // The Spin SDK's Request exposes the path directly; uri() returns a plain &str.
    let body = format!("Hello from WASM: {}", req.path());
    Ok(Response::builder()
        .status(200)
        .header("Content-Type", "text/plain")
        .body(body)
        .build())
}
```

Compile with spin build, run with spin up. That’s it. The output is a single .wasm file that runs identically on your laptop, a cloud server, or an edge node. No Dockerfile, no image layers, no container runtime. The module is typically 1-5MB.
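One line in the manifest above deserves a closer look: `allowed_outbound_hosts` is a deny-by-default allowlist, so the component can only call hosts that are listed. The sketch below models that idea in plain Rust. It is a simplified illustration, not Spin's actual matching logic: `scheme_and_host` and `is_outbound_allowed` are hypothetical helpers, and ports and wildcards are deliberately ignored.

```rust
/// Split a URL into (scheme, host) without any external crates.
/// Deliberately simplistic: ignores ports, userinfo, and wildcards.
fn scheme_and_host(url: &str) -> Option<(&str, &str)> {
    let (scheme, rest) = url.split_once("://")?;
    let host = rest.split(|c: char| c == '/' || c == '?' || c == '#').next()?;
    if scheme.is_empty() || host.is_empty() {
        return None;
    }
    Some((scheme, host))
}

/// Deny by default: a request is allowed only if its scheme and host
/// exactly match one entry in the allowlist.
fn is_outbound_allowed(allowed: &[&str], url: &str) -> bool {
    match scheme_and_host(url) {
        Some(target) => allowed
            .iter()
            .any(|entry| scheme_and_host(entry) == Some(target)),
        None => false,
    }
}

fn main() {
    // Same single entry as the manifest above.
    let allowed = ["https://api.example.com"];
    let attempts = [
        "https://api.example.com/v1/users",
        "https://evil.example.net/",
        "http://api.example.com/",
    ];
    for url in attempts {
        println!("{url} -> {}", is_outbound_allowed(&allowed, url));
    }
}
```

An empty list means no outbound calls at all, which is the same zero-capability default the sandbox applies to files and sockets.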

WASM on Kubernetes

The Kubernetes integration story for WASM has matured significantly. The containerd runtime can be configured to run WASM workloads using runwasi, a shim that allows Kubernetes to schedule WASM modules the same way it schedules containers.

The key field is runtimeClassName in the Pod spec:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: wasm-workload
spec:
  runtimeClassName: wasmtime # or wasmedge
  containers:
    - name: handler
      image: ghcr.io/myorg/my-handler:latest
      # The "image" here is an OCI artifact containing a .wasm file,
      # not a traditional container image
```

This requires configuring the RuntimeClass in your cluster and installing the appropriate WASM runtime on your nodes. Cloud providers are adding first-class WASM node support: AKS has WASM nodepool support in preview, and several distributions bundle runwasi.

The OCI packaging is clever: WASM modules are packaged as OCI artifacts (they look like container images to the registry) but don’t contain a full filesystem, just the WASM bytecode. This means you can use the same image registries, the same image signing infrastructure, and the same pull policies as regular containers.

The resource efficiency benefits in Kubernetes are real. A Kubernetes node running WASM workloads can run significantly more concurrent functions than the same node running containers, because WASM modules share a runtime process rather than each needing isolated OS-level processes. An experiment from Fermyon showed 10,000 WASM instances running on a single node with modest resource usage, far more than the same node could host as containers.

WASM workloads also complement the edge computing architecture story particularly well: you can run the same WASM module in the cloud, at the edge, and on IoT devices without modification, because WASM abstracts the underlying hardware.

WASM vs Containers: When to Use Each

Let me be direct about the tradeoffs, because the WASM hype sometimes outpaces the reality.

Use WASM when:

- Cold start latency matters (edge functions, unpredictable traffic patterns)

- Security isolation beyond container namespaces is required (running untrusted code, multi-tenant plugins)

- Binary portability across architectures is critical (write once, run on x86, ARM, RISC-V)

- Workload is CPU-bound and stateless (request processing, transformation, computation)

- Small binary size matters (IoT/edge deployments with limited storage)

Use containers when:

- You’re running a language ecosystem that doesn’t compile cleanly to WASM (JVM, CPython, .NET have varying WASM support quality)

- Your workload uses system calls or hardware access not yet covered by WASI (GPU, specialized hardware, raw sockets)

- You need the full Linux environment (package managers, arbitrary filesystem access, cron jobs, etc.)

- Your team is familiar with containers and the operational overhead of WASM is not justified by the benefits

- You have stateful workloads that need persistent filesystem access with complex patterns

The WASI specification (currently WASI Preview 2, heading toward WASI 1.0) is the evolving interface between WASM modules and the host system. It currently defines stable APIs for: filesystem access (controlled), networking (TCP and UDP sockets, HTTP), random number generation, and clocks. WASI does not yet have stable APIs for: GPU access, raw socket creation, cryptographic operations, most hardware devices.
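From the guest's side, these interfaces are mostly invisible: ordinary standard-library calls are lowered to WASI imports when you build for a WASI target. The sketch below uses only Rust's standard library; `input.txt` and both helper names are placeholders chosen for this example.

```rust
use std::fs;
use std::time::{SystemTime, UNIX_EPOCH};

/// Seconds since the Unix epoch. On a WASI target this goes through
/// the host's clock interface rather than a direct system call.
fn unix_seconds() -> u64 {
    SystemTime::now()
        .duration_since(UNIX_EPOCH)
        .map(|d| d.as_secs())
        .unwrap_or(0)
}

/// Try to read a file and report its size. On a WASI target this only
/// succeeds if the host pre-opened a directory containing `path`.
fn try_read(path: &str) -> Result<usize, String> {
    fs::read(path)
        .map(|bytes| bytes.len())
        .map_err(|err| format!("cannot read {path}: {err}"))
}

fn main() {
    println!("unix time: {}", unix_seconds());
    match try_read("input.txt") {
        Ok(len) => println!("read {len} bytes"),
        Err(msg) => println!("{msg}"),
    }
}
```

Built with the same `cargo build --target wasm32-wasi --release` command used earlier and run under the Wasmtime CLI, the read should fail unless a directory is granted, for example with `--dir=.`. Run natively, the same source uses the operating system directly, which is what makes the capability boundary easy to overlook while developing.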

This means WASM is not a universal container replacement today. It’s a better tool for specific jobs.

The Multi-Tenant Plugin Use Case

One use case where WASM is genuinely revolutionary: running untrusted code from users in a multi-tenant platform.

If you’re building a SaaS platform where customers can deploy their own code (a Shopify app, a Figma plugin, a Cloudflare Worker), you need strong isolation between tenants. Traditional approaches: separate containers (expensive), V8 isolates (JavaScript only), separate VMs (very expensive). WASM gives you strong isolation with minimal overhead in any language that compiles to WASM.

Envoy Proxy uses WASM for extensibility. Users can write custom filters in any language that compiles to WASM, load them dynamically into the proxy, and they run in an isolated WASM sandbox. A buggy or malicious filter can’t access other tenants’ traffic or the host filesystem.

This is why the service mesh world is paying attention to WASM: plugin extensibility without needing to recompile the proxy or worry about memory safety issues from untrusted code.

The security model here solves a different problem from confidential computing (which protects data at the hardware level) and is more accessible: WASM sandboxing is a software guarantee enforced by the runtime.

Production Readiness Assessment

Where is WASM actually production-ready in cloud infrastructure today?

Mature and production-ready: Edge functions on Cloudflare Workers (JavaScript/WASM), Fastly Compute@Edge (Rust/WASM), and similar CDN edge platforms. These platforms have been running WASM at massive scale for years.

Approaching production-ready: Spin-based microservices for simple HTTP workloads, Envoy WASM filters, single-purpose WASM functions in Kubernetes with runwasi.

Early/experimental: Complex stateful WASM applications, WASM on heterogeneous compute (GPU workloads), full language runtime ports (JVM to WASM).

The toolchain maturity varies by language: Rust compiles to WASM excellently, with full WASI support. C and C++ compile well. Go has experimental WASM support (GOOS=wasip1). Python, Ruby, and JVM languages have partial/experimental support with significant limitations.

For teams evaluating WASM: start with edge computing or serverless use cases where cold start matters. Rust is the best language choice for new WASM development if your team can bear the learning curve. If you’re already using JavaScript/TypeScript, the browser-to-edge portability story is genuinely compelling.

The serverless evolution is being pushed forward by WASM: the millisecond cold starts make serverless viable for latency-sensitive use cases that Lambda/Cloud Run couldn’t handle before. The convergence of WASM, WASI, and cloud-native tooling is making the “compile once, run anywhere” promise closer to real than it’s ever been.

WebAssembly won’t replace containers in the next two years. But for specific workloads, especially at the edge and in multi-tenant extension platforms, it’s already the better choice. Keep it in your toolbox.
