Early in my career I implemented real-time notifications in a web application using short polling: the browser sent an HTTP request every five seconds asking “any new notifications?” The server queried the database and returned the results (usually empty), and the browser waited and asked again. It worked. It was also about the least efficient solution imaginable.

The browser was making 720 requests per hour per user, and the database was answering 720 queries per hour per user. With a hundred concurrent users, that came to seventy-two thousand database queries per hour to deliver a handful of notifications. When I refactored to long polling, and later to Server-Sent Events, I watched the database load drop by 95% and notification latency fall from up to five seconds to under a second. Choosing the right real-time pattern is not a minor performance tweak; it can change the system’s resource consumption by an order of magnitude or more.

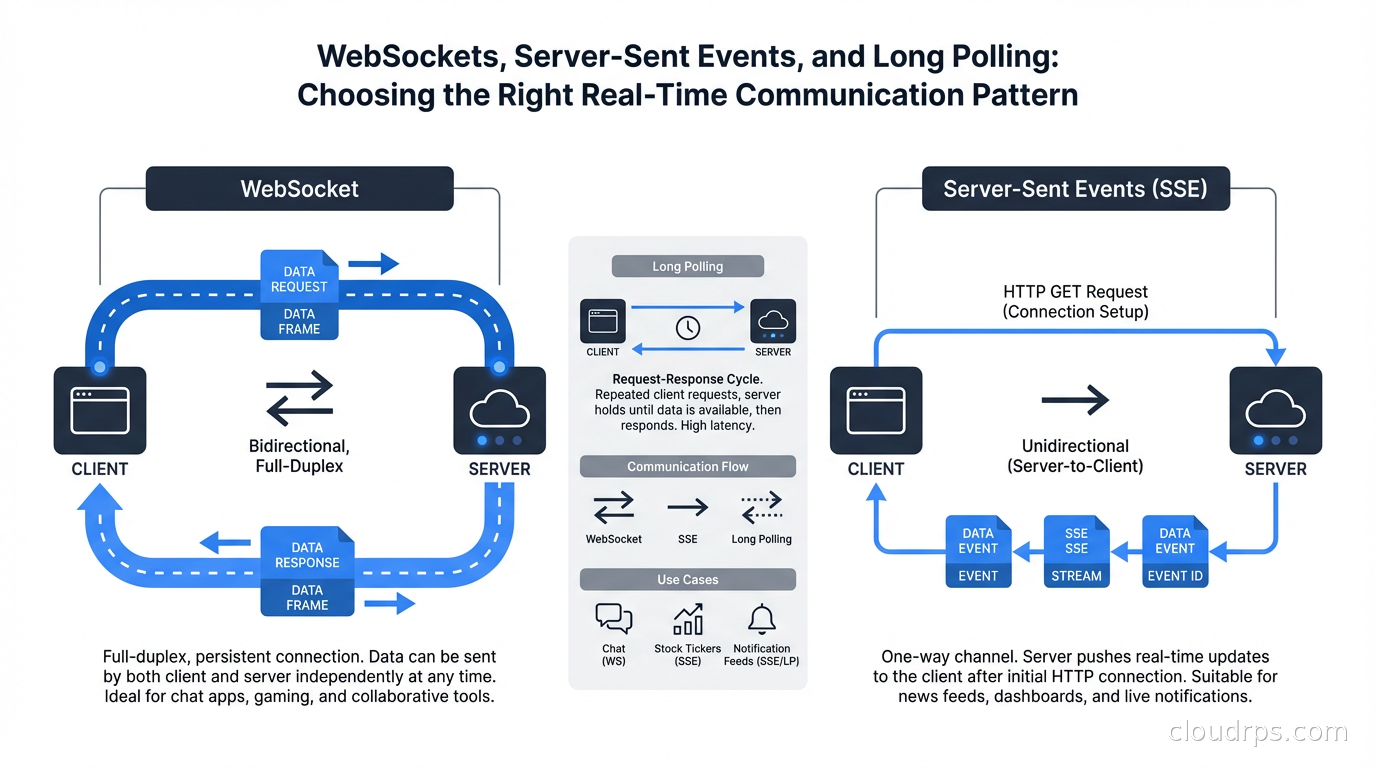

This article covers the three main patterns for server-to-client push: long polling, Server-Sent Events, and WebSockets. I focus on the architectural trade-offs and when to use each, rather than on implementation syntax, which varies by framework and language.

The Baseline: Short Polling (What Not to Do)

Short polling is worth describing first because it is the baseline the other patterns improve on. The client sends HTTP requests on a timer, regardless of whether the server has new data. It is simple to implement (it is just regular HTTP requests), works with any server, and requires no special protocol support.

The problems: every request has HTTP overhead (headers, connection setup if keep-alive is not active). Most requests return empty responses. The latency is bounded by the polling interval, not by when the event actually occurs. At any meaningful scale, short polling creates unnecessary load on every layer of your stack.
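A quick cost model makes the waste concrete. This is a back-of-the-envelope sketch rather than a benchmark: `shortPollingCost` is a hypothetical helper, it assumes one database query per poll, and the average-latency figure assumes events arrive at uniformly random times.

```javascript
// Back-of-the-envelope cost model for short polling (hypothetical helper).
// Assumes one HTTP request and one database query per poll, and events
// arriving at uniformly random times.
function shortPollingCost({ intervalSeconds, users, eventsPerUserPerHour }) {
  const requestsPerHour = (3600 / intervalSeconds) * users;
  const eventsPerHour = eventsPerUserPerHour * users;
  return {
    requestsPerHour,
    // A response is non-empty only if at least one event arrived since the
    // previous poll, so at most `eventsPerHour` responses carry data.
    emptyResponsesAtLeast: Math.max(0, requestsPerHour - eventsPerHour),
    // An event waits for the next poll: up to a full interval in the worst
    // case, half an interval on average.
    worstCaseLatencySeconds: intervalSeconds,
    averageLatencySeconds: intervalSeconds / 2,
  };
}

const cost = shortPollingCost({ intervalSeconds: 5, users: 100, eventsPerUserPerHour: 2 });
console.log(cost.requestsPerHour); // 72000
console.log(cost.emptyResponsesAtLeast); // 71800
```

With the five-second interval and hundred users from the introduction, `requestsPerHour` reproduces the 72,000 figure; at an assumed two notifications per user per hour, at least 71,800 of those requests come back empty.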

Use short polling only for low-frequency checks (every few minutes) or for quick prototypes where you need something working in an hour and will replace it later. Do not use it for user-facing real-time features.

Long Polling: HTTP With Held Responses

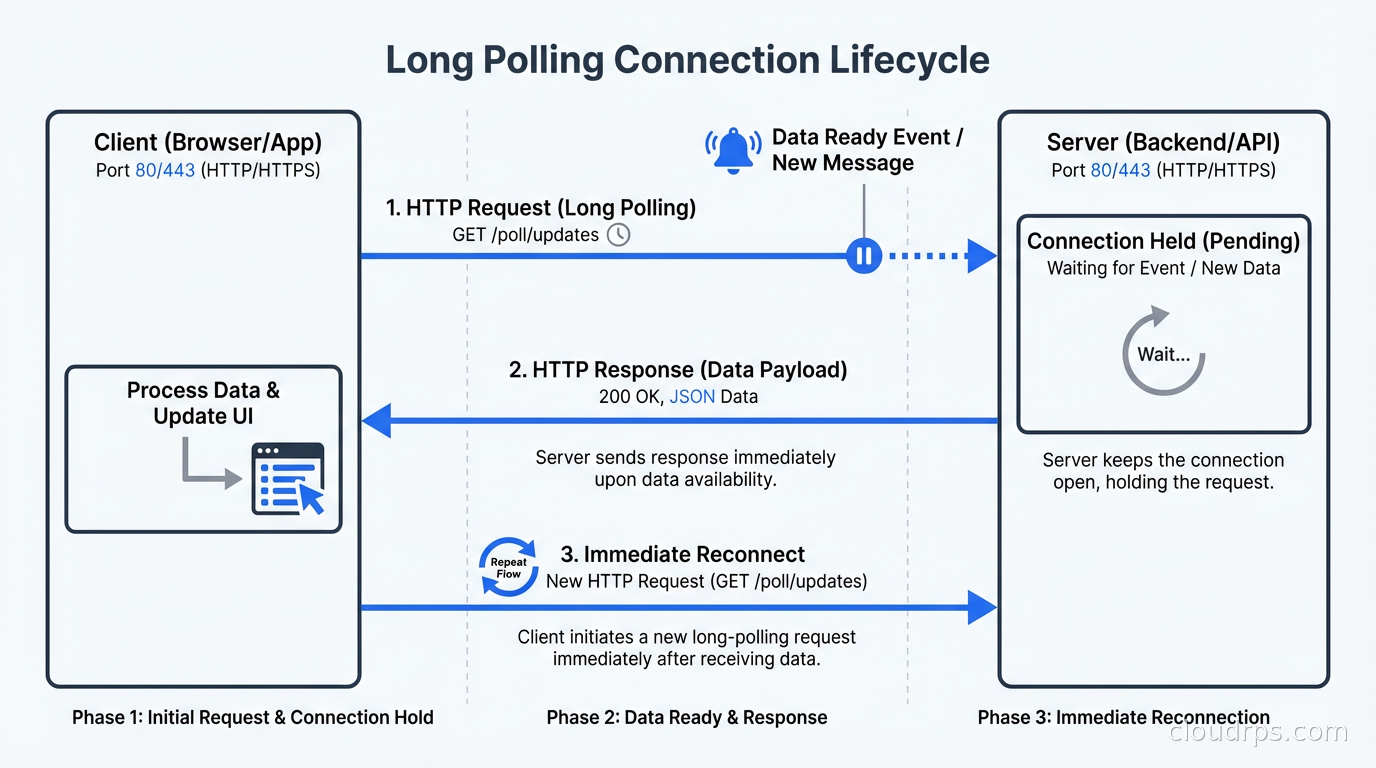

Long polling is the first real improvement over short polling. The client sends an HTTP request to the server, but instead of responding immediately, the server holds the connection open until it has new data to send or a timeout expires. When the server has data, it responds. The client immediately sends a new request, establishing the next held connection.
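The server side of long polling boils down to a set of held requests that are released either by a new message or by a timeout. The sketch below is a minimal, transport-agnostic version; `LongPollHub`, its method names, and the 30-second default timeout are illustrative assumptions, not any particular framework's API.

```javascript
// Minimal long-polling hub (sketch). Each held request is represented by a
// `respond(messages)` callback, so the hub does not depend on an HTTP library.
class LongPollHub {
  constructor() {
    this.waiters = new Set();
  }

  // Hold a request. `respond` is called exactly once: with the published
  // message, or with [] when the timeout expires (the client then re-polls).
  wait(respond, timeoutMs = 30000) {
    const waiter = {
      respond,
      timer: setTimeout(() => {
        this.waiters.delete(waiter);
        respond([]);
      }, timeoutMs),
    };
    this.waiters.add(waiter);
    // Cancel function, e.g. for when the client disconnects first.
    return () => {
      clearTimeout(waiter.timer);
      this.waiters.delete(waiter);
    };
  }

  // Release every held request with the new message.
  publish(message) {
    const waiting = [...this.waiters];
    this.waiters.clear();
    for (const w of waiting) {
      clearTimeout(w.timer);
      w.respond([message]);
    }
  }
}
```

In a Node `http` handler you would call `hub.wait` with a callback that writes the JSON array (or a 204 when it is empty) and pass the returned cancel function to `res.on('close', ...)` so abandoned requests are dropped. Messages published while a client is between requests are simply missed in this sketch; a production version would keep a per-client buffer or cursor.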

From the client’s perspective, there is always a pending request. When the server has data, the response arrives almost immediately, bounded by network latency rather than by a polling interval, so latency is dramatically lower than with short polling.

The server holds open HTTP connections, which consumes resources. Traditional thread-per-connection servers (Apache with the prefork MPM, older Java application servers) handled this poorly: each held connection consumed a thread, and threads are expensive. Servers built on asynchronous I/O or lightweight threads (Node.js, nginx, Go with goroutines, Java with virtual threads) handle large numbers of held connections efficiently because an idle connection does not tie up an operating-system thread.

Long polling also has natural retry and error-handling semantics. If the connection drops, the client simply sends a new request; recovery is just another HTTP request. Proxies, firewalls, and load balancers all understand HTTP, so long polling works in environments where WebSockets might be blocked.
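On the client, the retry behavior is an ordinary loop. This sketch assumes a hypothetical endpoint that answers 200 with a JSON array of messages, or 204 when the server's hold timeout expires; the backoff constants are arbitrary. `fetchFn` is injectable only so the loop can be exercised without a server.

```javascript
// Long-polling client loop (sketch). Re-polls immediately after every
// response, and backs off exponentially only after errors.
async function longPoll(url, onMessages, { signal, fetchFn = globalThis.fetch } = {}) {
  let failures = 0;
  while (!signal?.aborted) {
    try {
      const res = await fetchFn(url, { signal });
      if (res.status === 200) {
        onMessages(await res.json());
      } else if (res.status !== 204) {
        throw new Error(`unexpected status ${res.status}`);
      }
      failures = 0; // data or a clean timeout: ask again right away
    } catch (err) {
      if (signal?.aborted) break;
      failures += 1;
      // Exponential backoff capped at 30 s, so a failing server is not hammered.
      const delayMs = Math.min(30000, 500 * 2 ** failures);
      await new Promise((resolve) => setTimeout(resolve, delayMs));
    }
  }
}
```

Pass an `AbortController` signal and call `abort()` to stop polling, for example when the user leaves the page.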

The overhead per message is higher than with WebSockets or SSE because every delivered message costs a new HTTP request: a full set of headers each time, a new TCP and TLS handshake if the connection is not reused, or at least a new HTTP/2 stream. For high-frequency delivery this overhead adds up; for low-frequency delivery (a message every few seconds or minutes), it is negligible.

Use long polling when: You need broad compatibility, your infrastructure blocks WebSockets, you have low message frequency, or you want the simplicity of HTTP semantics for retry handling.

Server-Sent Events: One-Way Push Over HTTP

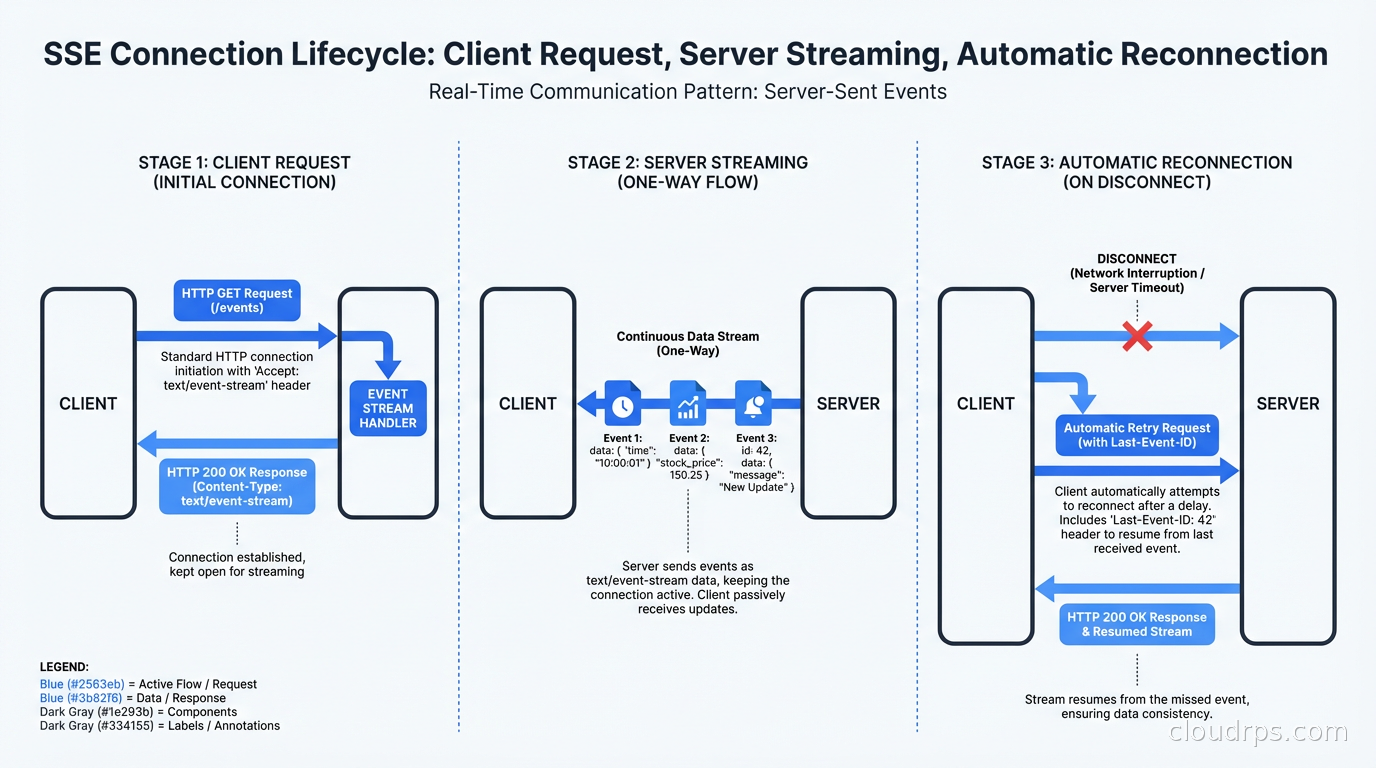

Server-Sent Events is an HTTP-based protocol specifically designed for server-to-client streaming. The client sends a standard HTTP GET request with an Accept: text/event-stream header. The server responds with Content-Type: text/event-stream and keeps the response body open, sending data whenever new events are available.
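On the server, an SSE endpoint is just a response that is never ended. This is a minimal sketch for Node's built-in `http` module; `openEventStream` is a hypothetical helper, and it sends only unnamed `data:` events without ids.

```javascript
// Minimal SSE endpoint helper (sketch) for a Node http ServerResponse.
function openEventStream(res) {
  res.writeHead(200, {
    'Content-Type': 'text/event-stream',
    'Cache-Control': 'no-cache', // keep intermediaries from caching the stream
  });
  let open = true;
  res.on('close', () => { open = false; });
  return {
    // The response stays open; each call appends one event to the body.
    // JSON.stringify escapes newlines, so each payload fits on one data: line.
    send(payload) {
      if (open) res.write(`data: ${JSON.stringify(payload)}\n\n`);
      return open;
    },
  };
}
```

Inside `http.createServer((req, res) => { ... })` you would call `openEventStream(res)` for requests to your event path and keep the returned object wherever your event source can reach it. Tracking `'close'` lets you drop streams whose clients have gone away instead of writing into dead connections.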

The wire format is simple text: data: {"message": "hello"}\n\n, where the blank line ends the event. Events can also carry an optional id field and an optional event field that names the event type. The id enables resumption: if the connection drops, the browser automatically reconnects and sends a Last-Event-ID header, so the server knows where the client left off and can replay the events it missed.
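The framing rules and the Last-Event-ID replay fit in a few lines. `formatEvent` follows the text format described above; `ReplayBuffer` is a hypothetical in-memory helper with an arbitrary capacity, standing in for whatever durable event log a real server would replay from.

```javascript
// Serialize one SSE event. Multi-line data becomes several `data:` lines,
// which the browser rejoins with "\n". The trailing blank line ends the event.
function formatEvent({ id, event, data }) {
  let out = '';
  if (id !== undefined) out += `id: ${id}\n`;
  if (event !== undefined) out += `event: ${event}\n`;
  for (const line of String(data).split(/\r\n|\r|\n/)) out += `data: ${line}\n`;
  return out + '\n';
}

// Keeps the most recent events so a reconnecting client can catch up.
class ReplayBuffer {
  constructor(maxEvents = 1000) {
    this.maxEvents = maxEvents;
    this.events = [];
    this.nextId = 1;
  }

  push(event, data) {
    const entry = { id: this.nextId++, event, data };
    this.events.push(entry);
    if (this.events.length > this.maxEvents) this.events.shift();
    return entry;
  }

  // Events newer than the Last-Event-ID header value.
  since(lastEventId) {
    // No header (or an unparseable one): treat it as a fresh connection.
    if (lastEventId === undefined || lastEventId === null || lastEventId === '') return [];
    const last = Number(lastEventId);
    if (!Number.isInteger(last)) return [];
    return this.events.filter((e) => e.id > last);
  }
}
```

On each new connection, a handler would write `formatEvent(e)` for every `e` in `buffer.since(req.headers['last-event-id'])` before streaming live events (Node lowercases incoming header names). If the requested id has already been evicted, the client silently misses events, so a real system needs a way to tell it to resynchronize.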

The browser’s built-in EventSource API handles SSE with automatic reconnection, event parsing, and Last-Event-ID tracking, which makes the client side particularly simple:

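```javascript
const source = new EventSource('/events');

// Handles events that have no `event:` field (or `event: message`).
source.onmessage = (event) => {
  const data = JSON.parse(event.data);
  updateUI(data); // placeholder for your application's rendering code
};

source.onerror = () => {
  // EventSource retries dropped connections on its own. It stops for good
  // (readyState becomes CLOSED) when, for example, the server answers with
  // a non-200 status or a Content-Type other than text/event-stream.
  if (source.readyState === EventSource.CLOSED) {
    console.warn('Event stream closed; no further reconnection attempts');
  }
};
```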

SSE is one-directional: server to client. The client cannot send data over the SSE connection. For use cases where the client only needs to receive server-sent updates (notifications, live feed updates, progress tracking, dashboard metrics), this is fine. The client sends commands or updates via regular HTTP requests, and SSE delivers the results.

One important limitation: over HTTP/1.1, browsers open at most six concurrent connections per origin (a browser limit, not part of the protocol), and every open SSE stream occupies one of them, across all tabs. A user with the same site open in several tabs can exhaust the limit, after which further requests to that origin stall. HTTP/2 multiplexes many SSE streams over a single connection and largely avoids the problem, so make sure your infrastructure serves SSE-heavy applications over HTTP/2.

SSE works naturally over HTTPS. Because it is standard HTTP, your existing TLS termination, CDNs, and reverse proxies usually handle SSE with little or no change; the common exceptions are proxies that buffer responses and idle timeouts that close quiet connections. This is a significant operational advantage over WebSockets.

Use SSE when: Your communication is primarily server-to-client, you want browser-native support with automatic reconnection, you need simple infrastructure compatibility, or you are building dashboards, live feeds, notification systems, or progress indicators.

WebSockets: Full-Duplex Binary or Text Frames

WebSockets provide a persistent, full-duplex connection between client and server over a single TCP connection. Unlike HTTP, WebSocket connections are truly bidirectional: either side can send messages at any time without waiting for a request from the other side.

The WebSocket handshake starts as an HTTP/1.1 Upgrade request. The server responds with 101 Switching Protocols, and the connection switches from HTTP to the WebSocket protocol. After that, both sides exchange lightweight frames: a small server-to-client message carries as little as two bytes of framing overhead (client-to-server frames add a four-byte masking key), compared with hundreds of bytes of HTTP headers per request.
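The 101 response is not a blind acknowledgment: RFC 6455 requires the server to hash the client's Sec-WebSocket-Key together with a fixed GUID and return the result as Sec-WebSocket-Accept. The sketch below uses Node's `crypto` module and checks itself against the example key from the RFC; in practice a library such as `ws` performs this step for you.

```javascript
const crypto = require('node:crypto');

// Fixed GUID defined by RFC 6455 for computing Sec-WebSocket-Accept.
const WEBSOCKET_GUID = '258EAFA5-E914-47DA-95CA-C5AB0DC85B11';

// base64(SHA-1(key + GUID)) proves the server understood the upgrade request.
function acceptKey(secWebSocketKey) {
  return crypto.createHash('sha1').update(secWebSocketKey + WEBSOCKET_GUID).digest('base64');
}

// Response head for an upgrade request that has already been validated.
function handshakeResponse(secWebSocketKey) {
  return [
    'HTTP/1.1 101 Switching Protocols',
    'Upgrade: websocket',
    'Connection: Upgrade',
    `Sec-WebSocket-Accept: ${acceptKey(secWebSocketKey)}`,
    '',
    '', // the head ends with an empty line (CRLF CRLF)
  ].join('\r\n');
}

console.log(acceptKey('dGhlIHNhbXBsZSBub25jZQ==')); // s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

In a hand-rolled Node server you would write `handshakeResponse(req.headers['sec-websocket-key'])` to the socket passed to the `server.on('upgrade', ...)` handler, after validating the request.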

The frame-based binary protocol enables efficient delivery of any content type: JSON text, binary data, images, compressed data. The WebSocket protocol itself handles framing, fragmentation of large messages, ping/pong keepalives, and graceful closure. Application-level protocols (your JSON message schema, protobuf definitions, etc.) are layered above WebSocket.
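To see how small the framing overhead is, here is a sketch of encoding a single unfragmented server-to-client frame following RFC 6455. It covers only the sending side and deliberately omits masking (required for client-to-server frames), fragmentation, and parsing.

```javascript
// Encode one unmasked, unfragmented server-to-client frame (RFC 6455).
// Opcodes: 0x1 text, 0x2 binary, 0x8 close, 0x9 ping, 0xA pong.
// Control frames must carry at most 125 bytes; that is not checked here.
function encodeFrame(payload, opcode = 0x1) {
  const body = Buffer.isBuffer(payload) ? payload : Buffer.from(String(payload), 'utf8');
  const len = body.length;
  let header;
  if (len <= 125) {
    // FIN bit + opcode, then the length in 7 bits (mask bit clear).
    header = Buffer.from([0x80 | opcode, len]);
  } else if (len <= 0xffff) {
    header = Buffer.alloc(4);
    header[0] = 0x80 | opcode;
    header[1] = 126; // the next 2 bytes hold the length
    header.writeUInt16BE(len, 2);
  } else {
    header = Buffer.alloc(10);
    header[0] = 0x80 | opcode;
    header[1] = 127; // the next 8 bytes hold the length
    header.writeBigUInt64BE(BigInt(len), 2);
  }
  return Buffer.concat([header, body]);
}
```

`encodeFrame('hi')` produces four bytes, `0x81 0x02` followed by the two payload bytes, which is the two-byte overhead the handshake description refers to; larger payloads need 4 or 10 header bytes.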

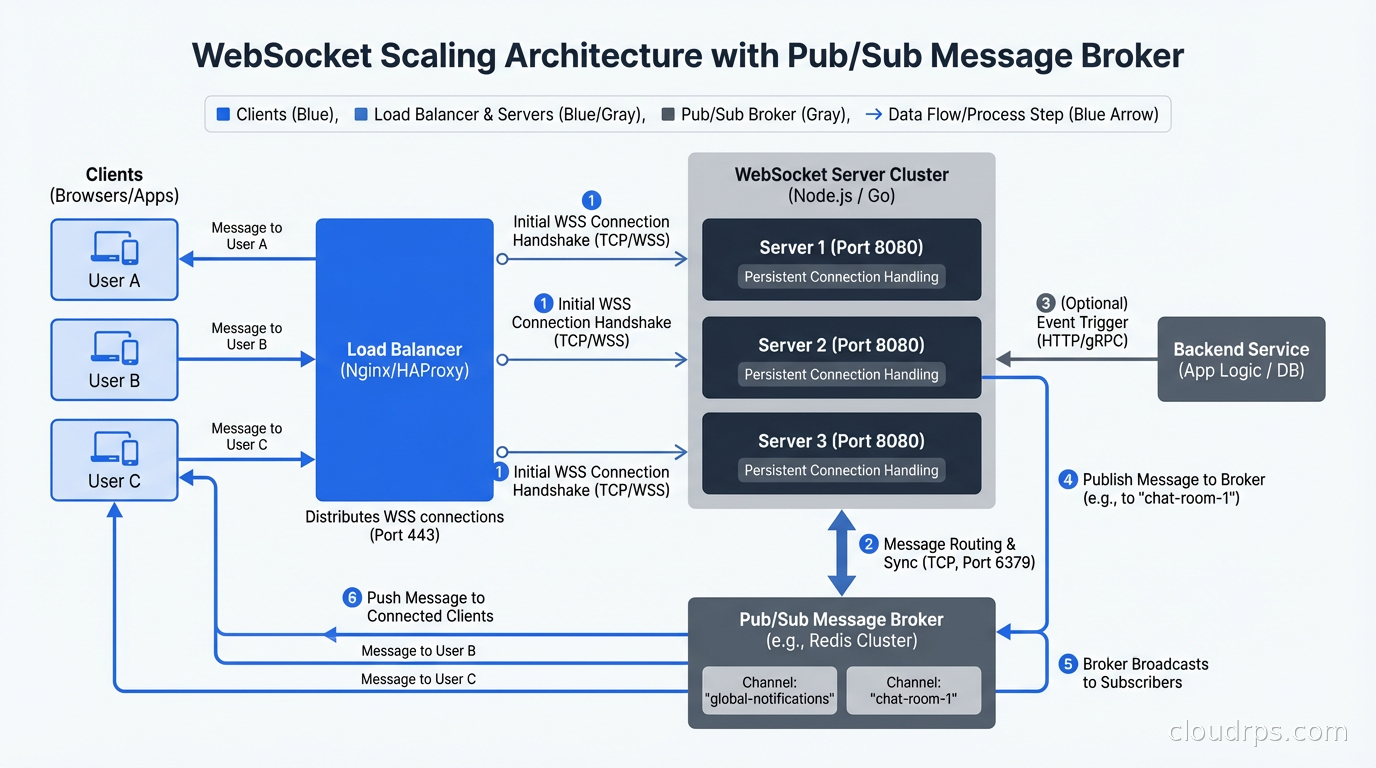

The server resource model for WebSockets is similar to long polling: each connection holds a socket open. The difference is that WebSocket connections are designed to be long-lived (hours to days), whereas long-polling connections cycle frequently. A chat application where users maintain a WebSocket connection for their entire session requires your server to handle thousands of concurrent open sockets, each consuming a file descriptor and a small amount of memory.

The infrastructure challenges with WebSockets deserve attention. Load balancers must be able to proxy the upgrade and hold long-lived connections, and you need either sticky sessions or a stateless architecture so that a client reconnecting to a different instance still works. Many CDNs have limited or no WebSocket support (Cloudflare supports WebSocket passthrough; many caching CDNs do not). Reverse proxies like nginx require explicit WebSocket configuration. Corporate firewalls and proxies sometimes block WebSocket upgrades or time out connections that look idle.

For gRPC-based microservices that need browser-accessible streaming, gRPC-Web offers a model similar to SSE: it supports unary and server-streaming calls over ordinary HTTP/1.1 or HTTP/2 responses, and its specification defines no WebSocket transport, so client-streaming and bidirectional calls are not available from the browser. This is an example of other architectural decisions constraining the choice of real-time pattern.

Use WebSockets when: You need true bidirectional real-time communication (collaborative editing, multiplayer games, live trading platforms, interactive chat with typing indicators), you need binary data transfer, you need very low per-message overhead, or you have low latency requirements for client-to-server messages.

Infrastructure Implications at Scale

At scale, the three patterns behave quite differently.

Long polling and SSE are HTTP requests from the server’s perspective. Horizontal scaling of your HTTP servers handles increased connection counts. Stateless servers work naturally if your event delivery can be decoupled from the server that holds the connection (using a message broker like Redis pub/sub, Kafka, or similar).

WebSockets require connection state. If user A’s WebSocket is connected to server instance 1 and user B’s WebSocket is connected to instance 2, a message that user A sends to user B must be routed from instance 1 to instance 2. The standard solution is a pub/sub broker: each server subscribes to channels for its connected users and publishes outgoing messages to the recipients’ channels, which the owning servers receive. This keeps instances interchangeable at the application level: no instance needs to know where a given user is connected, even though each connection itself is stateful.
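The broker pattern can be sketched with an in-memory `EventEmitter` standing in for Redis pub/sub. `Broker`, `ServerInstance`, and the `user:<id>` channel naming are hypothetical; with a real broker each instance would hold its own client connection, and the sockets would be WebSocket objects from your server library.

```javascript
const { EventEmitter } = require('node:events');

// In-memory stand-in for a shared broker such as Redis pub/sub.
class Broker {
  constructor() { this.bus = new EventEmitter(); }
  publish(channel, message) { this.bus.emit(channel, message); }
  subscribe(channel, handler) { this.bus.on(channel, handler); }
  unsubscribe(channel, handler) { this.bus.off(channel, handler); }
}

// One WebSocket server instance. A socket is anything with send(string).
class ServerInstance {
  constructor(broker) {
    this.broker = broker;
    this.connections = new Map(); // userId -> { socket, handler }, local only
  }

  connect(userId, socket) {
    const handler = (message) => socket.send(message);
    this.connections.set(userId, { socket, handler });
    this.broker.subscribe(`user:${userId}`, handler);
  }

  disconnect(userId) {
    const entry = this.connections.get(userId);
    if (!entry) return;
    this.broker.unsubscribe(`user:${userId}`, entry.handler);
    this.connections.delete(userId);
  }

  // The sender's instance never needs to know where the recipient is connected.
  sendToUser(fromUserId, toUserId, text) {
    this.broker.publish(`user:${toUserId}`, JSON.stringify({ from: fromUserId, text }));
  }
}
```

Redis pub/sub is fire-and-forget: a message published while the recipient is disconnected is dropped, and the same happens in this sketch. Offline delivery needs a persistent store alongside the broker.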

Proxies, load balancers, and any service mesh in the path need configuration for long-lived connections. Default idle timeouts, often around a minute, will terminate SSE and WebSocket connections (and held long-polling requests) that carry no traffic for that long. Configure connection timeouts on your proxies and load balancers to be much longer than the maximum expected interval between messages.
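Generous timeouts are usually paired with heartbeats so that even a quiet connection never looks idle. For SSE, a comment line (one starting with a colon) is enough, because EventSource ignores it. This sketch assumes a Node `http` response; the 15-second interval is an illustrative choice that should sit comfortably below the smallest idle timeout on the path. For WebSockets, the equivalent is a ping frame (the `ws` library exposes `ws.ping()`).

```javascript
// SSE heartbeat (sketch): periodically write a comment line so proxies and
// load balancers see traffic. EventSource ignores lines starting with ":".
function startHeartbeat(res, intervalMs = 15000) {
  const timer = setInterval(() => res.write(': keepalive\n\n'), intervalMs);
  const stop = () => clearInterval(timer);
  res.on('close', stop); // stop once the client goes away
  return stop;
}
```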

Connection counts become a resource constraint at high scale. A server process handling 100,000 concurrent WebSocket connections needs to be tuned for that: file descriptor limits increased, TCP buffer sizes configured, kernel connection tracking tables sized appropriately. Test your server’s behavior at target connection counts before production.

Choosing: A Decision Framework

The decision tree I use:

Does the client need to send messages in real time (not just receive)? If yes, WebSockets. If no, continue.

Do you need broad infrastructure compatibility (corporate proxies, basic CDNs, firewalls)? If yes, SSE or long polling. If no, WebSockets remains viable.

How frequent are server-to-client messages? Less than once per second: either SSE or long polling works. More than once per second with many connections: SSE, which has lower per-message overhead than long polling.

Do you need the browser’s built-in reconnection handling and Last-Event-ID support? If yes, SSE. If your application handles reconnection logic itself, long polling is equivalent.

Are you building notifications, live feeds, progress bars, dashboards? SSE. Collaborative editing, gaming, live trading? WebSockets. Simple polling for low-frequency status updates? Long polling.
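The questions above can be encoded as a small function, which can serve as a checklist in design reviews. This is one reading of the tree, not a rule: the field names are hypothetical, and the final branch follows the article's recommendation that SSE is the default for server-to-client features while long polling covers restrictive networks.

```javascript
// One reading of the decision tree as code (field names are hypothetical).
function choosePattern({
  clientSendsInRealTime,
  needsBroadCompatibility,
  serverMessagesPerSecond,
  wantsBuiltInReconnect,
}) {
  // Real-time client-to-server traffic: WebSockets.
  if (clientSendsInRealTime) return 'websocket';
  // From here on traffic is server-to-client only, so SSE or long polling.
  // Frequent messages favor SSE's lower per-message overhead.
  if (serverMessagesPerSecond > 1) return 'sse';
  // Browser-native reconnection and Last-Event-ID tracking favor SSE.
  if (wantsBuiltInReconnect) return 'sse';
  // Low frequency, app-managed reconnection: the two are equivalent, and
  // long polling is the safer choice on restrictive networks.
  return needsBroadCompatibility ? 'long-polling' : 'sse';
}
```

For example, a low-frequency status indicator that must work behind restrictive corporate proxies, `choosePattern({ clientSendsInRealTime: false, needsBroadCompatibility: true, serverMessagesPerSecond: 0.01, wantsBuiltInReconnect: false })`, returns `'long-polling'`.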

The right answer for most web applications today is SSE for server-to-client features and WebSockets for bidirectional features. Long polling remains relevant for compatibility scenarios and very low-frequency updates. Short polling is almost never the right answer.

Most modern web frameworks have first-class support for all three patterns. The implementation complexity difference is small. Choose based on the semantic requirements of your feature, not on which pattern you are most familiar with. Your infrastructure and your users will thank you for picking the right tool.
