I spent the better part of 2019 migrating a financial services client off OpenVPN. They had 40 office locations, a sprawling AWS VPC topology, and a VPN infrastructure that had accumulated two decades of configuration cruft. The OpenVPN daemons were leaking memory. Certificate rotation was manual. The throughput was embarrassing compared to what the underlying hardware could do. When I told them we were switching to WireGuard, the CISO asked me if it was really production-ready. “It’s in the Linux kernel,” I said. That ended the conversation.

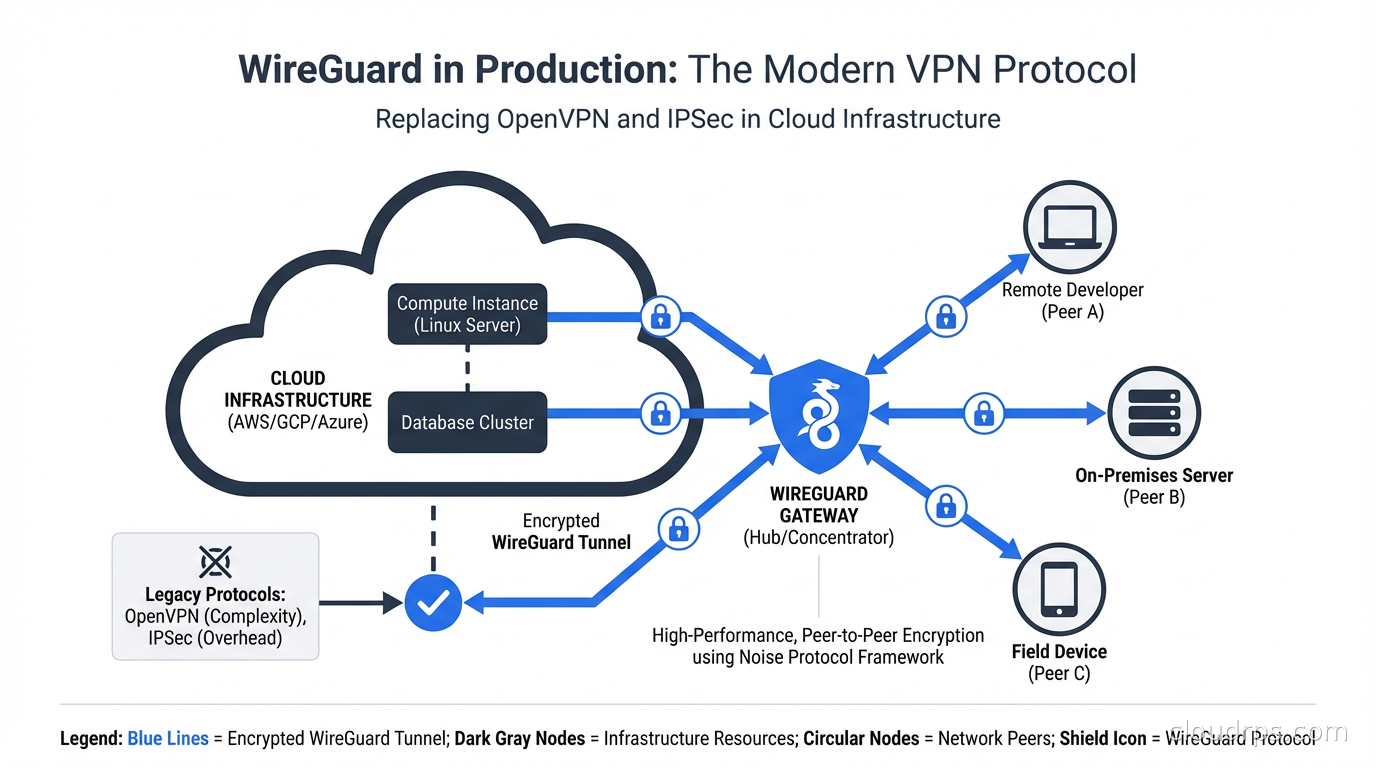

Five years later, WireGuard is not just production-ready. It is the de facto standard for cloud networking teams who care about performance, auditability, and operational simplicity. Cilium uses it for pod-to-pod encryption. Tailscale built a billion-dollar business on top of it. Kubernetes CNI plugins have native WireGuard backends. If you are still running OpenVPN for cloud infrastructure access in 2025, you owe yourself a serious look at what you are leaving on the table.

What Makes WireGuard Different

WireGuard is a VPN protocol, but that description undersells how radically different its design philosophy is from everything that came before it.

OpenVPN has roughly 400,000 lines of code. IPSec with its constellation of IKE daemons (strongSwan, Libreswan, racoon) is similarly sprawling. WireGuard’s kernel implementation was, at initial release, around 4,000 lines. The current codebase is still under 10,000 lines including the userspace tooling. This is not a minor implementation detail. Every line of code is a potential vulnerability surface. When your security-critical software is auditable by a single engineer in an afternoon, that is a feature, not a limitation.

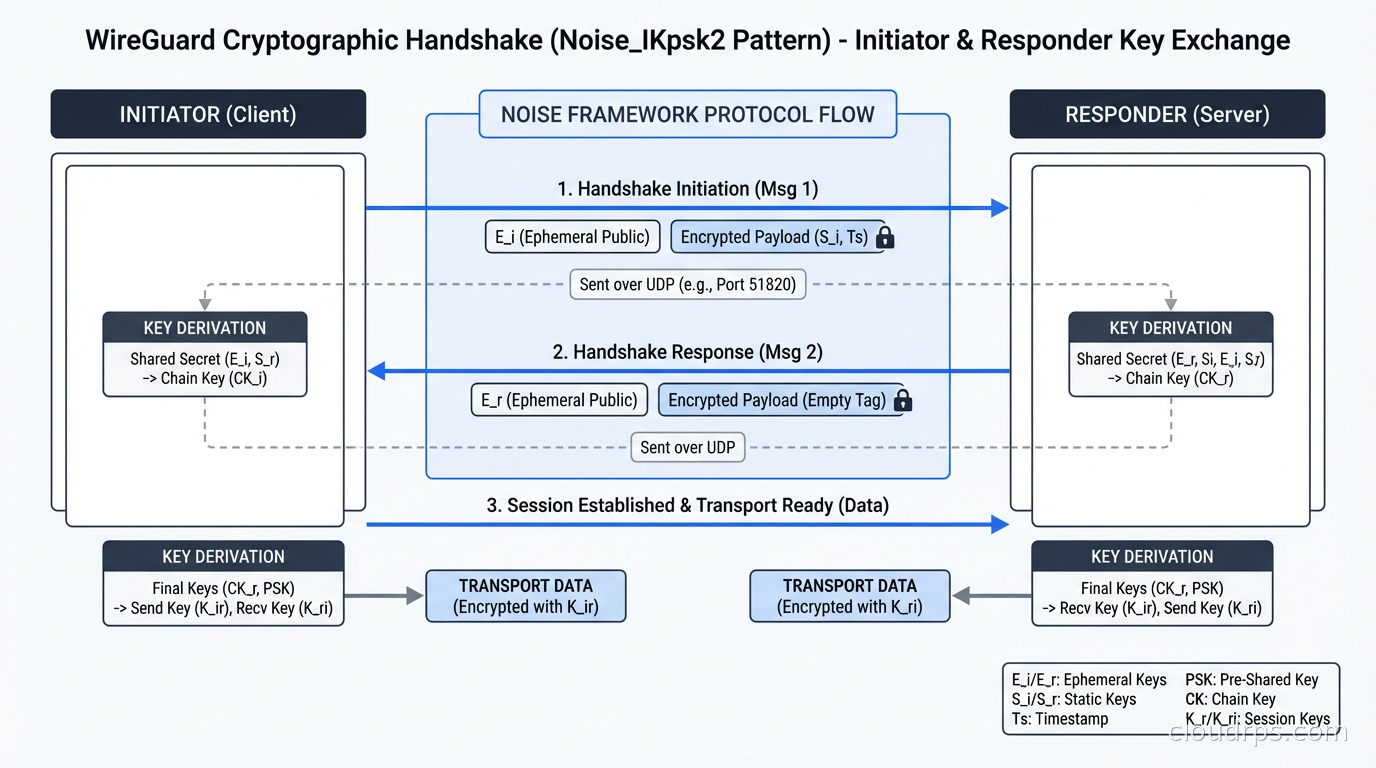

The protocol makes opinionated cryptographic choices and then sticks with them:

- Curve25519 for key exchange (elliptic-curve Diffie-Hellman)

- ChaCha20 for symmetric encryption

- Poly1305 for message authentication

- BLAKE2s for hashing

- SipHash24 for hashtable keys

- HKDF for key derivation

There is no negotiation. No cipher suites. No TLS handshake that can be downgraded through misconfiguration to RC4. WireGuard ships with one set of cryptographic primitives and updates them with the protocol version when something better comes along. Compare that to TLS’s historical track record, where the flexibility to negotiate ciphers created decades of vulnerabilities: BEAST, POODLE, FREAK, Logjam.

The handshake is built on the Noise protocol framework, specifically the Noise_IKpsk2 handshake pattern. It completes in a single round trip, and the cryptographic work on each side takes well under a millisecond, compared to OpenVPN’s several-hundred-millisecond, multi-round-trip TLS handshake. This latency difference matters enormously when you are initializing thousands of connections across a Kubernetes cluster.
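To make the HKDF entry in the primitive list concrete, here is a minimal sketch of generic HKDF extract-and-expand (RFC 5869) built on HMAC-BLAKE2s using only the Python standard library. The function names, the all-zero chaining key, and the dummy shared secret are assumptions for illustration; this is not WireGuard's actual key schedule, only the general shape of deriving several fixed-size keys from one Diffie-Hellman output.

```python
import hashlib
import hmac


def hmac_blake2s(key: bytes, data: bytes) -> bytes:
    """HMAC with BLAKE2s as the underlying hash (32-byte output)."""
    return hmac.new(key, data, hashlib.blake2s).digest()


def hkdf_extract(salt: bytes, input_key_material: bytes) -> bytes:
    """HKDF-Extract: condense the input into one pseudorandom key."""
    return hmac_blake2s(salt, input_key_material)


def hkdf_expand(prk: bytes, n_keys: int, info: bytes = b"") -> list:
    """HKDF-Expand: derive n_keys independent 32-byte keys from prk."""
    keys, previous = [], b""
    for counter in range(1, n_keys + 1):
        previous = hmac_blake2s(prk, previous + info + bytes([counter]))
        keys.append(previous)
    return keys


# Illustrative inputs only: a zeroed chaining key and a dummy DH result.
chaining_key = bytes(32)
shared_secret = b"\x01" * 32

prk = hkdf_extract(chaining_key, shared_secret)
next_chaining_key, session_key = hkdf_expand(prk, 2)
```

Because the hash, the output size, and the construction are all fixed, there is nothing for two peers to negotiate: both sides run the same derivation and either arrive at the same keys or fail.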

The Peer Model: Simpler Than You Think

WireGuard has no concept of a client and server in the traditional sense. Every node is a peer. Each peer has a public and private key pair (generated with wg genkey and wg pubkey) and an optional pre-shared key for post-quantum resistance.

Configuration looks like this:

    [Interface]
    Address = 10.200.0.1/24
    ListenPort = 51820
    PrivateKey = <base64-private-key>

    [Peer]
    PublicKey = <base64-peer-public-key>
    AllowedIPs = 10.200.0.2/32
    Endpoint = 203.0.113.5:51820
    PersistentKeepalive = 25

AllowedIPs is the routing filter. WireGuard accepts a decrypted packet from a peer only if its source IP falls within that peer’s AllowedIPs, and it routes an outbound packet to a peer only if the destination falls within that peer’s AllowedIPs. This replaces much of the complex firewall rule set you had to layer on top of OpenVPN to prevent lateral movement.
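The two-way role of AllowedIPs can be sketched in a few lines of Python. This is a simplified model rather than the kernel's data structure: the peer names and the `PEERS` table are hypothetical, and outbound selection is modeled as a longest-prefix match over each peer's ranges.

```python
import ipaddress

# Hypothetical peer table: peer name -> AllowedIPs (CIDR strings).
PEERS = {
    "laptop-alice": ["10.200.0.2/32"],
    "office-gw": ["10.200.0.3/32", "192.168.10.0/24"],
}


def _networks(cidrs):
    return [ipaddress.ip_network(cidr) for cidr in cidrs]


def peer_for_destination(dst, peers=PEERS):
    """Outbound: choose the peer whose AllowedIPs contain dst (longest prefix wins)."""
    addr = ipaddress.ip_address(dst)
    best = None  # (prefix length, peer name)
    for name, cidrs in peers.items():
        for net in _networks(cidrs):
            if addr in net and (best is None or net.prefixlen > best[0]):
                best = (net.prefixlen, name)
    return best[1] if best else None  # None means no peer owns this destination


def accept_from_peer(peer, src, peers=PEERS):
    """Inbound: accept a decrypted packet only if src is in the sender's AllowedIPs."""
    addr = ipaddress.ip_address(src)
    return any(addr in net for net in _networks(peers.get(peer, [])))
```

With this table, a packet for 192.168.10.7 is sent to `office-gw`, while a packet that arrives from `laptop-alice` claiming a source of 192.168.10.7 is dropped, because that address is outside her AllowedIPs.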

PersistentKeepalive keeps NAT mappings alive. In cloud environments where your instances are behind NAT (every AWS EC2 instance not in a public subnet, for example), setting this to 25 seconds is standard practice.

There is no persistent connection state to manage. From the operator's point of view, WireGuard is connectionless. While traffic is flowing, peers perform a fresh handshake roughly every two minutes to generate new session keys (the rekey timer is 120 seconds, a fixed protocol constant rather than a tunable default). If a peer disappears, there is nothing to clean up. No connection pool. No half-open socket waiting for a timeout. This is why WireGuard works seamlessly on laptops that sleep and wake, jumping between coffee shop WiFi and corporate networks, in ways that OpenVPN never could.

Performance Numbers That Actually Matter

In my twenty years of running VPN infrastructure, I have never seen performance improvements this dramatic from a protocol swap. WireGuard runs in the Linux kernel, which means it processes packets in kernel space rather than paying the cost of copying data back and forth to userspace for every packet like OpenVPN does.

Benchmarks vary by hardware, but you should expect:

- Throughput: WireGuard saturates 10 Gbps links on modern hardware. OpenVPN on the same hardware typically peaks at 600-800 Mbps.

- Latency: WireGuard adds under 0.1ms of overhead. OpenVPN adds 1-2ms.

- CPU: WireGuard uses ChaCha20-Poly1305, which needs no dedicated instructions like AES-NI and runs fast in software on virtually every modern CPU by using ordinary SIMD units. The userspace overhead of OpenVPN swamps any cipher efficiency gains.

For interactive developer access to production environments, the throughput numbers are less important than latency. When you are running database queries or SSHing into a box and running vim, the round-trip latency of every keystroke adds up. WireGuard’s sub-millisecond overhead makes remote access feel local in ways that OpenVPN simply does not.

Cloud Topology Patterns

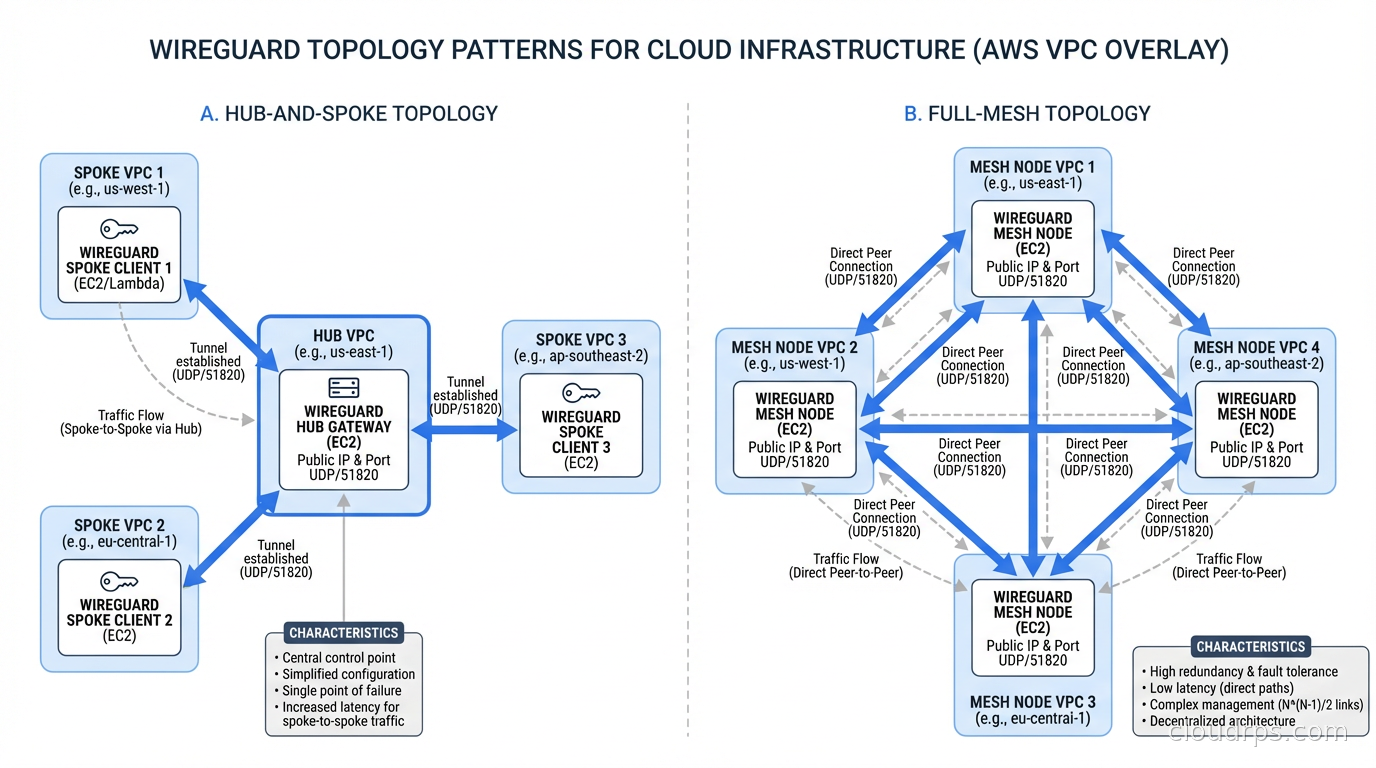

WireGuard supports two primary topology patterns, and which one you choose depends on your scale and connectivity model.

Hub-and-spoke: A central server (or cluster of servers for HA) holds the AllowedIPs for all peers and routes traffic between them. Peers only need the public key and endpoint of the hub. Simple to manage, single point of control, but the hub becomes a bottleneck and a failure domain.

Full mesh: Every peer has the public key and endpoint of every other peer. Traffic flows directly between peers without going through a hub. Better performance, no central bottleneck, but configuration complexity scales as O(n^2). At 10 nodes, a full mesh means 45 peer relationships. At 100 nodes, it is 4,950. You need automation at that scale.

This is the fundamental problem that tools like Tailscale (commercial, hosted control plane), Headscale (open-source self-hosted Tailscale control plane), and Netmaker (open-source mesh network manager) solve. They handle key distribution, peer discovery, and configuration synchronization, leaving WireGuard to do what it does well: encrypt and route packets.
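The peer-relationship counts quoted for full mesh come from the pair formula n(n-1)/2. A minimal Python sketch, with hypothetical function names, that reproduces the numbers and enumerates the pairs an automation tool would have to configure:

```python
from itertools import combinations


def mesh_tunnels(n):
    """Number of peer relationships in a full mesh of n nodes."""
    return n * (n - 1) // 2


def mesh_pairs(nodes):
    """Every unordered pair of nodes; each pair needs keys and endpoints exchanged."""
    return list(combinations(nodes, 2))


print(mesh_tunnels(10))   # 45
print(mesh_tunnels(100))  # 4950
print(mesh_pairs(["a", "b", "c"]))  # [('a', 'b'), ('a', 'c'), ('b', 'c')]
```

In a hub-and-spoke layout the same n nodes need only n-1 relationships, which is why hand-maintained configuration stays practical there for much longer.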

For cloud infrastructure, I typically recommend a hub-and-spoke model for developer access (the hub lives in a central VPC or on dedicated EC2 instances) and a full mesh for inter-service communication where you want direct paths between nodes without a chokepoint.

If you need secure connectivity between AWS VPCs across regions, read how VPC peering, Transit Gateway, and PrivateLink work before layering WireGuard on top. WireGuard complements but does not replace AWS-native connectivity primitives. For cross-cloud or hybrid connectivity that AWS networking cannot handle natively, WireGuard meshes fill the gap cleanly.

WireGuard in Kubernetes

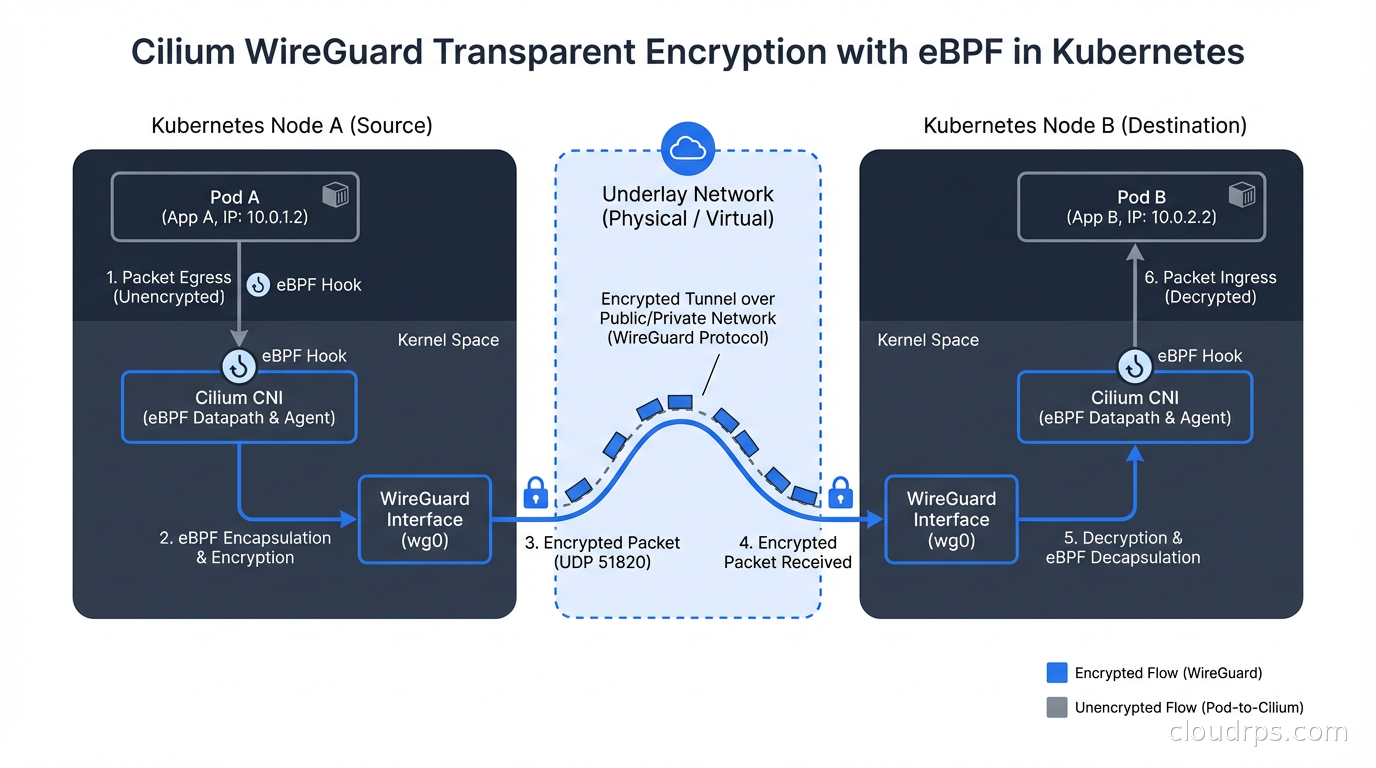

This is where WireGuard has moved from “interesting protocol” to “essential infrastructure component.” Kubernetes needs encryption between nodes for two reasons: defense in depth (you cannot fully trust the underlying network provider) and compliance (regulations requiring data-in-transit encryption are common in finance and healthcare).

The traditional answer was IPSec overlays, which worked but were notoriously painful to operate. WireGuard has largely replaced them.

Cilium is the most prominent example. Cilium is a Kubernetes CNI plugin built on eBPF that offers WireGuard as a transparent encryption mode. You enable it with a single Helm value:

    encryption:
      enabled: true
      type: wireguard

Cilium then automatically generates WireGuard key pairs for each node, distributes the public keys through a Kubernetes CRD, and encrypts all pod-to-pod traffic crossing node boundaries. The eBPF programs in the kernel intercept packets before they hit the NIC and pass them through WireGuard before transmission. There is no per-pod sidecar, no extra latency hop, no TCP connection overhead. This is why Cilium with WireGuard encryption has dramatically lower overhead than Istio with mutual TLS. For more on how eBPF enables kernel-level packet interception, see the deep dive.

Flannel also has a native WireGuard backend, configured by setting Backend.Type to wireguard in the Flannel ConfigMap. It is simpler than Cilium but lacks Cilium’s network policy enforcement and observability capabilities.

Kilo is worth knowing if you are running multi-cluster or edge Kubernetes deployments. Kilo creates a WireGuard mesh between Kubernetes nodes across different networks or cloud providers, enabling cross-cluster pod networking without requiring VPC peering or cloud-provider-specific connectivity solutions.

For teams running Kubernetes who have worked through network policies and CNI plugins, WireGuard encryption is the natural complement to network policy enforcement. Policy controls which pods can talk to which. WireGuard ensures those conversations are encrypted even when they traverse untrusted network segments.

Replacing Bastion Hosts with WireGuard

One of the highest-leverage changes I have made in production environments is replacing traditional bastion host architectures with WireGuard. The classic bastion pattern has operational problems that accumulate over time: SSH key management, jump host availability, audit logging complexity, and the latency of double-hopping SSH connections.

WireGuard offers a cleaner model. Every engineer’s laptop becomes a peer. The WireGuard gateway (running on a small EC2 instance or a pair for HA) has AllowedIPs configured to cover the private subnet CIDR ranges of your VPCs. Once connected, engineers have native network access to private resources without SSH tunneling.

The critical operational requirement is key revocation. Since WireGuard has no PKI, you revoke access by removing a peer’s public key from the gateway configuration. With proper automation (I use a simple API backed by a database of approved public keys, with a CI pipeline to generate and apply configurations), this can happen in under a minute. That is faster than most PKI-based revocation workflows.

This approach aligns naturally with zero trust security principles. The network access granted through WireGuard is narrow (specific AllowedIPs ranges, specific ports controlled by security groups downstream), time-limited if you automate key expiry, and auditable through VPC flow logs that capture WireGuard traffic the same as any other traffic.
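The revocation workflow described above (a database of approved public keys rendered into gateway configuration) can be sketched as follows. The record fields (`public_key`, `allowed_ips`, `revoked`), the placeholder keys, and the function name are all hypothetical; a real pipeline would read them from its own data store and inject the private key from a secrets manager.

```python
def render_gateway_config(private_key, listen_port, peers):
    """Render a gateway config that contains only peers that are not revoked."""
    lines = [
        "[Interface]",
        f"PrivateKey = {private_key}",
        f"ListenPort = {listen_port}",
    ]
    # Sort for stable output so config diffs in CI stay readable.
    for peer in sorted(peers, key=lambda p: p["public_key"]):
        if peer.get("revoked"):
            continue  # revocation is simply omission from the rendered file
        lines += [
            "",
            "[Peer]",
            f"PublicKey = {peer['public_key']}",
            f"AllowedIPs = {', '.join(peer['allowed_ips'])}",
        ]
    return "\n".join(lines) + "\n"


# Illustrative records; the key strings are placeholders, not real keys.
APPROVED = [
    {"public_key": "<alice-public-key>", "allowed_ips": ["10.200.0.2/32"], "revoked": False},
    {"public_key": "<bob-public-key>", "allowed_ips": ["10.200.0.3/32"], "revoked": True},
]

config_text = render_gateway_config("<gateway-private-key>", 51820, APPROVED)
```

One way to apply a file like this without restarting the interface is `wg syncconf`, which reconciles the running peer set against the file; whether that fits depends on how the gateway is deployed.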

Tailscale, Headscale, and Netmaker

If you are not building a custom WireGuard deployment, the tools built on top of WireGuard are worth understanding.

Tailscale uses WireGuard under the hood with a centrally managed control plane that handles peer discovery, NAT traversal (their DERP relay network for when direct connections cannot be established), ACL enforcement, and device authentication through an identity provider. For small teams or developer access scenarios, Tailscale’s free tier covers 100 devices and the operator experience is exceptional. The downside is that your control plane lives on Tailscale’s infrastructure.

Headscale is an open-source reimplementation of the Tailscale control plane. You self-host it, you own the peer database, you control the key rotation schedule. I run Headscale for clients who need the Tailscale experience without the dependency on a third-party SaaS. The Headscale project maintains compatibility with the Tailscale client apps, so engineers use the same CLI they are familiar with.

Netmaker takes a different approach: it is a full WireGuard network management platform with its own clients, a REST API for configuration, and support for Kubernetes gateway nodes. It handles the peer-to-peer hole punching that raw WireGuard cannot do. WireGuard requires you to know peer endpoints in advance; NAT traversal requires either a relay or a protocol for discovering external endpoints.

For pure cloud-to-cloud connectivity where all endpoints have static public IPs or DNS names, raw WireGuard with Terraform-generated configurations works well. For developer access scenarios with laptops behind home NAT routers, you need Tailscale, Headscale, or a relay setup.

What WireGuard Does Not Solve

After twenty years in this industry, I have watched too many teams adopt a new tool as the answer to every problem and then discover the edges. WireGuard has real limitations worth understanding before you commit.

No built-in PKI: WireGuard uses raw public keys. There is no certificate hierarchy, no OCSP, no CRL. Key distribution and revocation are your problem entirely. For small deployments this is manageable. For enterprise scale with hundreds of peers, you need tooling around it.

No dynamic routing: WireGuard does not run BGP or OSPF. AllowedIPs are static configuration. If your network topology changes frequently (common in environments with auto-scaling groups), you need automation to keep configurations current. This is solvable but requires engineering investment.

No per-connection logging at the protocol level: WireGuard’s kernel implementation logs at the handshake level, not the connection level. If you need per-connection audit trails, you need to implement this in your overlay tooling or rely on VPC flow logs for coarser-grained visibility.

User-space implementations have different performance profiles: On platforms where WireGuard does not have kernel support (Linux kernels older than 5.6 without the out-of-tree module, macOS, some BSDs), user-space implementations like wireguard-go are available but lose much of the performance advantage over OpenVPN. Windows used to be in this group, but it now has a kernel driver in WireGuardNT.

For service mesh use cases where you need mutual TLS with per-service certificates, automatic rotation, and rich L7 observability, a dedicated service mesh is still the better tool. See the article on Istio ambient mesh for how sidecarless mTLS compares. WireGuard is L3 encryption; it knows nothing about HTTP, gRPC, or application-layer protocols.

Production Deployment Checklist

Before you go live with WireGuard in a production environment, work through these operational requirements.

Key management: Generate keys with wg genkey, store private keys in HashiCorp Vault or AWS Secrets Manager, and never commit them to source control. Rotate gateway keys on a defined schedule. Quarterly is common for gateway keys; engineer keys should rotate when engineers leave or on a shorter cycle in high-security environments.

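MTU configuration: WireGuard adds 60 bytes of overhead to each packet when the outer transport is IPv4 (20-byte IP header, 8-byte UDP header, 32 bytes of WireGuard header and authentication tag) and 80 bytes when it is IPv6. If your underlying MTU is 1500 (standard Ethernet), your WireGuard interface MTU should be 1420, which is safe for both cases. Getting this wrong causes intermittent failures with large payloads that pass small-packet tests. This trips up nearly everyone’s first production deployment.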
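The arithmetic behind the MTU recommendation can be written out as a small Python sketch. The constant and function names are illustrative; the byte counts are the standard IPv4, IPv6, and UDP header sizes plus WireGuard's 16-byte data header and 16-byte Poly1305 tag.

```python
IPV4_HEADER = 20
IPV6_HEADER = 40
UDP_HEADER = 8
WG_DATA_HEADER = 16  # message type, reserved bytes, receiver index, counter
WG_AUTH_TAG = 16     # Poly1305 authentication tag


def wireguard_overhead(outer_ipv6):
    """Bytes added to each tunneled packet for the given outer IP version."""
    ip_header = IPV6_HEADER if outer_ipv6 else IPV4_HEADER
    return ip_header + UDP_HEADER + WG_DATA_HEADER + WG_AUTH_TAG


def tunnel_mtu(link_mtu, outer_ipv6=True):
    """Largest inner packet that fits; defaults to the conservative IPv6 case."""
    return link_mtu - wireguard_overhead(outer_ipv6)


print(wireguard_overhead(outer_ipv6=False))  # 60
print(wireguard_overhead(outer_ipv6=True))   # 80
print(tunnel_mtu(1500))                      # 1420
```

Sizing for the IPv6 case costs 20 bytes per packet on IPv4-only paths, but it means the tunnel keeps working if an endpoint later becomes reachable over IPv6.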

Firewall rules: WireGuard uses UDP. Your security groups or firewall rules need to allow inbound UDP on your WireGuard port (default 51820, but use a non-standard port in hostile network environments). TCP-only egress proxies will break WireGuard entirely.

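Monitoring: Export WireGuard metrics by parsing the machine-readable output of wg show all dump (or by running one of the community Prometheus exporters that wrap it) and ship them to Prometheus. The key metrics are latest handshake time (for a peer with PersistentKeepalive set or steady traffic, no completed handshake in more than three minutes means it is offline) and transfer bytes (a flat line means the tunnel is dead or idle).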
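As a rough sketch of the handshake-age check, the following Python parses the tab-separated output of `wg show <interface> dump` for a single interface, assuming the documented layout: one interface line, then one line per peer with public key, preshared key, endpoint, allowed IPs, latest handshake (Unix seconds, 0 if never), received bytes, sent bytes, and keepalive. The sample text, placeholder keys, and function names are made up for illustration.

```python
import time

STALE_AFTER_SECONDS = 180  # the three-minute threshold used in the text


def parse_dump(text):
    """Parse single-interface dump output into a list of peer dicts."""
    peers = []
    for line in text.strip().splitlines()[1:]:  # first line describes the interface
        fields = line.split("\t")
        if len(fields) != 8:
            continue  # skip anything that is not a peer line
        peers.append({
            "public_key": fields[0],
            "endpoint": fields[2],
            "allowed_ips": fields[3].split(","),
            "latest_handshake": int(fields[4]),
            "rx_bytes": int(fields[5]),
            "tx_bytes": int(fields[6]),
        })
    return peers


def stale_peers(peers, now=None):
    """Public keys of peers with no handshake inside the threshold."""
    now = time.time() if now is None else now
    return [
        p["public_key"] for p in peers
        if p["latest_handshake"] == 0
        or now - p["latest_handshake"] > STALE_AFTER_SECONDS
    ]


# Made-up sample in the assumed format; keys are placeholders.
SAMPLE_DUMP = "\n".join([
    "\t".join(["<private-key>", "<public-key>", "51820", "off"]),
    "\t".join(["<peer-a-key>", "(none)", "203.0.113.5:51820",
               "10.200.0.2/32", "1700000000", "1024", "2048", "25"]),
    "\t".join(["<peer-b-key>", "(none)", "(none)",
               "10.200.0.3/32", "0", "0", "0", "off"]),
])

peers = parse_dump(SAMPLE_DUMP)
```

The same parsed fields map directly onto gauges (handshake age) and counters (bytes received and sent) if you expose them to Prometheus yourself rather than using an existing exporter.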

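High availability: WireGuard itself has no HA mechanism. For gateway HA, run two gateway nodes with the same private key (this is allowed in WireGuard’s model) behind a UDP-capable load balancer or use BGP anycast routing to route to the nearest healthy gateway. Session keys live on the node that completed the handshake, so the load balancer must consistently send a given peer’s packets to the same gateway; after a failover, the peer simply handshakes again with the surviving node.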

The encryption principles that govern data-in-transit protection apply here too. WireGuard handles in-transit encryption at the network layer. You still need encryption at rest for data written to disk, and you still need proper TLS for application-layer communication with external services. These are complementary, not redundant.

The Verdict

WireGuard is not a magic solution to every networking problem. It is a genuinely excellent kernel-level VPN protocol with a clean cryptographic design, exceptional performance, and growing native support across the cloud-native ecosystem. For developer access to cloud infrastructure, inter-VPC connectivity where AWS-native options do not fit, and Kubernetes node-to-node encryption, it is my first choice in 2025.

The question is not whether to use WireGuard. The question is whether to manage it yourself or use a tool like Tailscale or Headscale that handles the operational complexity of key distribution and peer discovery. For most teams, the answer is Headscale self-hosted or raw WireGuard with Terraform automation. For teams that want zero operational burden on the control plane and are comfortable with a SaaS dependency, Tailscale is excellent.

If you are still running OpenVPN in production, start with a pilot. Pick one use case (developer laptop access is the easiest), deploy WireGuard alongside OpenVPN for a month, and let the performance metrics make the argument for you. They always do.
