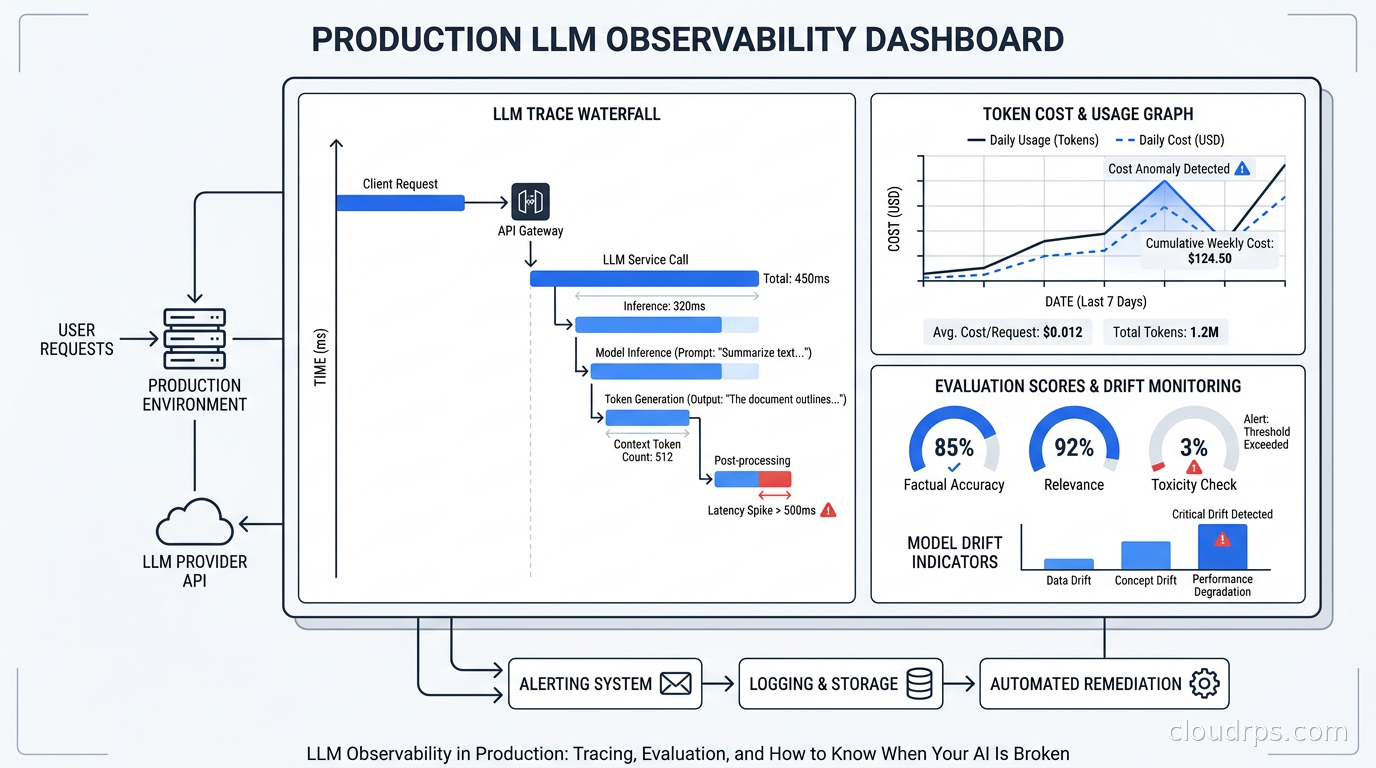

LLM Observability in Production: Tracing, Evaluation, and How to Know When Your AI Is Broken

A practical guide to instrumenting LLM applications with the OpenTelemetry GenAI semantic conventions, choosing between Langfuse, LangSmith, and Arize Phoenix, tracking token costs, and running evaluations in production.