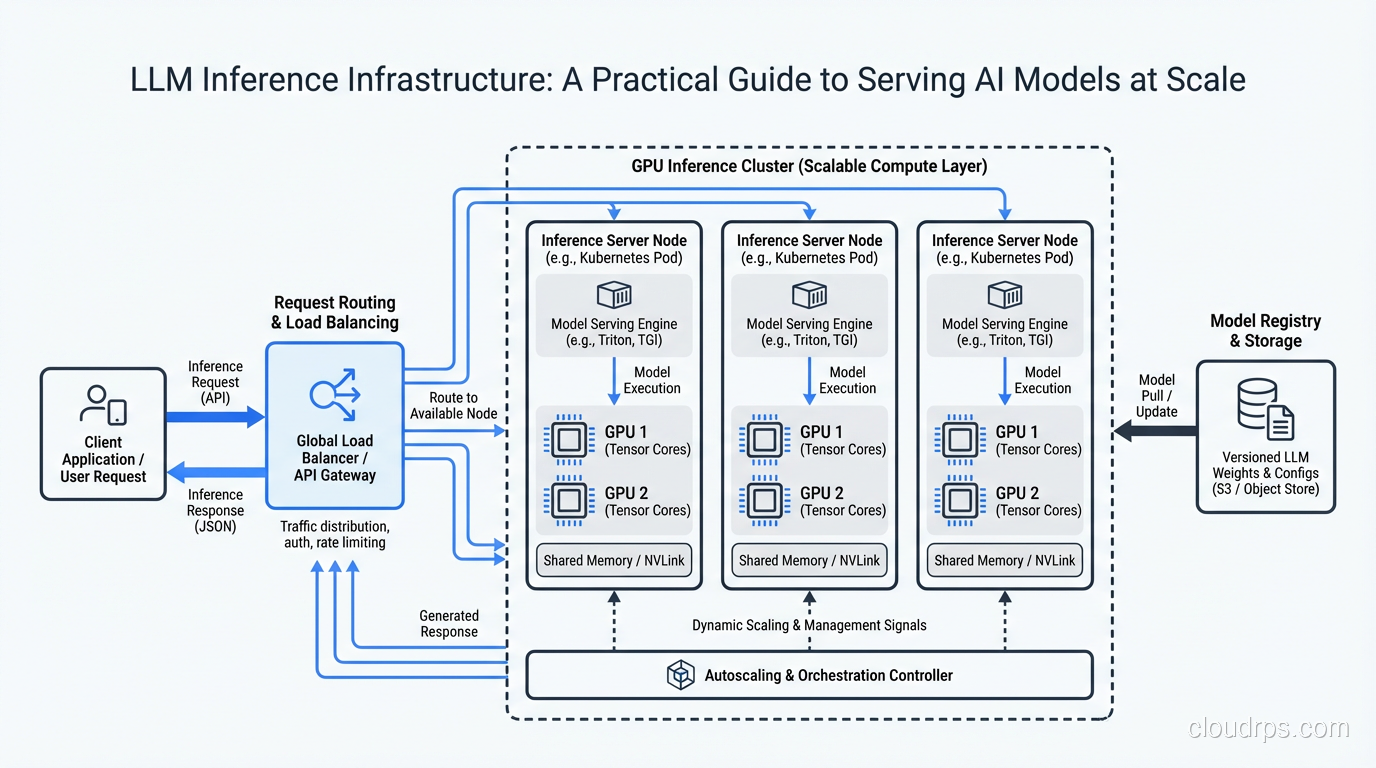

LLM Inference Infrastructure: A Practical Guide to Serving AI Models at Scale

How to build production-ready LLM inference infrastructure: GPU selection, model serving frameworks, batching strategies, and cost optimization for AI workloads.