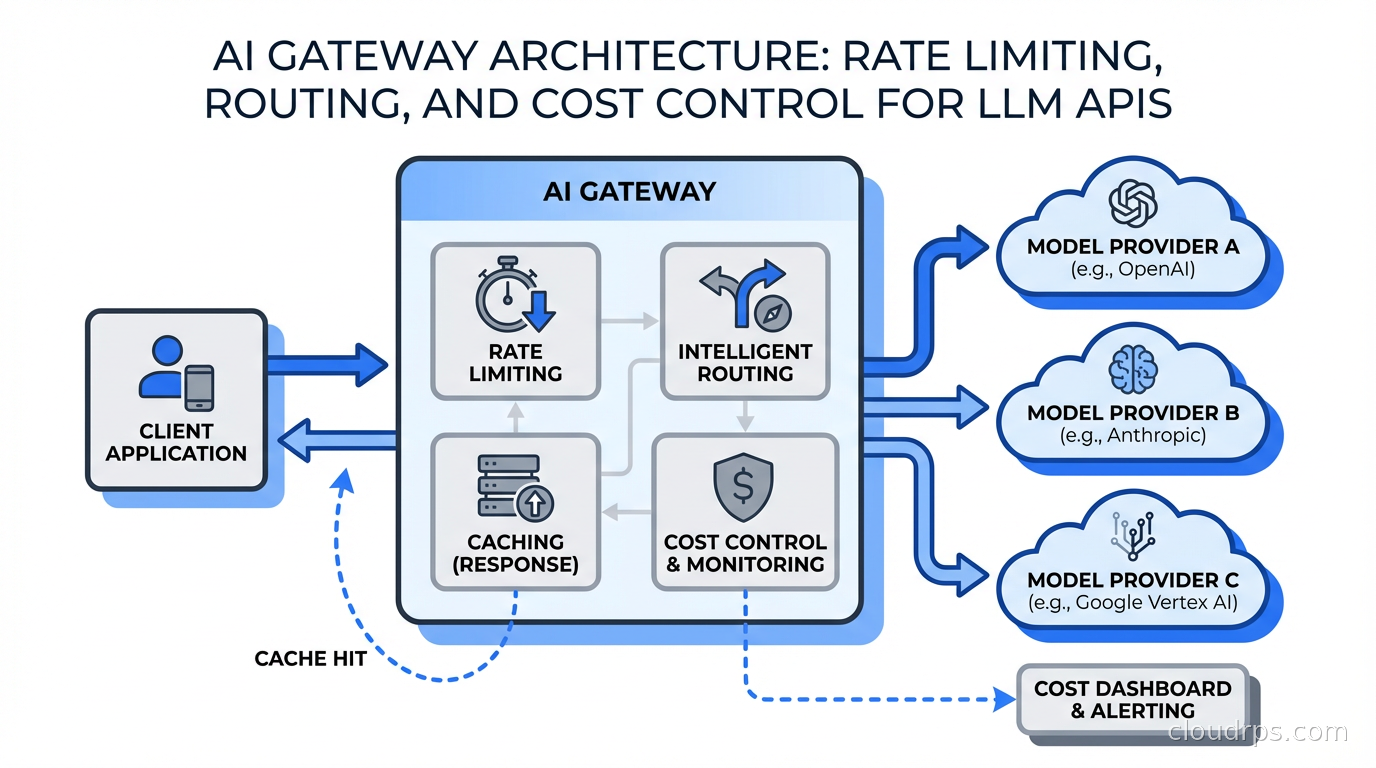

AI Gateway Architecture: Rate Limiting, Routing, and Cost Control for LLM APIs

A practical guide to building and operating AI gateways that sit in front of LLM APIs: semantic caching, model routing, rate limiting, cost attribution, and observability at scale.